Add ddim noise comparative analysis pipeline (#2665)

* add DDIM Noise Comparative Analysis pipeline
* update README
* add comments
* run BLACK format

parent d185c0dfa7

commit 268ebcb015

@@ -29,7 +29,7 @@ MagicMix | Diffusion Pipeline for semantic mixing of an image and a text prompt
| Stable UnCLIP | Diffusion Pipeline for combining prior model (generate clip image embedding from text, UnCLIPPipeline `"kakaobrain/karlo-v1-alpha"`) and decoder pipeline (decode clip image embedding to image, StableDiffusionImageVariationPipeline `"lambdalabs/sd-image-variations-diffusers"` ). | [Stable UnCLIP](#stable-unclip) | - |[Ray Wang](https://wrong.wang) |
| UnCLIP Text Interpolation Pipeline | Diffusion Pipeline that allows passing two prompts and produces images while interpolating between the text-embeddings of the two prompts | [UnCLIP Text Interpolation Pipeline](#unclip-text-interpolation-pipeline) | - | [Naga Sai Abhinay Devarinti](https://github.com/Abhinay1997/) |
| UnCLIP Image Interpolation Pipeline | Diffusion Pipeline that allows passing two images/image_embeddings and produces images while interpolating between their image-embeddings | [UnCLIP Image Interpolation Pipeline](#unclip-image-interpolation-pipeline) | - | [Naga Sai Abhinay Devarinti](https://github.com/Abhinay1997/) |
| DDIM Noise Comparative Analysis Pipeline | Investigating how the diffusion models learn visual concepts from each noise level (which is a contribution of [P2 weighting (CVPR 2022)](https://arxiv.org/abs/2204.00227)) | [DDIM Noise Comparative Analysis Pipeline](#ddim-noise-comparative-analysis-pipeline) | - |[Aengus (Duc-Anh)](https://github.com/aengusng8) |

@@ -1033,4 +1033,44 @@ The resulting images in order:-

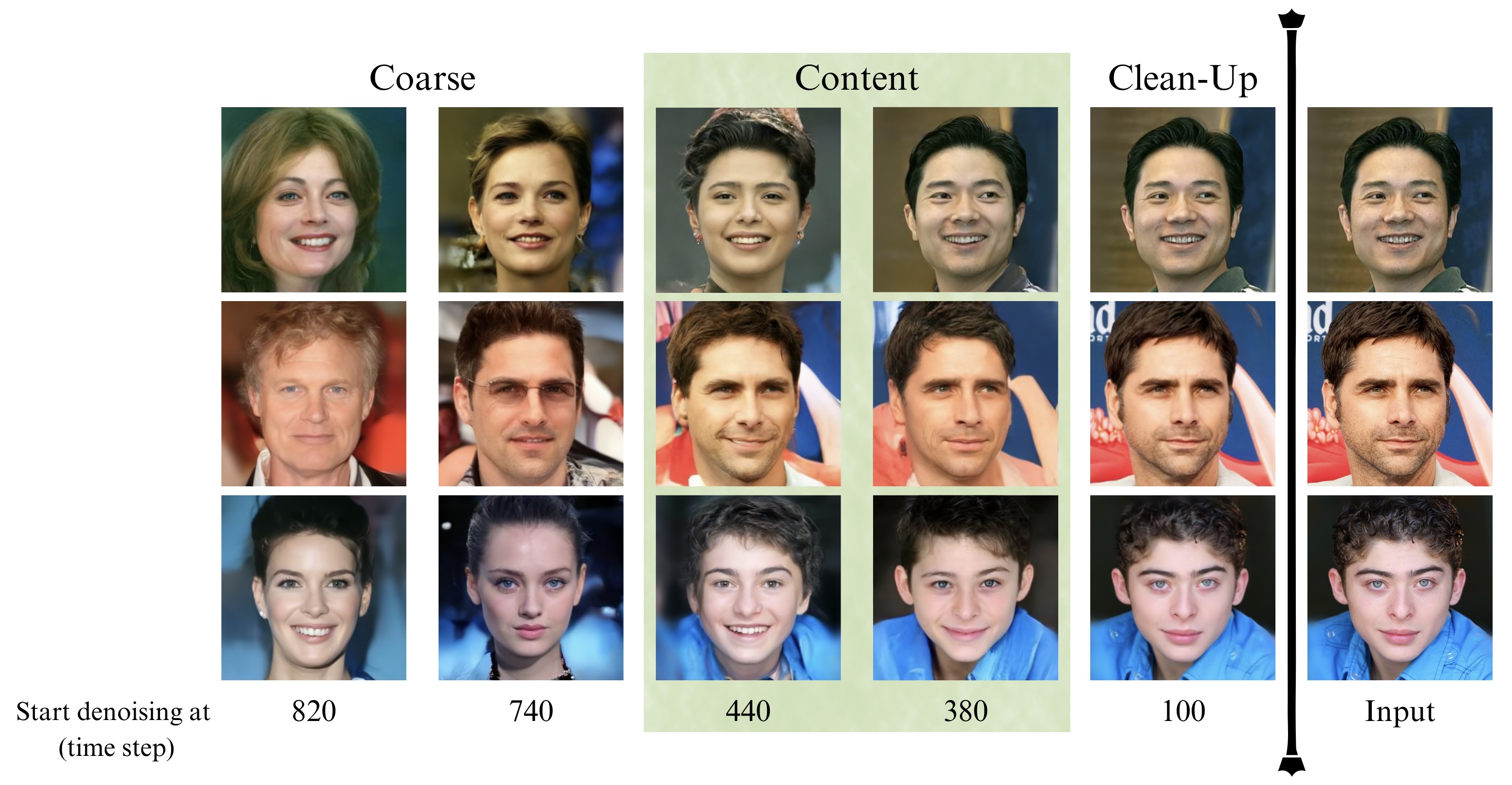

### DDIM Noise Comparative Analysis Pipeline

#### **Research question: What visual concepts do the diffusion models learn from each noise level during training?**

The [P2 weighting (CVPR 2022)](https://arxiv.org/abs/2204.00227) paper proposed an approach to answer the above question, which is its second contribution.

The approach consists of the following steps:

1. The input is an image x0.
2. Perturb it to xt using a diffusion process q(xt|x0).
    - `strength` is a value between 0.0 and 1.0 that controls the amount of noise added to the input image. Values that approach 1.0 allow for lots of variations but will also produce images that are not semantically consistent with the input.
3. Reconstruct the image with the learned denoising process pθ(x̂0|xt).
4. Compare x0 and x̂0 among various t to show how each step contributes to the sample.
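
The perturbation in step 2 has a closed form, which the pipeline delegates to `scheduler.add_noise`. The snippet below is a minimal, library-free sketch of that formula. It assumes a linear beta schedule (1e-4 to 0.02 over 1000 steps), and the helper names `alphas_cumprod` and `perturb` are hypothetical, chosen only for illustration.

```python
import math
import random


def alphas_cumprod(num_train_timesteps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for an assumed linear beta schedule."""
    out, running = [], 1.0
    for i in range(num_train_timesteps):
        beta = beta_start + (beta_end - beta_start) * i / (num_train_timesteps - 1)
        running *= 1.0 - beta
        out.append(running)
    return out


def perturb(x0, t, abar, rng):
    """Sample x_t ~ q(x_t | x_0) = N(sqrt(abar_t) * x_0, (1 - abar_t) * I)."""
    signal = math.sqrt(abar[t])
    sigma = math.sqrt(1.0 - abar[t])
    return [signal * v + sigma * rng.gauss(0.0, 1.0) for v in x0]


abar = alphas_cumprod()
rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]  # a toy "image" with pixel values in [-1, 1]
for t in (80, 480, 980):
    # The signal scale sqrt(abar_t) shrinks as t grows, so less of x0 survives.
    print(t, round(math.sqrt(abar[t]), 3), perturb(x0, t, abar, rng))
```

Larger timesteps keep less of the original signal, which is why high `strength` values yield reconstructions that drift away from the input.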

The authors used the [openai/guided-diffusion](https://github.com/openai/guided-diffusion) model to denoise images from the FFHQ dataset. This pipeline extends their second contribution by investigating DDIM on any input image.

```python
import torch
from PIL import Image
import numpy as np

from diffusers import DiffusionPipeline

image_path = "path/to/your/image"  # images from CelebA-HQ might be better
image_pil = Image.open(image_path)
image_name = image_path.split("/")[-1].split(".")[0]

device = torch.device("cpu" if not torch.cuda.is_available() else "cuda")
pipe = DiffusionPipeline.from_pretrained(
    "google/ddpm-ema-celebahq-256",
    custom_pipeline="ddim_noise_comparative_analysis",
)
pipe = pipe.to(device)

# sweep the noise level; each output file is named after the timestep at which noise was added
for strength in np.linspace(0.1, 1, 25):
    denoised_image, latent_timestep = pipe(
        image_pil, strength=strength, return_dict=False
    )
    denoised_image = denoised_image[0]
    denoised_image.save(
        f"noise_comparative_analysis_{image_name}_{latent_timestep}.png"
    )
```
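
Each saved file is named after the timestep at which noise was injected, which depends on `strength`. As a rough sketch of that mapping, the function below mirrors the arithmetic of the pipeline's `get_timesteps`. The descending, evenly spaced timestep list is an assumption about the scheduler's spacing (1000 training steps, 50 inference steps), and `first_noised_timestep` is a hypothetical helper name.

```python
def first_noised_timestep(strength, num_inference_steps=50, num_train_timesteps=1000):
    """Return the timestep at which noise is added, or None if no steps remain."""
    # Assumed scheduler timesteps: 980, 960, ..., 20, 0 for the defaults above.
    step = num_train_timesteps // num_inference_steps
    timesteps = [i * step for i in range(num_inference_steps)][::-1]

    # Same arithmetic as the pipeline's get_timesteps.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    t_start = max(num_inference_steps - init_timestep, 0)
    remaining = timesteps[t_start:]
    return remaining[0] if remaining else None


for strength in (0.1, 0.5, 1.0):
    print(strength, first_noised_timestep(strength))
```

Under these assumptions, a `strength` of 0.1 starts denoising from timestep 80 and a `strength` of 1.0 from timestep 980, while a `strength` of 0 leaves no timesteps to run.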

Here is the result of this pipeline (which uses DDIM) on the CelebA-HQ dataset.
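
Step 4 of the approach compares x0 with each reconstruction. One simple way to put a number on that comparison is a per-pixel error such as MSE or PSNR. This is an illustrative choice, not the metric used by the paper or by this pipeline, and the function names and toy pixel values below are hypothetical.

```python
import math


def mse(a, b):
    """Mean squared error between two equally sized flat pixel sequences."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same number of pixels")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


def psnr(a, b, max_value=1.0):
    """Peak signal-to-noise ratio in dB; infinite when the inputs are identical."""
    err = mse(a, b)
    return math.inf if err == 0 else 10.0 * math.log10(max_value**2 / err)


# Toy pixel values in [0, 1]; real inputs would be flattened image arrays.
x0 = [0.0, 0.5, 1.0, 0.25]
x0_hat_low_noise = [0.0, 0.5, 0.9, 0.25]
x0_hat_high_noise = [0.4, 0.1, 0.6, 0.75]

print(psnr(x0, x0_hat_low_noise))
print(psnr(x0, x0_hat_high_noise))
```

A higher PSNR means the reconstruction stays closer to the input, so plotting it against the noised timestep gives a rough picture of where the original content is lost.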

@@ -0,0 +1,190 @@

# Copyright 2022 The HuggingFace Team. All rights reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

from typing import List, Optional, Tuple, Union

import PIL
import torch
from torchvision import transforms

from diffusers.pipeline_utils import DiffusionPipeline, ImagePipelineOutput
from diffusers.schedulers import DDIMScheduler
from diffusers.utils import randn_tensor


trans = transforms.Compose(
    [
        transforms.Resize((256, 256)),
        transforms.ToTensor(),
        transforms.Normalize([0.5], [0.5]),
    ]
)


def preprocess(image):
    if isinstance(image, torch.Tensor):
        return image
    elif isinstance(image, PIL.Image.Image):
        image = [image]

    image = [trans(img.convert("RGB")) for img in image]
    image = torch.stack(image)
    return image


class DDIMNoiseComparativeAnalysisPipeline(DiffusionPipeline):
    r"""
    This model inherits from [`DiffusionPipeline`]. Check the superclass documentation for the generic methods the
    library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

    Parameters:
        unet ([`UNet2DModel`]): U-Net architecture to denoise the encoded image.
        scheduler ([`SchedulerMixin`]):
            A scheduler to be used in combination with `unet` to denoise the encoded image. Can be one of
            [`DDPMScheduler`], or [`DDIMScheduler`].
    """

    def __init__(self, unet, scheduler):
        super().__init__()

        # make sure scheduler can always be converted to DDIM
        scheduler = DDIMScheduler.from_config(scheduler.config)

        self.register_modules(unet=unet, scheduler=scheduler)

    def check_inputs(self, strength):
        if strength < 0 or strength > 1:
            raise ValueError(f"The value of strength should be in [0.0, 1.0] but is {strength}")

    def get_timesteps(self, num_inference_steps, strength, device):
        # get the original timestep using init_timestep
        init_timestep = min(int(num_inference_steps * strength), num_inference_steps)

        t_start = max(num_inference_steps - init_timestep, 0)
        timesteps = self.scheduler.timesteps[t_start:]

        return timesteps, num_inference_steps - t_start

    def prepare_latents(self, image, timestep, batch_size, dtype, device, generator=None):
        if not isinstance(image, (torch.Tensor, PIL.Image.Image, list)):
            raise ValueError(
                f"`image` has to be of type `torch.Tensor`, `PIL.Image.Image` or list but is {type(image)}"
            )

        init_latents = image.to(device=device, dtype=dtype)

        if isinstance(generator, list) and len(generator) != batch_size:
            raise ValueError(
                f"You have passed a list of generators of length {len(generator)}, but requested an effective batch"
                f" size of {batch_size}. Make sure the batch size matches the length of the generators."
            )

        shape = init_latents.shape
        noise = randn_tensor(shape, generator=generator, device=device, dtype=dtype)

        # get latents
        print("add noise to latents at timestep", timestep)
        init_latents = self.scheduler.add_noise(init_latents, noise, timestep)
        latents = init_latents

        return latents

    @torch.no_grad()
    def __call__(
        self,
        image: Union[torch.FloatTensor, PIL.Image.Image] = None,
        strength: float = 0.8,
        batch_size: int = 1,
        generator: Optional[Union[torch.Generator, List[torch.Generator]]] = None,
        eta: float = 0.0,
        num_inference_steps: int = 50,
        use_clipped_model_output: Optional[bool] = None,
        output_type: Optional[str] = "pil",
        return_dict: bool = True,
    ) -> Union[ImagePipelineOutput, Tuple]:
        r"""
        Args:
            image (`torch.FloatTensor` or `PIL.Image.Image`):
                `Image`, or tensor representing an image batch, that will be used as the starting point for the
                process.
            strength (`float`, *optional*, defaults to 0.8):
                Conceptually, indicates how much to transform the reference `image`. Must be between 0 and 1. `image`
                will be used as a starting point, adding more noise to it the larger the `strength`. The number of
                denoising steps depends on the amount of noise initially added. When `strength` is 1, added noise will
                be maximum and the denoising process will run for the full number of iterations specified in
                `num_inference_steps`. A value of 1, therefore, essentially ignores `image`.
            batch_size (`int`, *optional*, defaults to 1):
                The number of images to generate.
            generator (`torch.Generator`, *optional*):
                One or a list of [torch generator(s)](https://pytorch.org/docs/stable/generated/torch.Generator.html)
                to make generation deterministic.
            eta (`float`, *optional*, defaults to 0.0):
                The eta parameter which controls the scale of the variance (0 is DDIM and 1 is one type of DDPM).
            num_inference_steps (`int`, *optional*, defaults to 50):
                The number of denoising steps. More denoising steps usually lead to a higher quality image at the
                expense of slower inference.
            use_clipped_model_output (`bool`, *optional*, defaults to `None`):
                if `True` or `False`, see documentation for `DDIMScheduler.step`. If `None`, nothing is passed
                downstream to the scheduler. So use `None` for schedulers which don't support this argument.
            output_type (`str`, *optional*, defaults to `"pil"`):
                The output format of the generated image. Choose between
                [PIL](https://pillow.readthedocs.io/en/stable/): `PIL.Image.Image` or `np.array`.
            return_dict (`bool`, *optional*, defaults to `True`):
                Whether or not to return a [`~pipelines.ImagePipelineOutput`] instead of a plain tuple.

        Returns:
            [`~pipelines.ImagePipelineOutput`] or `tuple`: [`~pipelines.utils.ImagePipelineOutput`] if `return_dict` is
            True, otherwise a `tuple`. When returning a tuple, the first element is a list with the generated images.
        """

        # 1. Check inputs. Raise error if not correct
        self.check_inputs(strength)

        # 2. Preprocess image
        image = preprocess(image)

        # 3. set timesteps
        self.scheduler.set_timesteps(num_inference_steps, device=self.device)
        timesteps, num_inference_steps = self.get_timesteps(num_inference_steps, strength, self.device)
        latent_timestep = timesteps[:1].repeat(batch_size)

        # 4. Prepare latent variables
        latents = self.prepare_latents(image, latent_timestep, batch_size, self.unet.dtype, self.device, generator)
        image = latents

        # 5. Denoising loop
        for t in self.progress_bar(timesteps):
            # 1. predict noise model_output
            model_output = self.unet(image, t).sample

            # 2. predict previous mean of image x_t-1 and add variance depending on eta
            # eta corresponds to η in paper and should be between [0, 1]
            # do x_t -> x_t-1
            image = self.scheduler.step(
                model_output,
                t,
                image,
                eta=eta,
                use_clipped_model_output=use_clipped_model_output,
                generator=generator,
            ).prev_sample

        image = (image / 2 + 0.5).clamp(0, 1)
        image = image.cpu().permute(0, 2, 3, 1).numpy()
        if output_type == "pil":
            image = self.numpy_to_pil(image)

        if not return_dict:
            return (image, latent_timestep.item())

        return ImagePipelineOutput(images=image)
