
🤗 Diffusers provides pretrained diffusion models across multiple modalities, such as vision and audio, and serves as a modular toolbox for inference and training of diffusion models.

More precisely, 🤗 Diffusers offers:

- State-of-the-art diffusion pipelines that can be run in inference with just a couple of lines of code (see src/diffusers/pipelines).

- Various noise schedulers that can be used interchangeably for the preferred speed vs. quality trade-off in inference (see src/diffusers/schedulers).

- Multiple types of models, such as UNet, that can be used as building blocks in an end-to-end diffusion system (see src/diffusers/models).

- Training examples to show how to train the most popular diffusion models (see examples).

Quickstart

In order to get started, we recommend taking a look at two notebooks:

- The Getting started with Diffusers notebook, which showcases an end-to-end example of usage for diffusion models, schedulers and pipelines. Take a look at this notebook to learn how to use the pipeline abstraction, which takes care of everything (model, scheduler, noise handling) for you, and to understand each independent building block in the library.

- The Training a diffusers model notebook, which summarizes diffusion model training methods. This notebook takes a step-by-step approach to training your diffusion model on an image dataset, with explanatory graphics.

Examples

If you want to run the code yourself 💻, you can try out:

# !pip install diffusers transformers

from diffusers import DiffusionPipeline

model_id = "CompVis/ldm-text2im-large-256"

# load model and scheduler

ldm = DiffusionPipeline.from_pretrained(model_id)

# run pipeline in inference (sample random noise and denoise)

prompt = "A painting of a squirrel eating a burger"

images = ldm([prompt], num_inference_steps=50, eta=0.3, guidance_scale=6)["sample"]

# save images

for idx, image in enumerate(images):
    image.save(f"squirrel-{idx}.png")

# !pip install diffusers

from diffusers import DDPMPipeline, DDIMPipeline, PNDMPipeline

model_id = "google/ddpm-celebahq-256"

# load model and scheduler

ddpm = DDPMPipeline.from_pretrained(model_id) # you can replace DDPMPipeline with DDIMPipeline or PNDMPipeline for faster inference

# run pipeline in inference (sample random noise and denoise)

image = ddpm()["sample"]

# save image

image[0].save("ddpm_generated_image.png")

If you just want to play around with some web demos, you can try out the following 🚀 Spaces:

| Model | Hugging Face Spaces |

|---|---|

| Text-to-Image Latent Diffusion |  |

| Faces generator |  |

| DDPM with different schedulers |  |

Definitions

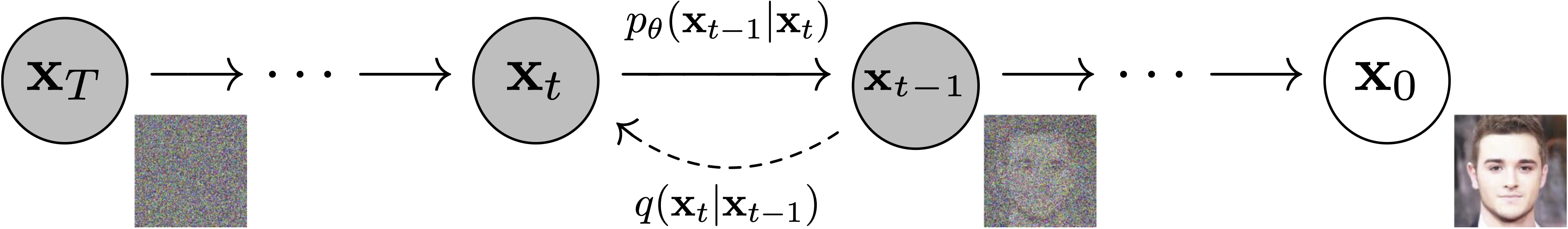

Models: Neural network that models $p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t)$ (see image below) and is trained end-to-end to denoise a noisy input to an image.

Examples: UNet, Conditioned UNet, 3D UNet, Transformer UNet

Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
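For reference, the DDPM paper parameterizes this reverse transition as a Gaussian whose mean is computed from a noise prediction $\boldsymbol{\epsilon}_\theta$. The notation below follows that paper (with $\alpha_t = 1 - \beta_t$ and $\bar{\alpha}_t = \prod_{s \le t} \alpha_s$) and is not specific to this library:

```latex
p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t)
  = \mathcal{N}\big(\mathbf{x}_{t-1};\ \boldsymbol{\mu}_\theta(\mathbf{x}_t, t),\ \boldsymbol{\Sigma}_\theta(\mathbf{x}_t, t)\big),
\qquad
\boldsymbol{\mu}_\theta(\mathbf{x}_t, t)
  = \frac{1}{\sqrt{\alpha_t}}\Big(\mathbf{x}_t - \frac{\beta_t}{\sqrt{1-\bar{\alpha}_t}}\,\boldsymbol{\epsilon}_\theta(\mathbf{x}_t, t)\Big)
```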

Schedulers: Algorithm class for both inference and training. The class provides functionality to compute the previous image according to the alpha, beta schedule, as well as to predict noise for training. Examples: DDPM, DDIM, PNDM, DEIS

Sampling and training algorithms. Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
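To make the two roles of a scheduler concrete, here is a minimal pure-Python sketch of a DDPM-style scheduler with a linear beta schedule. `ToyDDPMScheduler` and its method names are hypothetical and chosen for illustration only; this is not the implementation in src/diffusers/schedulers, and samples are plain lists of floats rather than tensors.

```python
import math
import random


class ToyDDPMScheduler:
    """Hypothetical, simplified scheduler; not the diffusers implementation."""

    def __init__(self, num_train_timesteps=1000, beta_start=1e-4, beta_end=0.02):
        n = num_train_timesteps
        # Linear beta schedule, as used in the DDPM paper.
        self.betas = [beta_start + (beta_end - beta_start) * i / (n - 1) for i in range(n)]
        self.alphas = [1.0 - b for b in self.betas]
        # Cumulative product: alpha_bar_t = prod_{s <= t} alpha_s.
        self.alphas_cumprod = []
        running = 1.0
        for a in self.alphas:
            running *= a
            self.alphas_cumprod.append(running)

    def add_noise(self, x0, noise, t):
        """Training side: draw x_t from q(x_t | x_0) in closed form."""
        a_bar = self.alphas_cumprod[t]
        return [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * e for x, e in zip(x0, noise)]

    def step(self, predicted_noise, t, x_t, rng=random):
        """Inference side: compute x_{t-1} from x_t and the model's noise prediction."""
        beta, alpha, a_bar = self.betas[t], self.alphas[t], self.alphas_cumprod[t]
        coef = beta / math.sqrt(1.0 - a_bar)
        mean = [(x - coef * e) / math.sqrt(alpha) for x, e in zip(x_t, predicted_noise)]
        if t == 0:
            return mean  # no noise is added at the final step
        sigma = math.sqrt(beta)  # sigma_t^2 = beta_t, one of the variance choices in the paper
        return [m + sigma * rng.gauss(0.0, 1.0) for m in mean]


scheduler = ToyDDPMScheduler()
x0 = [0.5, -1.0]
noise = [0.3, -0.7]
x_noisy = scheduler.add_noise(x0, noise, t=0)
x_recovered = scheduler.step(noise, 0, x_noisy)  # a perfect noise prediction undoes the step
```

Keeping the schedule and the update rule in one object, separate from the network, is what lets the same model be paired with different samplers.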

Diffusion Pipeline: End-to-end pipeline that includes multiple diffusion models, possibly text encoders, and other components. Examples: Glide, Latent-Diffusion, Imagen, DALL-E 2

Figure from Imagen (https://imagen.research.google/).
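The sketch below shows, under simplifying assumptions, how a pipeline ties a model and a scheduler together in a denoising loop. All names (`ToyDDIMScheduler`, `make_toy_model`, `ToyPipeline`) are hypothetical stand-ins, not library classes. The "model" is an analytic noise predictor for a dataset whose every value equals `target`, and the scheduler uses a deterministic DDIM-style update (eta = 0).

```python
import math
import random


class ToyDDIMScheduler:
    """Hypothetical deterministic DDIM-style scheduler on a linear beta schedule."""

    def __init__(self, num_train_timesteps=1000, beta_start=1e-4, beta_end=0.02):
        n = num_train_timesteps
        self.alphas_cumprod, running = [], 1.0
        for i in range(n):
            running *= 1.0 - (beta_start + (beta_end - beta_start) * i / (n - 1))
            self.alphas_cumprod.append(running)
        self.stride = 1
        self.timesteps = list(range(n))[::-1]

    def set_timesteps(self, num_inference_steps):
        # Evenly spaced subset of the training timesteps, ordered from noisy to clean.
        self.stride = len(self.alphas_cumprod) // num_inference_steps
        self.timesteps = [i * self.stride for i in range(num_inference_steps)][::-1]

    def step(self, predicted_noise, t, x_t):
        a_t = self.alphas_cumprod[t]
        prev_t = t - self.stride
        a_prev = self.alphas_cumprod[prev_t] if prev_t >= 0 else 1.0
        out = []
        for x, e in zip(x_t, predicted_noise):
            # Estimate the clean sample, then re-noise it to the previous timestep.
            x0_pred = (x - math.sqrt(1.0 - a_t) * e) / math.sqrt(a_t)
            out.append(math.sqrt(a_prev) * x0_pred + math.sqrt(1.0 - a_prev) * e)
        return out


def make_toy_model(scheduler, target):
    """Stand-in for a trained UNet: the exact noise predictor when all data equals `target`."""
    def model(x_t, t):
        a_t = scheduler.alphas_cumprod[t]
        return [(x - math.sqrt(a_t) * target) / math.sqrt(1.0 - a_t) for x in x_t]
    return model


class ToyPipeline:
    """Hypothetical pipeline: samples noise, then alternates model and scheduler calls."""

    def __init__(self, model, scheduler):
        self.model = model
        self.scheduler = scheduler

    def __call__(self, num_values=4, num_inference_steps=50, seed=0):
        rng = random.Random(seed)
        self.scheduler.set_timesteps(num_inference_steps)
        sample = [rng.gauss(0.0, 1.0) for _ in range(num_values)]
        for t in self.scheduler.timesteps:
            predicted_noise = self.model(sample, t)
            sample = self.scheduler.step(predicted_noise, t, sample)
        return {"sample": sample}


scheduler = ToyDDIMScheduler()
pipe = ToyPipeline(make_toy_model(scheduler, target=0.5), scheduler)
result = pipe(num_values=4, num_inference_steps=50)["sample"]
```

Because the loop only relies on `set_timesteps`, `timesteps` and `step`, any scheduler exposing those three members could be swapped in without touching the model.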

Philosophy

- Readability and clarity are preferred over highly optimized code. Strong emphasis is placed on providing readable, intuitive and elementary code design. E.g., the provided schedulers are separated from the provided models and provide well-commented code that can be read alongside the original paper.

- Diffusers is modality independent and focuses on providing pretrained models and tools to build systems that generate continuous outputs, e.g. vision and audio.

- Diffusion models and schedulers are provided as concise, elementary building blocks. In contrast, diffusion pipelines are a collection of end-to-end diffusion systems that can be used out-of-the-box, should stay as close as possible to their original implementation, and can include components of other libraries, such as text encoders. Examples of diffusion pipelines are Glide and Latent Diffusion.

Installation

pip install diffusers # should install diffusers 0.1.2
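To confirm which version ended up in the environment, one option is to query the package metadata from the standard library. The helper name `installed_version` is hypothetical; `importlib.metadata` requires Python 3.8 or newer.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of `package`, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


# Prints the installed diffusers version, or None if the package is missing.
print(installed_version("diffusers"))
```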

In the works

For the first release, 🤗 Diffusers focuses on text-to-image diffusion techniques. However, diffusers can be used for much more than that! Over the upcoming releases, we'll be focusing on:

- Diffusers for audio

- Diffusers for reinforcement learning (initial work happening in https://github.com/huggingface/diffusers/pull/105).

- Diffusers for video generation

- Diffusers for molecule generation (initial work happening in https://github.com/huggingface/diffusers/pull/54)

A few pipeline components are already being worked on, namely:

- BDDMPipeline for spectrogram-to-sound vocoding

- GLIDEPipeline to support OpenAI's GLIDE model

- Grad-TTS for text to audio generation / conditional audio generation

We want diffusers to be a toolbox useful for diffusion models in general; if you find yourself limited in any way by the current API, or would like to see additional models, schedulers, or techniques, please open a GitHub issue mentioning what you would like to see.

Credits

This library concretizes previous work by many different authors and would not have been possible without their great research and implementations. We'd like to thank, in particular, the following implementations which have helped us in our development and without which the API could not have been as polished today:

- @CompVis' latent diffusion models library, available here

- @hojonathanho's original DDPM implementation, available here, as well as the extremely useful translation into PyTorch by @pesser, available here

- @ermongroup's DDIM implementation, available here.

- @yang-song's Score-VE and Score-VP implementations, available here

We also want to thank @heejkoo for the very helpful overview of papers, code and resources on diffusion models, available here, as well as @crowsonkb and @rromb for useful discussions and insights.