The API to create tokens is missing the ability to set the required

scopes for tokens, and to show them on the API and on the UI.

This PR adds this functionality.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Close: #22910

---

I don't understand why the API endpoint
`GET /repos/{owner}/{repo}/pulls/{index}/files` requires the caller to pass
the `limit` and `page` parameters.

In my case, the caller only needs to pass `skip-to` for paging. This is
consistent with `GET /{owner}/{repo}/pulls/{index}/files`.

So I deleted the code related to `listOptions`.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Some bugs are caused by a lack of unit tests in fundamental packages. This PR
refactors the `setting` package so that writing unit tests becomes easier
than before.

- Every `LoadFromXXX` function has been split into two functions:
  `InitProviderFromXXX` and `LoadCommonSettings`. The first only contains
  the code that creates a new ini file. The second loads the common settings.
- All functions in `setting` are renamed from `newXXXService` to
  `loadXXXSetting` or `loadXXXFrom` to make the function names less
  confusing.
- `XORMLog` is renamed to `SQLLog` because it's a better name.

Maybe we should eventually move these `loadXXXSetting` calls into the
`XXXInit` functions? Any ideas?

---------

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: delvh <dev.lh@web.de>

This PR refactors and improves the password hashing code within gitea

and makes it possible for server administrators to set the password

hashing parameters

In addition it takes the opportunity to adjust the settings for `pbkdf2`

in order to make the hashing a little stronger.

The majority of this work was inspired by PR #14751 and I would like to

thank @boppy for their work on this.

Thanks to @gusted for the suggestion to adjust the `pbkdf2` hashing

parameters.

Close #14751

---------

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Add a new "exclusive" option per label. This makes it so that when the

label is named `scope/name`, no other label with the same `scope/`

prefix can be set on an issue.

The scope is determined by the last occurrence of `/`, so for example

`scope/alpha/name` and `scope/beta/name` are considered to be in

different scopes and can coexist.
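
The scope rule described above can be sketched in a few lines of Go. This is only an illustration: the helper name `exclusiveScope` is hypothetical and is not taken from the PR.

```go
package main

import (
	"fmt"
	"strings"
)

// exclusiveScope returns everything before the last "/" in a label name.
// Labels without a "/" have no scope, so the result is "".
// The function name is an assumption made for this sketch.
func exclusiveScope(name string) string {
	i := strings.LastIndex(name, "/")
	if i < 0 {
		return ""
	}
	return name[:i]
}

func main() {
	fmt.Println(exclusiveScope("scope/alpha/name")) // scope/alpha
	fmt.Println(exclusiveScope("scope/beta/name"))  // scope/beta
	fmt.Println(exclusiveScope("bug") == "")        // true
}
```

Because the two example labels resolve to different scopes, neither would displace the other on an issue.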

Exclusive scopes are not enforced by any database rules, however they

are enforced when editing labels at the models level, automatically

removing any existing labels in the same scope when either attaching a

new label or replacing all labels.

In menus use a circle instead of checkbox to indicate they function as

radio buttons per scope. Issue filtering by label ensures that only a

single scoped label is selected at a time. Clicking with alt key can be

used to remove a scoped label, both when editing individual issues and

batch editing.

Label rendering refactor for consistency and code simplification:

* Labels now consistently have the same shape, emojis and tooltips

everywhere. This includes the label list and label assignment menus.

* In label list, show description below label same as label menus.

* Don't use exactly black/white text colors to look a bit nicer.

* Simplify text color computation. There is no point computing luminance

in linear color space, as this is a perceptual problem and sRGB is

closer to perceptually linear.

* Increase height of label assignment menus to show more labels. Showing

only 3-4 labels at a time leads to a lot of scrolling.

* Render all labels with a new RenderLabel template helper function.

Label creation and editing in multiline modal menu:

* Change label creation to open a modal menu like label editing.

* Change menu layout to place name, description and colors on separate

lines.

* Don't color cancel button red in label editing modal menu.

* Align text to the left in the modal menu for better readability and
consistency with the settings layout elsewhere.

Custom exclusive scoped label rendering:

* Display scoped label prefix and suffix with slightly darker and

lighter background color respectively, and a slanted edge between them

similar to the `/` symbol.

* In menus exclusive labels are grouped with a divider line.

---------

Co-authored-by: Yarden Shoham <hrsi88@gmail.com>

Co-authored-by: Lauris BH <lauris@nix.lv>

Allow back-dating user creation via the `adminCreateUser` API operation.

`CreateUserOption` now has an optional field `created_at`, which can

contain a datetime-formatted string. If this field is present, the

user's `created_unix` database field will be updated to its value.

This is important for Blender's migration of users from Phabricator to

Gitea. There are many users, and the creation timestamp of their account

can give us some indication as to how long someone's been part of the

community.

The back-dating is done in a separate query that just updates the user's

`created_unix` field. This was the easiest and cleanest way I could

find, as in the initial `INSERT` query the field always is set to "now".

To avoid loading the same data more than once during an HTTP request, we can
use a context cache. For example, a page may load the user with the same ID
from the database in several places, but the code that does so is buried in
two separate, deeply nested call paths. How should the user be shared? With
this PR, if both entry functions accept `context.Context` as their first
parameter, we only need to refactor `GetUserByID` to reuse the user from the
context cache. The user is then loaded only once per HTTP request.

Of course, sometimes we want to reload an object from the database; that is
why `RemoveContextData` is also exposed.

The core of the context cache is shown here. It defines a new context:

```go
type cacheContext struct {
	ctx  context.Context
	data map[any]map[any]any
	lock sync.RWMutex
}

var cacheContextKey = struct{}{}

func WithCacheContext(ctx context.Context) context.Context {
	return context.WithValue(ctx, cacheContextKey, &cacheContext{
		ctx:  ctx,
		data: make(map[any]map[any]any),
	})
}
```

Then you can use the four functions below to read, write, and delete data
within the same context.

```go
func GetContextData(ctx context.Context, tp, key any) any
func SetContextData(ctx context.Context, tp, key, value any)
func RemoveContextData(ctx context.Context, tp, key any)
func GetWithContextCache[T any](ctx context.Context, cacheGroupKey string, cacheTargetID any, f func() (T, error)) (T, error)
```

Then let's take a look at how `system.GetString` implements it.

```go
func GetSetting(ctx context.Context, key string) (string, error) {
	return cache.GetWithContextCache(ctx, contextCacheKey, key, func() (string, error) {
		return cache.GetString(genSettingCacheKey(key), func() (string, error) {
			res, err := GetSettingNoCache(ctx, key)
			if err != nil {
				return "", err
			}
			return res.SettingValue, nil
		})
	})
}
```

First, it checks whether the context data contains the setting object for
the key. If not, it queries the global cache, which may be in memory or in
Redis. If that misses too, it loads the object from the database. Finally,
if the object came from the global cache or the database, it is stored in
the context cache.

An object stored in the context cache is only destroyed once the context
is gone.

Add setting to allow edits by maintainers by default, to avoid having to

often ask contributors to enable this.

This also reorganizes the pull request settings UI to improve clarity.

It was unclear which checkbox options were there to control available

merge styles and which merge styles they correspond to.

Now there is a "Merge Styles" label followed by the merge style options

with the same name as in other menus. The remaining checkboxes were

moved to the bottom, ordered roughly by typical order of operations.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

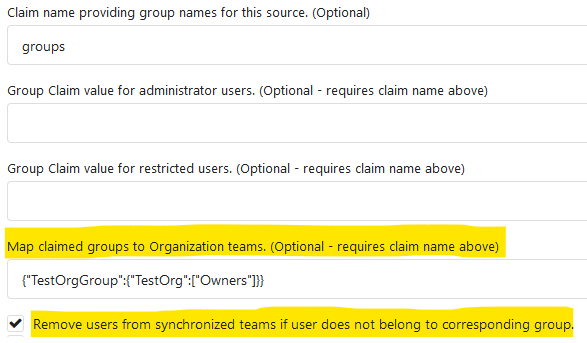

Fixes #19555

Test-Instructions:

https://github.com/go-gitea/gitea/pull/21441#issuecomment-1419438000

This PR implements the mapping of user groups provided by OIDC providers

to orgs teams in Gitea. The main part is a refactoring of the existing

LDAP code to make it usable from different providers.

Refactorings:

- Moved the router auth code from module to service because of import

cycles

- Changed some model methods to take a `Context` parameter

- Moved the mapping code from LDAP to a common location

I've tested it with Keycloak but other providers should work too. The

JSON mapping format is the same as for LDAP.

---------

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The `commit_id` property name is the same as equivalent functionality in

GitHub. If the action was not caused by a commit, an empty string is

used.

This can for example be used to automatically add a Resolved label to an

issue fixed by a commit, or clear it when the issue is reopened.

This PR adds the support for scopes of access tokens, mimicking the

design of GitHub OAuth scopes.

The changes of the core logic are in `models/auth` that `AccessToken`

struct will have a `Scope` field. The normalized (no duplication of

scope), comma-separated scope string will be stored in `access_token`

table in the database.

In `services/auth`, the scope will be stored in context, which will be

used by `reqToken` middleware in API calls. Only OAuth2 tokens will have

granular token scopes, while others like BasicAuth will default to scope

`all`.

A large amount of work happens in `routers/api/v1/api.go` and the

corresponding `tests/integration` tests, that is adding necessary scopes

to each of the API calls as they fit.

- [x] Add `Scope` field to `AccessToken`

- [x] Add access control to all API endpoints

- [x] Update frontend & backend for when creating tokens

- [x] Add a database migration for `scope` column (enable 'all' access

to past tokens)

I'm aiming to complete it before Gitea 1.19 release.

Fixes #4300

This PR introduces glob matching for protected branch names. The separator
is `/`; `*` matches any run of non-separator characters and `**` matches
across separators.

It also supports entering an existing or non-existing branch name as the
matching condition, and an exact branch name takes priority over a glob rule.
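
To illustrate the difference between `*` and `**`, here is a minimal standard-library sketch that translates such a pattern into a regular expression. It is not the matcher used by the PR; the function `globToRegexp` is a hypothetical helper, and only the two wildcards described above are handled.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// globToRegexp translates a branch-name glob into an anchored regexp.
// "**" matches any characters including "/", while "*" matches any run of
// characters that are not "/". Hypothetical helper for illustration only.
func globToRegexp(pattern string) *regexp.Regexp {
	var b strings.Builder
	b.WriteString("^")
	for i := 0; i < len(pattern); i++ {
		switch {
		case strings.HasPrefix(pattern[i:], "**"):
			b.WriteString(".*")
			i++ // skip the second '*'
		case pattern[i] == '*':
			b.WriteString("[^/]*")
		default:
			// Copy the byte through, escaping regexp metacharacters.
			b.WriteString(regexp.QuoteMeta(pattern[i : i+1]))
		}
	}
	b.WriteString("$")
	return regexp.MustCompile(b.String())
}

func main() {
	single := globToRegexp("release/*")
	double := globToRegexp("release/**")
	fmt.Println(single.MatchString("release/v1"))        // true
	fmt.Println(single.MatchString("release/v1/hotfix")) // false
	fmt.Println(double.MatchString("release/v1/hotfix")) // true
}
```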

Should fix #2529 and #15705

screenshots

<img width="1160" alt="image"

src="https://user-images.githubusercontent.com/81045/205651179-ebb5492a-4ade-4bb4-a13c-965e8c927063.png">

Co-authored-by: zeripath <art27@cantab.net>

The API endpoints for "git" can panic if they are called on an empty

repo. We can simply allow empty repos for these endpoints without worry

as they should just work.

Fix #22452

Signed-off-by: Andrew Thornton <art27@cantab.net>

- Move the files `compare.go` and `slice.go` to `slice.go`.
- Fix `ExistsInSlice`, which is buggy:
  - It uses `sort.Search`, so it assumes that the input slice is sorted.
  - It passes `func(i int) bool { return slice[i] == target }` to
    `sort.Search`, which is incorrect; see the documentation of `sort.Search`.
- Combine `IsInt64InSlice(int64, []int64)` and `ExistsInSlice(string,
  []string)` into `SliceContains[T]([]T, T)`.
- Combine `IsSliceInt64Eq([]int64, []int64)` and `IsEqualSlice([]string,
  []string)` into `SliceSortedEqual[T]([]T, []T)`.
- Add `SliceEqual[T]([]T, []T)` as a distinction from
  `SliceSortedEqual[T]([]T, []T)`.
- Redesign `RemoveIDFromList([]int64, int64) ([]int64, bool)` as
  `SliceRemoveAll[T]([]T, T) []T`.
- Add `SliceContainsFunc[T]([]T, func(T) bool)` and
  `SliceRemoveAllFunc[T]([]T, func(T) bool)` for general use.
- Add comments to explain why not `golang.org/x/exp/slices`.
- Add unit tests.
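
The `sort.Search` problem is easy to reproduce. `sort.Search` performs a binary search and expects a predicate that is false for a prefix of the slice and true for the rest, so an equality predicate does not qualify. The sketch below shows a generic linear `SliceContains` next to a correct binary search; the bodies are written for this illustration and `sortedContains` is a hypothetical name.

```go
package main

import (
	"fmt"
	"sort"
)

// SliceContains reports whether target occurs in s. It scans linearly, so
// the input does not need to be sorted.
func SliceContains[T comparable](s []T, target T) bool {
	for _, v := range s {
		if v == target {
			return true
		}
	}
	return false
}

// sortedContains shows the correct sort.Search pattern for sorted input:
// the predicate uses >= so that it is monotonic, and the returned index
// still has to be checked for equality.
func sortedContains(sorted []string, target string) bool {
	i := sort.Search(len(sorted), func(i int) bool { return sorted[i] >= target })
	return i < len(sorted) && sorted[i] == target
}

func main() {
	fmt.Println(SliceContains([]string{"c", "a", "b"}, "a"))  // true
	fmt.Println(sortedContains([]string{"a", "b", "c"}, "b")) // true
	fmt.Println(sortedContains([]string{"a", "b", "c"}, "d")) // false
}
```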

After #22362, we can feel free to use transactions without

`db.DefaultContext`.

Many models still use `db.DefaultContext`; I think we should refactor them
carefully, one by one.

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Previously, there was an `import services/webhooks` inside

`modules/notification/webhook`.

This import was removed (after fighting against many import cycles).

Additionally, `modules/notification/webhook` was moved to

`modules/webhook`,

and a few structs/constants were extracted from `models/webhooks` to

`modules/webhook`.

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

The push mirror `sync_on_commit` option was added to the web interface in
v1.18.0, but it was not added to the API. This PR updates the API endpoint.

Fixes #22267

Also, I think this should be backported to 1.18

This PR changed the Auth interface signature from
`Verify(http *http.Request, w http.ResponseWriter, store DataStore, sess SessionStore) *user_model.User`
to
`Verify(http *http.Request, w http.ResponseWriter, store DataStore, sess SessionStore) (*user_model.User, error)`.

The new `error` return value means that the match condition was satisfied
but verification failed, so the auth process should stop.

Before this PR, when `Verify` returned a `nil` user, we could not tell why:
was the match condition not satisfied, or did verification fail? These two
outcomes need different handling. If the match condition is not satisfied,
we should try the next auth method, and if there are no more auth methods,
the user is anonymous. If the condition matched but verification failed, the
auth process should stop and return immediately.

This will fix #20563

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: Jason Song <i@wolfogre.com>

If a user has reached the maximum limit of repositories:

- Before:
  - disallow create
  - allow fork without limit
- This patch:
  - disallow create
  - disallow fork
- Add option `ALLOW_FORK_WITHOUT_MAXIMUM_LIMIT` (default **true**): when
  enabled, users can fork repositories without being subject to the maximum
  limit.

fixed https://github.com/go-gitea/gitea/issues/21847

Signed-off-by: Xinyu Zhou <i@sourcehut.net>

Since we changed the /api/v1/ routes to disallow session authentication

we also removed their reliance on CSRF. However, we left the

ReverseProxy authentication here - but this means that POSTs to the API

are no longer protected by CSRF.

Now, ReverseProxy authentication is a kind of session authentication,

and is therefore inconsistent with the removal of session from the API.

This PR proposes that we simply remove the ReverseProxy authentication

from the API and therefore users of the API must explicitly use tokens

or basic authentication.

Replace #22077

Close #22221

Close #22077

Signed-off-by: Andrew Thornton <art27@cantab.net>

Fixes #22178

After this change, uploaded versions that differ only in their semver
metadata are treated as the same version and trigger a duplicate version
error.
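
In SemVer, build metadata is the part after `+` and does not affect version precedence. A minimal sketch of comparing versions with the metadata removed could look like this; the helper name `stripBuildMetadata` is hypothetical and this is not the code from the PR.

```go
package main

import (
	"fmt"
	"strings"
)

// stripBuildMetadata removes the SemVer build metadata, i.e. everything
// from the first "+" onward. Hypothetical helper for illustration.
func stripBuildMetadata(version string) string {
	core, _, _ := strings.Cut(version, "+")
	return core
}

func main() {
	a := stripBuildMetadata("1.0.0+build.1")
	b := stripBuildMetadata("1.0.0+build.2")
	fmt.Println(a == b) // true: both count as version 1.0.0
}
```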

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

depends on #22094

Fixes https://codeberg.org/forgejo/forgejo/issues/77

The old logic did not consider `is_internal`.

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

Close #14601

Fix #3690

Revival of #14601.

Updated to the current code, cleaned up, and added more read/write checks.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Signed-off-by: Andre Bruch <ab@andrebruch.com>

Co-authored-by: zeripath <art27@cantab.net>

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: Norwin <git@nroo.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

`hex.EncodeToString` has better performance than `fmt.Sprintf("%x",

[]byte)`, we should use it as much as possible.

I'm not an extreme fan of performance, so I think there are some

exceptions:

- `fmt.Sprintf("%x", func(...)[N]byte())`
  - We can't slice the function return value directly, and it's not worth
    adding lines.

```diff
func A()[20]byte { ... }
- a := fmt.Sprintf("%x", A())
- a := hex.EncodeToString(A()[:]) // invalid
+ tmp := A()
+ a := hex.EncodeToString(tmp[:])
```

- `fmt.Sprintf("%X", []byte)`
  - `strings.ToUpper(hex.EncodeToString(bytes))` has even worse
    performance.

Change all license headers to comply with REUSE specification.

Fix #16132

Co-authored-by: flynnnnnnnnnn <flynnnnnnnnnn@github>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

This PR addresses #19586

I added a mutex to the upload version creation which will prevent the

push errors when two requests try to create these database entries. I'm

not sure if this should be the final solution for this problem.

I added a workaround to allow a reupload of missing blobs. Normally a

reupload is skipped because the database knows the blob is already

present. The workaround checks if the blob exists on the file system.

This should not be needed anymore with the above fix so I marked this

code to be removed with Gitea v1.20.

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

This PR adds a context parameter to a bunch of methods. Some helper

`xxxCtx()` methods got replaced with the normal name now.

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Fix #19513

This PR introduces a new db method `InTransaction(context.Context)`,
along with built-in checks in `db.TxContext` and `db.WithTx`.

A new method `db.AutoTx` is also introduced; it can be used by other PRs.

`WithTx` always opens a new transaction; if a transaction already exists in the context, it returns an error.

`AutoTx` opens a new transaction only if no transaction exists in the context.
That means it always runs inside a transaction unless an error occurs.

Co-authored-by: delvh <dev.lh@web.de>

Co-authored-by: 6543 <6543@obermui.de>

In #21637 it was mentioned that the purpose of the API routes for the

packages is unclear. This PR adds some documentation.

Fix #21637

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Fix #20921.

The `ctx.Repo.GitRepo` has been used in deleting issues when the issue

is a PR.

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: Lauris BH <lauris@nix.lv>

This PR enhances the CORS middleware usage by allowing for the headers

to be configured in `app.ini`.

Fixes #21746

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: John Olheiser <john.olheiser@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Related #20471

This PR adds global quota limits for the package registry. Settings for

individual users/orgs can be added in a separate PR using the settings

table.

Co-authored-by: Lauris BH <lauris@nix.lv>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

This addresses #21707 and adds a second package test case for a

non-semver compatible version (this might be overkill though since you

could also edit the old package version to have an epoch in front and

see the error, this just seemed more flexible for the future).

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

_This is a different approach to #20267, I took the liberty of adapting

some parts, see below_

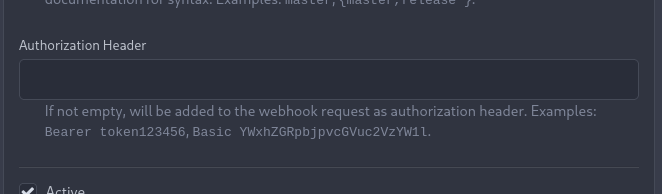

## Context

In some cases, a webhook endpoint requires some kind of authentication.

The usual way is by sending a static `Authorization` header, with a

given token. For instance:

- Matrix expects a `Bearer <token>` (already implemented, by storing the

header cleartext in the metadata - which is buggy on retry #19872)

- TeamCity #18667

- Gitea instances #20267

- SourceHut https://man.sr.ht/graphql.md#authentication-strategies (this

is my actual personal need :)

## Proposed solution

Add a dedicated encrypted column to the webhook table (instead of storing

it as meta as proposed in #20267), so that it gets available for all

present and future hook types (especially the custom ones #19307).

This would also solve the buggy matrix retry #19872.

As a first step, I would recommend focusing on the backend logic and

improve the frontend at a later stage. For now the UI is a simple

`Authorization` field (which could be later customized with `Bearer` and

`Basic` switches):

The header name is hard-coded, since I couldn't find any use case

justifying otherwise.

## Questions

- What do you think of this approach? @justusbunsi @Gusted @silverwind

- ~~How are the migrations generated? Do I have to manually create a new

file, or is there a command for that?~~

- ~~I started adding it to the API: should I complete it or should I

drop it? (I don't know how much the API is actually used)~~

## Done as well:

- add a migration for the existing matrix webhooks and remove the

`Authorization` logic there

_Closes #19872_

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: Gusted <williamzijl7@hotmail.com>

Co-authored-by: delvh <dev.lh@web.de>

I found myself wondering whether a PR I scheduled for automerge was

actually merged. It was, but I didn't receive a mail notification for it

- that makes sense considering I am the doer and usually don't want to

receive such notifications. But ideally I want to receive a notification

when a PR was merged because I scheduled it for automerge.

This PR implements exactly that.

The implementation works, but I wonder if there's a way to avoid passing

the "This PR was automerged" state down so much. I tried solving this

via the database (checking if there's an automerge scheduled for this PR

when sending the notification) but that did not work reliably, probably

because sending the notification happens async and the entry might have

already been deleted. My implementation might be the most

straightforward but maybe not the most elegant.

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lauris BH <lauris@nix.lv>

Co-authored-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Fixes a 500 error/panic when the changed PR files API is called with a page
that should return an empty list because there are no more items.
In that case `end-start` is negative, which causes the panic.
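
One way to avoid this class of panic is to clamp both indices to the number of available items before slicing or allocating. The following is a minimal sketch under that assumption; `pageBounds` is a hypothetical helper, not the function changed in the PR.

```go
package main

import "fmt"

// pageBounds returns clamped [start, end) indices for a 1-based page, so
// that end-start is never negative and both indices stay within total.
// Hypothetical helper for illustration.
func pageBounds(total, page, limit int) (start, end int) {
	if page < 1 {
		page = 1
	}
	if limit < 0 {
		limit = 0
	}
	start = (page - 1) * limit
	if start > total {
		start = total
	}
	end = start + limit
	if end > total {
		end = total
	}
	return start, end
}

func main() {
	files := []string{"a.go", "b.go", "c.go", "d.go", "e.go"}

	start, end := pageBounds(len(files), 2, 3)
	fmt.Println(files[start:end]) // [d.go e.go]

	// A page far beyond the data yields an empty slice instead of a panic.
	start, end = pageBounds(len(files), 3, 10)
	fmt.Println(len(files[start:end])) // 0
}
```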

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: delvh <dev.lh@web.de>

I noticed that an admin is not allowed to upload packages for other users
because `ctx.IsSigned` was not set.

I also added checks for `user.IsActive` and `user.ProhibitLogin` because
neither was checked. Tests enforce this now.

Co-authored-by: Lauris BH <lauris@nix.lv>

The OAuth spec [defines two types of

client](https://datatracker.ietf.org/doc/html/rfc6749#section-2.1),

confidential and public. Previously Gitea assumed all clients to be

confidential.

> OAuth defines two client types, based on their ability to authenticate
> securely with the authorization server (i.e., ability to
> maintain the confidentiality of their client credentials):
>
> confidential
> Clients capable of maintaining the confidentiality of their
> credentials (e.g., client implemented on a secure server with
> restricted access to the client credentials), or capable of secure
> client authentication using other means.
>
> **public
> Clients incapable of maintaining the confidentiality of their
> credentials (e.g., clients executing on the device used by the resource
> owner, such as an installed native application or a web browser-based
> application), and incapable of secure client authentication via any
> other means.**
>
> The client type designation is based on the authorization server's
> definition of secure authentication and its acceptable exposure levels
> of client credentials. The authorization server SHOULD NOT make
> assumptions about the client type.

https://datatracker.ietf.org/doc/html/rfc8252#section-8.4

> Authorization servers MUST record the client type in the client
> registration details in order to identify and process requests
> accordingly.

Require PKCE for public clients:

https://datatracker.ietf.org/doc/html/rfc8252#section-8.1

> Authorization servers SHOULD reject authorization requests from native
> apps that don't use PKCE by returning an error message

Fixes #21299

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Previously, mentioning a user linked to their profile regardless of
whether the user existed. This change checks whether the user exists and
only adds a link to the profile if it does.

* Fixes #3444

Signed-off-by: Yarden Shoham <hrsi88@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

At the moment a repository reference is needed for webhooks. With the

upcoming package PR we need to send webhooks without a repository

reference. For example a package is uploaded to an organization. In

theory this enables the usage of webhooks for future user actions.

This PR removes the repository id from `HookTask` and changes how the

hooks are processed (see `services/webhook/deliver.go`). In a follow up

PR I want to remove the usage of the `UniqueQueue` and replace it with a

normal queue because there is no reason to be unique.

Co-authored-by: 6543 <6543@obermui.de>

Fixes #21379

The commits are capped by `setting.UI.FeedMaxCommitNum` so

`len(commits)` is not the correct number. So this PR adds a new

`TotalCommits` field.

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

NuGet symbol file lookup returned 404 in Visual Studio 2019 because the API
router was case-sensitive. The API router should accept GUIDs case-insensitively.

Co-authored-by: techknowlogick <techknowlogick@gitea.io>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Fixes #21250

Related #20414

Conan packages don't have to follow SemVer.

The migration fixes the setting for all existing Conan and Generic

(#20414) packages.

Calls to `ToCommit` are very slow because they fetch diffs and analyze files.
This patch lets callers pass `stat` as false to speed up fetching a commit
when the diff is not needed.

/v1/repo/commits now has `stat` default to true. Set it to false to fetch
thousands of commits per second instead of 2-5 per second.

This adds an API endpoint `/files` to PRs that returns the list of changed files.

built upon #18228, reviews there are included

closes https://github.com/go-gitea/gitea/issues/654

Co-authored-by: Anton Bracke <anton@ju60.de>

Co-authored-by: 6543 <6543@obermui.de>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

This PR would presumably

Fix #20522

Fix #18773

Fix #19069

Fix #21077

Fix #13622

-----

1. Check whether unit type is currently enabled

2. Check if it _will_ be enabled via opt

3. Allow modification as necessary

Signed-off-by: jolheiser <john.olheiser@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Co-authored-by: 6543 <6543@obermui.de>

Addition to #20734, Fixes #20717

The `/index.json` endpoint needs to be accessible even if the registry

is private. The NuGet client uses this endpoint without
authentication.

The old fix only works if the NuGet cli is used with `--source <name>`

but not with `--source <url>/index.json`.

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

Close #20098. Implemented in the NPM registry API to match what's described by https://github.com/npm/registry/blob/master/docs/REGISTRY-API.md#get-v1search

Currently, only the bare minimum needed to work with the [Unity Package Manager](https://docs.unity3d.com/Manual/upm-ui.html) is implemented.

Co-authored-by: Jack Vine <jackv@jack-lemur-suse.cat-prometheus.ts.net>

Co-authored-by: KN4CK3R <admin@oldschoolhack.me>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>

Co-authored-by: wxiaoguang <wxiaoguang@gmail.com>

The PyPI name regexp is too restrictive and only permits lowercase characters. This PR adjusts the regexp to add in support for uppercase characters.

Fix #21014

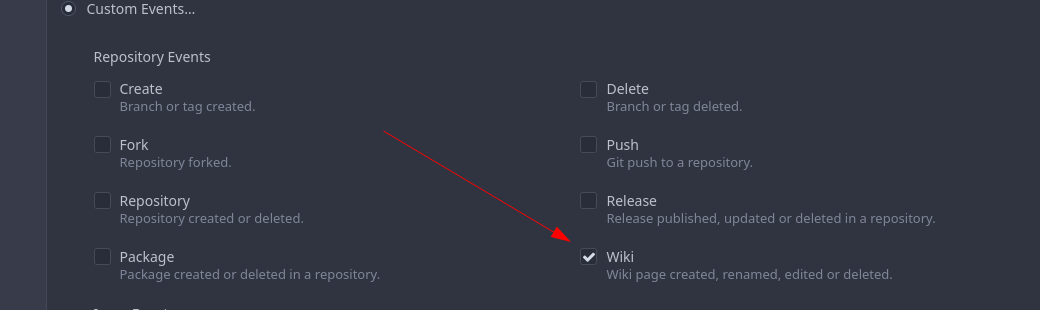

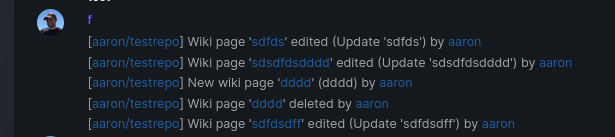

Add support for triggering webhook notifications on wiki changes.

This PR contains frontend and backend for webhook notifications on wiki actions (create a new page, rename a page, edit a page and delete a page). The frontend got a new checkbox under the Custom Event -> Repository Events section. There is only one checkbox for create/edit/rename/delete actions, because it makes no sense to separate it and others like releases or packages follow the same schema.

The actions themselves are separated, so that different notifications are executed (distinguished by the "action" field). All the webhook receivers implement the new interface method (Wiki), with corresponding tests.

While implementing this, I encountered a small bug when editing a wiki page. Creating and editing a wiki page are technically the same action and are handled by the `updateWikiPage` function. But the function needs to know whether it is a new wiki page or just a change. This distinction is made by the `action` parameter, which was not sent by the frontend (on form submit). This PR fixes this by adding the `action` parameter with the value `_new` or `_edit`, which is used by the `updateWikiPage` function.

I've done integration tests with matrix and gitea (http).

Fix #16457

Signed-off-by: Aaron Fischer <mail@aaron-fischer.net>

When migrating, add several more important sanity checks:

* SHAs must be SHAs

* Refs must be valid Refs

* URLs must be reasonable
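
As a rough illustration of what such checks can look like, the sketch below validates a SHA and a URL using only the standard library. The rules chosen here (40 lowercase hex characters for a SHA-1, only `http`/`https` URLs with a host) and the names `isValidSHA` and `isReasonableURL` are assumptions for this example, not the checks implemented in the PR.

```go
package main

import (
	"fmt"
	"net/url"
	"regexp"
)

// shaPattern assumes full-length lowercase SHA-1 object names.
var shaPattern = regexp.MustCompile(`^[0-9a-f]{40}$`)

// isValidSHA reports whether s looks like a full SHA-1 hash.
func isValidSHA(s string) bool {
	return shaPattern.MatchString(s)
}

// isReasonableURL accepts only absolute http(s) URLs with a host.
func isReasonableURL(raw string) bool {
	u, err := url.Parse(raw)
	if err != nil {
		return false
	}
	return (u.Scheme == "http" || u.Scheme == "https") && u.Host != ""
}

func main() {
	fmt.Println(isValidSHA("0123456789abcdef0123456789abcdef01234567")) // true
	fmt.Println(isValidSHA("not-a-sha"))                                // false
	fmt.Println(isReasonableURL("https://example.com/owner/repo"))      // true
	fmt.Println(isReasonableURL("javascript:alert(1)"))                 // false
}
```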

Signed-off-by: Andrew Thornton <art27@cantab.net>


Co-authored-by: techknowlogick <matti@mdranta.net>

The webhook payload should use the right ref when it's specified in the testing request.

The compare URL should not be empty, a URL like `compare/A...A` seems useless in most cases but is helpful when testing.

* fix hard-coded timeout and error panic in API archive download endpoint

This commit updates the `GET /api/v1/repos/{owner}/{repo}/archive/{archive}`

endpoint which prior to this PR had a couple of issues.

1. The endpoint had a hard-coded 20s timeout for the archiver to complete after

which a 500 (Internal Server Error) was returned to client. For a scripted

API client there was no clear way of telling that the operation timed out and

that it should retry.

2. Whenever the timeout _did occur_, the code used to panic. This was caused by

the API endpoint "delegating" to the same call path as the web, which uses a

slightly different way of reporting errors (HTML rather than JSON for

example).

More specifically, `api/v1/repo/file.go#GetArchive` just called through to

`web/repo/repo.go#Download`, which expects the `Context` to have a `Render`

field set, but which is `nil` for API calls. Hence, a `nil` pointer error.

The code addresses (1) by dropping the hard-coded timeout. Instead, any

timeout/cancelation on the incoming `Context` is used.

The code addresses (2) by updating the API endpoint to use a separate call path

for the API-triggered archive download. This avoids producing HTML-errors on

errors (it now produces JSON errors).

Signed-off-by: Peter Gardfjäll <peter.gardfjall.work@gmail.com>

The recovery, API, Web and package frameworks all create their own HTML

Renderers. This increases the memory requirements of Gitea

unnecessarily with duplicate templates being kept in memory.

Further the reloading framework in dev mode for these involves locking

and recompiling all of the templates on each load. This will potentially

hide concurrency issues and it is inefficient.

This PR stores the templates renderer in the context and stores this

context in the NormalRoutes, it then creates a fsnotify.Watcher

framework to watch files.

The watching framework is then extended to the mailer templates which

were previously not being reloaded in dev.

Then the locales are simplified to a similar structure.

Fix #20210

Fix #20211

Fix #20217

Signed-off-by: Andrew Thornton <art27@cantab.net>

Add code to test if GetAttachmentByID returns an ErrAttachmentNotExist error

and return NotFound instead of InternalServerError

Fix#20884

Signed-off-by: Andrew Thornton <art27@cantab.net>

Co-authored-by: Lunny Xiao <xiaolunwen@gmail.com>