Merge branch 'main' into lora-internal

commit 1104885f00

@ -140,9 +140,9 @@ RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" make build-eetq

 # Build marlin kernels
 FROM kernel-builder as marlin-kernels-builder
 WORKDIR /usr/src
-COPY server/Makefile-marlin Makefile
+COPY server/marlin/ .
 # Build specific version of transformers
-RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" make build-marlin
+RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" python setup.py build
 
 # Build Lorax Punica kernels
 FROM kernel-builder as lorax-punica-builder

@ -17,6 +17,8 @@

     title: Supported Models and Hardware
   - local: messages_api
     title: Messages API
+  - local: architecture
+    title: Internal Architecture
   title: Getting started
 - sections:
   - local: basic_tutorials/consuming_tgi

@ -0,0 +1,227 @@

|

|||

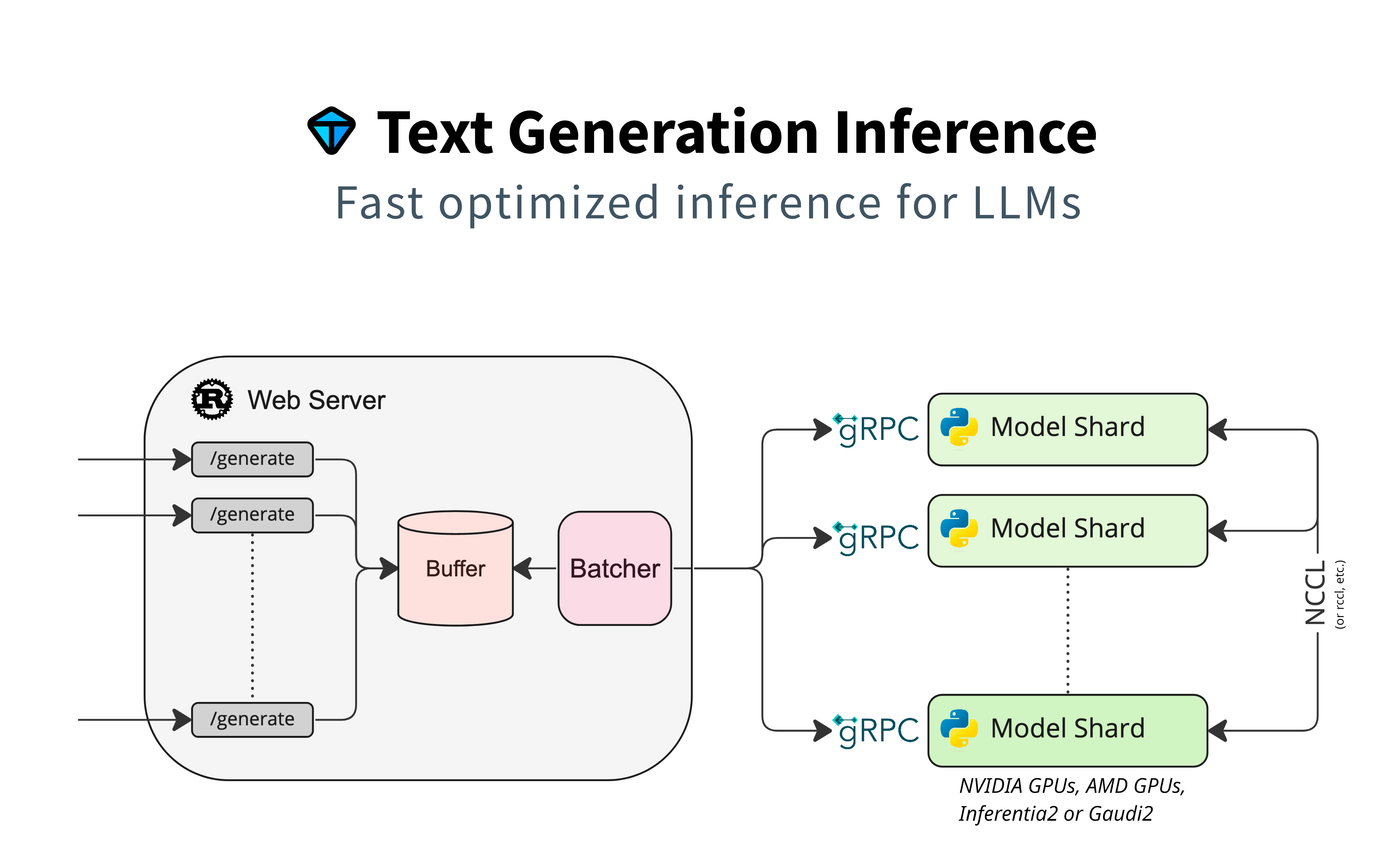

# Text Generation Inference Architecture

|

||||

|

||||

This document aims at describing the architecture of Text Generation Inference (TGI), by describing the call flow between the separate components.

|

||||

|

||||

A high-level architecture diagram can be seen here:

|

||||

|

||||

|

||||

|

||||

The diagram shows three separate components:

- **The router**, also named `webserver`, receives the client requests, buffers them, groups them into batches, and prepares gRPC calls to a model server.
- **The model server** receives the gRPC requests and runs inference on the model. If the model is sharded across multiple accelerators (e.g. multiple GPUs), the model server shards may be synchronized via NCCL or an equivalent.
- **The launcher** is a helper that can launch one or several model servers (several if the model is sharded) and then launches the router with compatible arguments.

The router and the model server can run on two different machines; they do not need to be deployed together.

## The Router

This component is a Rust web server binary that accepts HTTP requests using the custom [HTTP API](https://huggingface.github.io/text-generation-inference/), as well as OpenAI's [Messages API](https://huggingface.co/docs/text-generation-inference/messages_api).
The router receives the API calls and handles the batching logic (an introduction to batching can be found [here](https://github.com/huggingface/text-generation-inference/blob/main/router/README.md)).
It uses different strategies to reduce the latency between requests and responses, with a particular focus on decoding latency. It relies on queues, schedulers, and block allocators to produce batched requests that are then sent to the model server.
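
The queueing and batching described above can be pictured with a small sketch. It is a simplification under stated assumptions, not the router's Rust implementation: the `Request` type, the `next_batch` helper, and the single prefill-token budget are hypothetical names introduced for illustration, and real scheduling takes more limits into account.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Request:
    """Hypothetical stand-in for a queued client request."""
    request_id: int
    input_tokens: int


def next_batch(queue: deque, max_prefill_tokens: int) -> list:
    """Pop requests in FIFO order until the prefill token budget is used up."""
    batch, used = [], 0
    while queue and used + queue[0].input_tokens <= max_prefill_tokens:
        request = queue.popleft()
        used += request.input_tokens
        batch.append(request)
    return batch


# Four buffered requests with different prompt lengths (made-up numbers).
queue = deque(Request(i, n) for i, n in enumerate([1000, 2000, 1500, 500]))
first = next_batch(queue, max_prefill_tokens=4096)
second = next_batch(queue, max_prefill_tokens=4096)
print([r.request_id for r in first])   # requests grouped into the first batch
print([r.request_id for r in second])  # requests left for the following batch
```

The point of the sketch is only that requests wait in a queue and are grouped so that one call to the model server stays within a token budget.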

### Router's command line

Parameters are passed to the router on the command line; it does not rely on a configuration file:

```
Text Generation Webserver

Usage: text-generation-router [OPTIONS]

Options:
      --max-concurrent-requests <MAX_CONCURRENT_REQUESTS>
          [env: MAX_CONCURRENT_REQUESTS=] [default: 128]
      --max-best-of <MAX_BEST_OF>
          [env: MAX_BEST_OF=] [default: 2]
      --max-stop-sequences <MAX_STOP_SEQUENCES>
          [env: MAX_STOP_SEQUENCES=] [default: 4]
      --max-top-n-tokens <MAX_TOP_N_TOKENS>
          [env: MAX_TOP_N_TOKENS=] [default: 5]
      --max-input-tokens <MAX_INPUT_TOKENS>
          [env: MAX_INPUT_TOKENS=] [default: 1024]
      --max-total-tokens <MAX_TOTAL_TOKENS>
          [env: MAX_TOTAL_TOKENS=] [default: 2048]
      --waiting-served-ratio <WAITING_SERVED_RATIO>
          [env: WAITING_SERVED_RATIO=] [default: 1.2]
      --max-batch-prefill-tokens <MAX_BATCH_PREFILL_TOKENS>
          [env: MAX_BATCH_PREFILL_TOKENS=] [default: 4096]
      --max-batch-total-tokens <MAX_BATCH_TOTAL_TOKENS>
          [env: MAX_BATCH_TOTAL_TOKENS=]
      --max-waiting-tokens <MAX_WAITING_TOKENS>
          [env: MAX_WAITING_TOKENS=] [default: 20]
      --max-batch-size <MAX_BATCH_SIZE>
          [env: MAX_BATCH_SIZE=]
      --hostname <HOSTNAME>
          [env: HOSTNAME=] [default: 0.0.0.0]
  -p, --port <PORT>
          [env: PORT=] [default: 3000]
      --master-shard-uds-path <MASTER_SHARD_UDS_PATH>
          [env: MASTER_SHARD_UDS_PATH=] [default: /tmp/text-generation-server-0]
      --tokenizer-name <TOKENIZER_NAME>
          [env: TOKENIZER_NAME=] [default: bigscience/bloom]
      --tokenizer-config-path <TOKENIZER_CONFIG_PATH>
          [env: TOKENIZER_CONFIG_PATH=]
      --revision <REVISION>
          [env: REVISION=]
      --validation-workers <VALIDATION_WORKERS>
          [env: VALIDATION_WORKERS=] [default: 2]
      --json-output
          [env: JSON_OUTPUT=]
      --otlp-endpoint <OTLP_ENDPOINT>
          [env: OTLP_ENDPOINT=]
      --cors-allow-origin <CORS_ALLOW_ORIGIN>
          [env: CORS_ALLOW_ORIGIN=]
      --ngrok
          [env: NGROK=]
      --ngrok-authtoken <NGROK_AUTHTOKEN>
          [env: NGROK_AUTHTOKEN=]
      --ngrok-edge <NGROK_EDGE>
          [env: NGROK_EDGE=]
      --messages-api-enabled
          [env: MESSAGES_API_ENABLED=]
      --disable-grammar-support
          [env: DISABLE_GRAMMAR_SUPPORT=]
      --max-client-batch-size <MAX_CLIENT_BATCH_SIZE>
          [env: MAX_CLIENT_BATCH_SIZE=] [default: 4]
  -h, --help
          Print help
  -V, --version
          Print version

```
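
How the token limits above might interact for a single request can be sketched as follows. This is an illustration under assumptions, not the router's validation code: `check_request` and `fits` are hypothetical helpers, and the rule that prompt tokens plus requested new tokens must fit within `--max-total-tokens` is inferred from the option names and their defaults.

```python
def check_request(
    input_tokens: int,
    max_new_tokens: int,
    max_input_tokens: int = 1024,  # default of --max-input-tokens in the help text
    max_total_tokens: int = 2048,  # default of --max-total-tokens in the help text
) -> None:
    """Raise ValueError when a request does not fit the configured limits.

    Hypothetical helper: the real router performs its own validation.
    """
    if input_tokens > max_input_tokens:
        raise ValueError(
            f"prompt has {input_tokens} tokens, limit is {max_input_tokens}"
        )
    if input_tokens + max_new_tokens > max_total_tokens:
        raise ValueError(
            f"{input_tokens} prompt + {max_new_tokens} new tokens "
            f"exceed {max_total_tokens}"
        )


def fits(input_tokens: int, max_new_tokens: int) -> bool:
    """Convenience wrapper returning True/False instead of raising."""
    try:
        check_request(input_tokens, max_new_tokens)
    except ValueError:
        return False
    return True


print(fits(800, 1000))  # prompt and total both within the defaults
print(fits(800, 1500))  # total budget exceeded
print(fits(1200, 100))  # prompt alone is too long
```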

## The Model Server

The model server is a Python server that listens for gRPC requests, loads a given model, performs sharding to provide [tensor parallelism](https://huggingface.co/docs/text-generation-inference/conceptual/tensor_parallelism), and stays alive while waiting for new requests.
The model server supports models instantiated using PyTorch and optimized for inference mainly on CUDA/ROCm.

### Model Server Variants

Several variants of the model server exist that are actively supported by Hugging Face:

- By default, the model server will attempt building [a server optimized for Nvidia GPUs with CUDA](https://huggingface.co/docs/text-generation-inference/installation_nvidia). The code for this version is hosted in the [main TGI repository](https://github.com/huggingface/text-generation-inference).
- A [version optimized for AMD with ROCm](https://huggingface.co/docs/text-generation-inference/installation_amd) is hosted in the main TGI repository. Some model features differ.
- The [version for Intel Gaudi](https://huggingface.co/docs/text-generation-inference/installation_gaudi) is maintained in a [forked repository](https://github.com/huggingface/tgi-gaudi), which is often resynchronized with the main TGI repository.
- A [version for Neuron (AWS Inferentia2)](https://huggingface.co/docs/text-generation-inference/installation_inferentia) is maintained as part of [Optimum Neuron](https://github.com/huggingface/optimum-neuron/tree/main/text-generation-inference).
- A version for Google TPUs is maintained as part of [Optimum TPU](https://github.com/huggingface/optimum-tpu/tree/main/text-generation-inference).

Not all variants provide the same features, because the underlying hardware and middleware do not offer the same optimizations.

### Command Line Interface

The official command line interface (CLI) for the server supports three subcommands, `download-weights`, `quantize` and `serve`:

- `download-weights` downloads weights from the hub and, in some variants, converts them to a format adapted to the given implementation;
- `quantize` quantizes a model using the `gptq` package. This feature is not available or supported on every variant;
- `serve` starts the server, which loads a model (or a model shard), receives gRPC calls from the router, performs inference, and returns a formatted response to each request.

The command line parameters of `serve` in the TGI repository are:

```
Usage: cli.py serve [OPTIONS] MODEL_ID

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────────────╮
│ * model_id TEXT [default: None] [required] │
╰──────────────────────────────────────────────────────────────────────────────────────────────────────────╯
╭─ Options ────────────────────────────────────────────────────────────────────────────────────────────────╮
│ --revision TEXT [default: None] │
│ --sharded --no-sharded [default: no-sharded] │
│ --quantize [bitsandbytes|bitsandbytes [default: None] │
│ -nf4|bitsandbytes-fp4|gptq │
│ |awq|eetq|exl2|fp8] │
│ --speculate INTEGER [default: None] │
│ --dtype [float16|bfloat16] [default: None] │
│ --trust-remote-code --no-trust-remote-code [default: │
│ no-trust-remote-code] │
│ --uds-path PATH [default: │
│ /tmp/text-generation-serve… │
│ --logger-level TEXT [default: INFO] │
│ --json-output --no-json-output [default: no-json-output] │
│ --otlp-endpoint TEXT [default: None] │
│ --help Show this message and exit. │
╰──────────────────────────────────────────────────────────────────────────────────────────────────────────╯

```

Note that some variants might support different parameters, and they may accept additional options passed through environment variables.
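
As a hedged illustration of the `serve` usage text, the sketch below assembles an argument list without executing anything. The helper `serve_argv` is hypothetical, the executable name `text-generation-server` is an assumption (the usage text only shows `cli.py`), and the allowed values are copied from the `--quantize` and `--dtype` choices listed in the usage text.

```python
import shlex

# Choices copied from the usage text of `serve`.
QUANTIZE_CHOICES = {
    "bitsandbytes", "bitsandbytes-nf4", "bitsandbytes-fp4",
    "gptq", "awq", "eetq", "exl2", "fp8",
}
DTYPE_CHOICES = {"float16", "bfloat16"}


def serve_argv(model_id, *, sharded=False, quantize=None, dtype=None):
    """Build an argument list for the `serve` subcommand (nothing is run)."""
    if quantize is not None and quantize not in QUANTIZE_CHOICES:
        raise ValueError(f"unsupported --quantize value: {quantize}")
    if dtype is not None and dtype not in DTYPE_CHOICES:
        raise ValueError(f"unsupported --dtype value: {dtype}")
    # Assumption: the CLI entry point is installed as `text-generation-server`.
    argv = ["text-generation-server", "serve", model_id]
    if sharded:
        argv.append("--sharded")
    if quantize is not None:
        argv += ["--quantize", quantize]
    if dtype is not None:
        argv += ["--dtype", dtype]
    return argv


# The model id is only a placeholder taken from the router's default tokenizer.
argv = serve_argv("bigscience/bloom", sharded=True, dtype="float16")
print(shlex.join(argv))
```

Validating the choices up front mirrors what the CLI itself would reject, so a wrapper script fails early with a readable message.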

## Call Flow

Once both components are initialized, the weights are downloaded, and the model server is up and running, the router and the model server exchange data and information through gRPC calls. There are currently two supported schemas, [v2](https://github.com/huggingface/text-generation-inference/blob/main/proto/generate.proto) and [v3](https://github.com/huggingface/text-generation-inference/blob/main/proto/v3/generate.proto). These two versions are almost identical, except for:

- input chunks support, for text and image data,
- paged attention support

Here's a diagram that displays the exchanges that follow the router and model server startup.

```mermaid
sequenceDiagram

    Router->>Model Server: service discovery
    Model Server-->>Router: urls for other shards

    Router->>Model Server: get model info
    Model Server-->>Router: shard info

    Router->>Model Server: health check
    Model Server-->>Router: health OK

    Router->>Model Server: warmup(max_input_tokens, max_batch_prefill_tokens, max_total_tokens, max_batch_size)
    Model Server-->>Router: warmup result

```
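
The startup exchanges in the diagram can be mimicked with an in-memory stand-in. This is a sketch under assumptions rather than the real gRPC client: `FakeModelServer`, `start_router`, the method names, and every return value are hypothetical placeholders; only the order of the four calls and the warmup argument names are taken from the diagram.

```python
class FakeModelServer:
    """In-memory stand-in for one model server shard (hypothetical, no gRPC)."""

    def __init__(self):
        self.calls = []  # names of the calls received, in order

    def service_discovery(self):
        self.calls.append("service_discovery")
        # Illustrative value only: one shard reachable on a Unix socket.
        return ["unix:///tmp/text-generation-server-0"]

    def info(self):
        self.calls.append("info")
        return {"shard": 0}  # placeholder shard info

    def health(self):
        self.calls.append("health")
        return True

    def warmup(self, max_input_tokens, max_batch_prefill_tokens,
               max_total_tokens, max_batch_size):
        self.calls.append("warmup")
        return {"warmed_up": True}  # placeholder warmup result


def start_router(server, *, max_input_tokens=1024, max_batch_prefill_tokens=4096,
                 max_total_tokens=2048, max_batch_size=None):
    """Run the four startup exchanges in the order shown in the diagram."""
    shard_urls = server.service_discovery()
    shard_info = server.info()
    if not server.health():
        raise RuntimeError("model server failed its health check")
    warmup_result = server.warmup(max_input_tokens, max_batch_prefill_tokens,
                                  max_total_tokens, max_batch_size)
    return shard_urls, shard_info, warmup_result


server = FakeModelServer()
shard_urls, shard_info, warmup_result = start_router(server)
print(server.calls)
```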

After these are done, the router is ready to receive generate calls from multiple clients. Here's an example.

```mermaid
sequenceDiagram
    participant Client 1
    participant Client 2
    participant Client 3
    participant Router
    participant Model Server

    Client 1->>Router: generate_stream
    Router->>Model Server: prefill(batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 1

    Router->>Model Server: decode(cached_batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 2

    Router->>Model Server: decode(cached_batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 3

    Client 2->>Router: generate_stream
    Router->>Model Server: prefill(batch2)
    Note right of Model Server: This stops previous batch, that is restarted
    Model Server-->>Router: generations, cached_batch2, timings
    Router-->>Client 2: token 1'

    Router->>Model Server: decode(cached_batch1, cached_batch2)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 4
    Router-->>Client 2: token 2'

    Note left of Client 1: Client 1 leaves
    Router->>Model Server: filter_batch(cached_batch1, request_ids_to_keep=batch2)
    Model Server-->>Router: filtered batch

    Router->>Model Server: decode(cached_batch2)
    Model Server-->>Router: generations, cached_batch2, timings
    Router-->>Client 2: token 3'

    Client 3->>Router: generate_stream
    Note right of Model Server: This stops previous batch, that is restarted
    Router->>Model Server: prefill(batch3)
    Note left of Client 1: Client 3 leaves without receiving any batch
    Router->>Model Server: clear_cache(batch3)
    Note right of Model Server: This stops previous batch, that is restarted

    Router->>Model Server: decode(cached_batch3)
    Note right of Model Server: Last token (stopping criteria)
    Model Server-->>Router: generations, cached_batch3, timings
    Router-->>Client 2: token 4'


```
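
The first part of the exchange above (two clients, a merged decode, then a filtered batch) can be replayed with a toy model. Everything in this sketch is hypothetical: `ToyModelServer` keeps a plain dictionary instead of a real cache, a "token" is just a per-request counter, and merging cached batches into the first batch id is an assumption made to keep the example short.

```python
import itertools


class ToyModelServer:
    """Hypothetical in-memory model server; counts tokens per request."""

    def __init__(self):
        self._batch_ids = itertools.count(1)
        self.cache = {}  # batch id -> {request id: tokens generated so far}

    def prefill(self, request_ids):
        """Create a cached batch and produce the first token of each request."""
        batch_id = next(self._batch_ids)
        self.cache[batch_id] = {rid: 1 for rid in request_ids}
        return dict(self.cache[batch_id]), batch_id

    def decode(self, batch_ids):
        """Merge the given cached batches and produce one more token each."""
        merged = {}
        for batch_id in batch_ids:
            merged.update(self.cache.pop(batch_id))
        keep = batch_ids[0]
        self.cache[keep] = {rid: n + 1 for rid, n in merged.items()}
        return dict(self.cache[keep]), keep

    def filter_batch(self, batch_id, request_ids_to_keep):
        """Drop requests whose client has left."""
        self.cache[batch_id] = {
            rid: n for rid, n in self.cache[batch_id].items()
            if rid in request_ids_to_keep
        }
        return batch_id


server = ToyModelServer()
_, batch1 = server.prefill(["client1"])           # client 1: token 1
_, batch1 = server.decode([batch1])               # client 1: token 2
_, batch1 = server.decode([batch1])               # client 1: token 3
_, batch2 = server.prefill(["client2"])           # client 2: token 1'
joint, batch = server.decode([batch1, batch2])    # both clients advance together
batch = server.filter_batch(batch, {"client2"})   # client 1 leaves
final, batch = server.decode([batch])             # only client 2 keeps decoding
print(joint, final)
```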


@ -20,7 +20,7 @@ Text Generation Inference enables serving optimized models on specific hardware

 - [Baichuan](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat)
 - [Falcon](https://huggingface.co/tiiuae/falcon-7b-instruct)
 - [StarCoder 2](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)
-- [Qwen 2](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)
+- [Qwen 2](https://huggingface.co/collections/Qwen/qwen2-6659360b33528ced941e557f)
 - [Opt](https://huggingface.co/facebook/opt-6.7b)
 - [T5](https://huggingface.co/google/flan-t5-xxl)
 - [Galactica](https://huggingface.co/facebook/galactica-120b)

@ -0,0 +1,84 @@

{
  "details": {
    "best_of_sequences": null,
    "finish_reason": "length",
    "generated_tokens": 10,
    "prefill": [
      {
        "id": 2323,
        "logprob": null,
        "text": "Test"
      },
      {
        "id": 1715,
        "logprob": -11.34375,
        "text": " request"
      }
    ],
    "seed": null,
    "tokens": [
      {
        "id": 198,
        "logprob": -2.5742188,
        "special": false,
        "text": "\n"
      },
      {
        "id": 262,
        "logprob": -1.6230469,
        "special": false,
        "text": " "
      },
      {
        "id": 3270,
        "logprob": -2.046875,
        "special": false,
        "text": " \"\"\"\n"
      },
      {
        "id": 262,
        "logprob": -0.015281677,
        "special": false,
        "text": " "
      },
      {
        "id": 422,
        "logprob": -2.1425781,
        "special": false,
        "text": " if"
      },
      {
        "id": 1715,
        "logprob": -0.9238281,
        "special": false,
        "text": " request"
      },
      {
        "id": 13204,
        "logprob": -0.076660156,
        "special": false,
        "text": ".method"
      },
      {
        "id": 624,
        "logprob": -0.021987915,
        "special": false,
        "text": " =="
      },
      {
        "id": 364,
        "logprob": -0.39208984,
        "special": false,
        "text": " '"
      },
      {
        "id": 3019,
        "logprob": -0.10821533,
        "special": false,
        "text": "POST"
      }
    ],
    "top_tokens": null
  },
  "generated_text": "\n \"\"\"\n if request.method == 'POST"
}

@ -0,0 +1,84 @@

{
  "details": {
    "best_of_sequences": null,
    "finish_reason": "length",
    "generated_tokens": 10,
    "prefill": [
      {
        "id": 2323,
        "logprob": null,
        "text": "Test"
      },
      {
        "id": 1715,
        "logprob": -11.34375,
        "text": " request"
      }
    ],
    "seed": 0,
    "tokens": [
      {
        "id": 13,
        "logprob": -2.2539062,
        "special": false,
        "text": "."
      },
      {
        "id": 578,
        "logprob": -0.15563965,
        "special": false,
        "text": " The"
      },
      {
        "id": 3622,
        "logprob": -0.8203125,
        "special": false,
        "text": " server"
      },
      {
        "id": 706,
        "logprob": 0.0,
        "special": false,
        "text": " has"
      },
      {
        "id": 539,
        "logprob": 0.0,
        "special": false,
        "text": " not"
      },
      {
        "id": 3686,
        "logprob": 0.0,
        "special": false,
        "text": " yet"
      },
      {
        "id": 3288,
        "logprob": 0.0,
        "special": false,
        "text": " sent"
      },
      {
        "id": 904,
        "logprob": 0.0,
        "special": false,
        "text": " any"
      },
      {
        "id": 828,
        "logprob": 0.0,
        "special": false,
        "text": " data"
      },
      {
        "id": 382,
        "logprob": -1.5517578,
        "special": false,
        "text": ".\n\n"
      }
    ],
    "top_tokens": null
  },
  "generated_text": "Test request. The server has not yet sent any data.\n\n"

}
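
A snapshot such as the one above can be checked for internal consistency. The sketch below uses a trimmed copy of its `tokens` list and `generated_text`; it assumes the prompt was `"Test request"` and that the full text was requested, so that `generated_text` is the prompt followed by the generated token texts. The variable names are illustrative.

```python
import json

# Trimmed copy of the snapshot: only the fields used below are kept.
snapshot = json.loads(r'''
{
  "details": {
    "generated_tokens": 10,
    "tokens": [
      {"id": 13, "logprob": -2.2539062, "text": "."},
      {"id": 578, "logprob": -0.15563965, "text": " The"},
      {"id": 3622, "logprob": -0.8203125, "text": " server"},
      {"id": 706, "logprob": 0.0, "text": " has"},
      {"id": 539, "logprob": 0.0, "text": " not"},
      {"id": 3686, "logprob": 0.0, "text": " yet"},
      {"id": 3288, "logprob": 0.0, "text": " sent"},
      {"id": 904, "logprob": 0.0, "text": " any"},
      {"id": 828, "logprob": 0.0, "text": " data"},
      {"id": 382, "logprob": -1.5517578, "text": ".\n\n"}
    ]
  },
  "generated_text": "Test request. The server has not yet sent any data.\n\n"
}
''')

prompt = "Test request"  # assumption: the prompt that produced this snapshot
tokens = snapshot["details"]["tokens"]
continuation = "".join(token["text"] for token in tokens)
sequence_logprob = sum(token["logprob"] for token in tokens)

# The token count matches the reported number of generated tokens.
print(len(tokens) == snapshot["details"]["generated_tokens"])
# The prompt plus the token texts reproduces the generated text.
print(prompt + continuation == snapshot["generated_text"])
# Sum of per-token log-probabilities for the generated continuation.
print(round(sequence_logprob, 4))
```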


@ -0,0 +1,338 @@

[
  {
    "details": {
      "best_of_sequences": null,
      "finish_reason": "length",
      "generated_tokens": 10,
      "prefill": [
        {
          "id": 2323,
          "logprob": null,
          "text": "Test"
        },
        {
          "id": 1715,
          "logprob": -11.34375,
          "text": " request"
        }
      ],
      "seed": null,
      "tokens": [
        {
          "id": 198,
          "logprob": -2.5742188,
          "special": false,
          "text": "\n"
        },
        {
          "id": 262,
          "logprob": -1.6220703,
          "special": false,
          "text": " "
        },
        {
          "id": 3270,
          "logprob": -2.0410156,
          "special": false,
          "text": " \"\"\"\n"
        },
        {
          "id": 262,
          "logprob": -0.015281677,
          "special": false,
          "text": " "
        },
        {
          "id": 422,
          "logprob": -2.1445312,
          "special": false,
          "text": " if"
        },
        {
          "id": 1715,
          "logprob": -0.92333984,
          "special": false,
          "text": " request"
        },
        {
          "id": 13204,
          "logprob": -0.07672119,
          "special": false,
          "text": ".method"
        },
        {
          "id": 624,
          "logprob": -0.021987915,
          "special": false,
          "text": " =="
        },
        {
          "id": 364,
          "logprob": -0.39208984,
          "special": false,
          "text": " '"
        },
        {
          "id": 3019,
          "logprob": -0.10638428,
          "special": false,
          "text": "POST"
        }
      ],
      "top_tokens": null
    },
    "generated_text": "\n \"\"\"\n if request.method == 'POST"
  },
  {
    "details": {
      "best_of_sequences": null,
      "finish_reason": "length",
      "generated_tokens": 10,
      "prefill": [
        {
          "id": 2323,
          "logprob": null,
          "text": "Test"
        },
        {
          "id": 1715,
          "logprob": -11.34375,
          "text": " request"
        }
      ],
      "seed": null,
      "tokens": [
        {
          "id": 198,
          "logprob": -2.5742188,
          "special": false,
          "text": "\n"
        },
        {
          "id": 262,
          "logprob": -1.6220703,
          "special": false,
          "text": " "
        },
        {
          "id": 3270,
          "logprob": -2.0410156,
          "special": false,
          "text": " \"\"\"\n"
        },
        {
          "id": 262,
          "logprob": -0.015281677,
          "special": false,
          "text": " "
        },
        {
          "id": 422,
          "logprob": -2.1445312,
          "special": false,
          "text": " if"
        },
        {
          "id": 1715,
          "logprob": -0.92333984,
          "special": false,
          "text": " request"
        },
        {
          "id": 13204,
          "logprob": -0.07672119,
          "special": false,
          "text": ".method"
        },
        {
          "id": 624,
          "logprob": -0.021987915,
          "special": false,
          "text": " =="
        },
        {
          "id": 364,
          "logprob": -0.39208984,
          "special": false,
          "text": " '"
        },
        {
          "id": 3019,
          "logprob": -0.10638428,
          "special": false,
          "text": "POST"
        }
      ],
      "top_tokens": null
    },
    "generated_text": "\n \"\"\"\n if request.method == 'POST"
  },
  {
    "details": {
      "best_of_sequences": null,
      "finish_reason": "length",
      "generated_tokens": 10,
      "prefill": [
        {
          "id": 2323,
          "logprob": null,
          "text": "Test"
        },
        {
          "id": 1715,
          "logprob": -11.34375,
          "text": " request"
        }
      ],
      "seed": null,
      "tokens": [
        {
          "id": 198,
          "logprob": -2.5742188,
          "special": false,
          "text": "\n"
        },
        {
          "id": 262,
          "logprob": -1.6220703,
          "special": false,
          "text": " "
        },
        {
          "id": 3270,
          "logprob": -2.0410156,
          "special": false,
          "text": " \"\"\"\n"
        },
        {
          "id": 262,
          "logprob": -0.015281677,
          "special": false,
          "text": " "
        },
        {
          "id": 422,
          "logprob": -2.1445312,
          "special": false,
          "text": " if"
        },
        {
          "id": 1715,
          "logprob": -0.92333984,
          "special": false,
          "text": " request"
        },
        {
          "id": 13204,
          "logprob": -0.07672119,
          "special": false,
          "text": ".method"
        },
        {
          "id": 624,
          "logprob": -0.021987915,
          "special": false,
          "text": " =="
        },
        {
          "id": 364,
          "logprob": -0.39208984,
          "special": false,
          "text": " '"
        },
        {
          "id": 3019,
          "logprob": -0.10638428,
          "special": false,
          "text": "POST"
        }
      ],
      "top_tokens": null
    },
    "generated_text": "\n \"\"\"\n if request.method == 'POST"
  },
  {
    "details": {
      "best_of_sequences": null,
      "finish_reason": "length",
      "generated_tokens": 10,
      "prefill": [
        {
          "id": 2323,
          "logprob": null,
          "text": "Test"
        },
        {
          "id": 1715,
          "logprob": -11.34375,
          "text": " request"
        }
      ],
      "seed": null,
      "tokens": [
        {
          "id": 198,
          "logprob": -2.5742188,
          "special": false,
          "text": "\n"
        },
        {
          "id": 262,
          "logprob": -1.6220703,
          "special": false,
          "text": " "
        },
        {
          "id": 3270,
          "logprob": -2.0410156,
          "special": false,
          "text": " \"\"\"\n"
        },
        {
          "id": 262,
          "logprob": -0.015281677,
          "special": false,
          "text": " "
        },
        {
          "id": 422,
          "logprob": -2.1445312,
          "special": false,
          "text": " if"
        },
        {
          "id": 1715,
          "logprob": -0.92333984,
          "special": false,
          "text": " request"
        },
        {
          "id": 13204,
          "logprob": -0.07672119,
          "special": false,
          "text": ".method"
        },
        {
          "id": 624,
          "logprob": -0.021987915,
          "special": false,
          "text": " =="
        },
        {
          "id": 364,
          "logprob": -0.39208984,
          "special": false,
          "text": " '"
        },
        {
          "id": 3019,
          "logprob": -0.10638428,
          "special": false,
          "text": "POST"
        }
      ],
      "top_tokens": null
    },
    "generated_text": "\n \"\"\"\n if request.method == 'POST"
  }
]

@ -0,0 +1,65 @@

import pytest


@pytest.fixture(scope="module")
def flash_llama_gptq_marlin_handle(launcher):
    with launcher(
        "astronomer/Llama-3-8B-Instruct-GPTQ-4-Bit", num_shard=2, quantize="marlin"
    ) as handle:
        yield handle


@pytest.fixture(scope="module")
async def flash_llama_gptq_marlin(flash_llama_gptq_marlin_handle):
    await flash_llama_gptq_marlin_handle.health(300)
    return flash_llama_gptq_marlin_handle.client


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin(flash_llama_gptq_marlin, response_snapshot):
    response = await flash_llama_gptq_marlin.generate(
        "Test request", max_new_tokens=10, decoder_input_details=True
    )

    assert response.details.generated_tokens == 10
    assert response == response_snapshot


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin_all_params(
    flash_llama_gptq_marlin, response_snapshot
):
    response = await flash_llama_gptq_marlin.generate(
        "Test request",
        max_new_tokens=10,
        repetition_penalty=1.2,
        return_full_text=True,
        temperature=0.5,
        top_p=0.9,
        top_k=10,
        truncate=5,
        typical_p=0.9,
        watermark=True,
        decoder_input_details=True,
        seed=0,
    )

    assert response.details.generated_tokens == 10
    assert response == response_snapshot


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin_load(
    flash_llama_gptq_marlin, generate_load, response_snapshot
):
    responses = await generate_load(
        flash_llama_gptq_marlin, "Test request", max_new_tokens=10, n=4
    )

    assert len(responses) == 4
    assert all([r.generated_text == responses[0].generated_text for r in responses])

    assert responses == response_snapshot
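
The `generate_load` fixture used by the load test is defined outside this diff. A plausible reading, offered as an assumption rather than the fixture's real code, is that it issues `n` identical requests concurrently and gathers the responses. `FakeClient` and `FakeResponse` below are hypothetical stand-ins so that the sketch runs without a server.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class FakeResponse:
    """Hypothetical response object exposing only `generated_text`."""
    generated_text: str


class FakeClient:
    """Hypothetical client that answers deterministically without a server."""

    async def generate(self, prompt, max_new_tokens):
        await asyncio.sleep(0)  # yield control, as a network call would
        return FakeResponse(generated_text=f"{prompt} ({max_new_tokens} new tokens)")


async def generate_load(client, prompt, max_new_tokens, n):
    """Send `n` identical requests concurrently and collect the responses."""
    pending = [
        client.generate(prompt, max_new_tokens=max_new_tokens) for _ in range(n)
    ]
    return await asyncio.gather(*pending)


responses = asyncio.run(
    generate_load(FakeClient(), "Test request", max_new_tokens=10, n=4)
)
print(len(responses))
print(all(r.generated_text == responses[0].generated_text for r in responses))
```

With greedy decoding, identical concurrent requests are expected to return the same text, which is what the `assert all(...)` line in the load test checks.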


@ -3,7 +3,6 @@ include Makefile-flash-att-v2

 include Makefile-vllm
 include Makefile-awq
 include Makefile-eetq
-include Makefile-marlin
 include Makefile-selective-scan
 
 unit-tests:

@ -1,11 +0,0 @@

marlin_commit := 2f6d7c10e124b3c5fa29ff8d77d568bd7af3274c

build-marlin:
	if [ ! -d 'marlin' ]; then \
		pip install -U ninja packaging --no-cache-dir && \
		git clone https://github.com/IST-DASLab/marlin.git marlin; \
	fi
	cd marlin && git fetch && git checkout $(marlin_commit) && python setup.py build

install-marlin: build-marlin
	cd marlin && git fetch && git checkout $(marlin_commit) && pip install -e .

@ -0,0 +1,20 @@

These kernels were vendored from vLLM. The Marlin kernels were developed
by Elias Frantar and extended by Neural Magic.

---

Copyright (C) Marlin.2024 Elias Frantar
Modified by Neural Magic
Copyright 2024 The vLLM team.

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

    http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

@ -0,0 +1,44 @@

import torch

def gptq_marlin_gemm(
    a: torch.Tensor,
    b_q_weight: torch.Tensor,
    b_scales: torch.Tensor,
    g_idx: torch.Tensor,
    perm: torch.Tensor,
    workspace: torch.Tensor,
    num_bits: int,
    size_m: int,
    size_n: int,
    size_k: int,
    is_k_full: bool,
) -> torch.Tensor:
    """
    Matrix multiplication using Marlin kernels. This is an extension of
    `marlin_gemm` that supports converted GPTQ kernels.
    """
    ...

def gptq_marlin_repack(
    b_q_weight: torch.Tensor,
    perm: torch.Tensor,
    size_k: int,
    size_n: int,
    num_bits: int,
) -> torch.Tensor:
    """Repack GPTQ parameters for Marlin kernels."""
    ...

def marlin_gemm(
    a: torch.Tensor,
    b_q_weight: torch.Tensor,
    b_scales: torch.Tensor,
    workspace: torch.Tensor,
    size_m: int,
    size_n: int,
    size_k: int,
) -> torch.Tensor:
    """
    Matrix multiplication using Marlin kernels.
    """
    ...

@ -0,0 +1,11 @@

#include <torch/extension.h>

#include "ext.hh"

PYBIND11_MODULE(TORCH_EXTENSION_NAME, m) {
  m.def("gptq_marlin_gemm", &gptq_marlin_gemm,
        "Marlin gemm with GPTQ compatibility");
  m.def("gptq_marlin_repack", &gptq_marlin_repack,
        "Repack GPTQ parameters for Marlin");
  m.def("marlin_gemm", &marlin_gemm, "Marlin gemm");
}

@ -0,0 +1,23 @@

#pragma once

#include <torch/library.h>

#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ < 800
// No support for async
#else

torch::Tensor gptq_marlin_gemm(torch::Tensor &a, torch::Tensor &b_q_weight,
                               torch::Tensor &b_scales, torch::Tensor &g_idx,
                               torch::Tensor &perm, torch::Tensor &workspace,
                               int64_t num_bits, int64_t size_m, int64_t size_n,
                               int64_t size_k, bool is_k_full);

torch::Tensor gptq_marlin_repack(torch::Tensor &b_q_weight, torch::Tensor &perm,
                                 int64_t size_k, int64_t size_n,
                                 int64_t num_bits);

torch::Tensor marlin_gemm(torch::Tensor &a, torch::Tensor &b_q_weight,
                          torch::Tensor &b_scales, torch::Tensor &workspace,
                          int64_t size_m, int64_t size_n, int64_t size_k);

#endif

File diff suppressed because it is too large


@ -0,0 +1,76 @@

#pragma once

#include <torch/all.h>

#include <ATen/cuda/CUDAContext.h>
#include <c10/cuda/CUDAGuard.h>
#include <cuda.h>
#include <cuda_fp16.h>
#include <cuda_runtime.h>
#include <iostream>

namespace gptq_marlin {

// 8 warps are a good choice since every SM has 4 schedulers and having more
// than 1 warp per scheduler allows some more latency hiding. At the same time,
// we want relatively few warps to have many registers per warp and small tiles.
static constexpr int default_threads = 256;

static constexpr int pipe_stages =
    4;  // 4 pipeline stages fit into shared memory

static constexpr int min_thread_n = 64;
static constexpr int min_thread_k = 64;

static constexpr int tile_size = 16;
static constexpr int max_par = 16;

template <typename T, int n>
struct Vec {
  T elems[n];
  __device__ T& operator[](int i) { return elems[i]; }
};

using I4 = Vec<int, 4>;

constexpr int div_ceil(int a, int b) { return (a + b - 1) / b; }

#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ < 800
// No support for async
#else

__device__ inline void cp_async4_pred(void* smem_ptr, const void* glob_ptr,
                                      bool pred = true) {
  const int BYTES = 16;
  uint32_t smem = static_cast<uint32_t>(__cvta_generic_to_shared(smem_ptr));
  asm volatile(
      "{\n"
      " .reg .pred p;\n"
      " setp.ne.b32 p, %0, 0;\n"
      " @p cp.async.cg.shared.global [%1], [%2], %3;\n"
      "}\n" ::"r"((int)pred),
      "r"(smem), "l"(glob_ptr), "n"(BYTES));
}

__device__ inline void cp_async4(void* smem_ptr, const void* glob_ptr) {
  const int BYTES = 16;
  uint32_t smem = static_cast<uint32_t>(__cvta_generic_to_shared(smem_ptr));
  asm volatile(
      "{\n"
      " cp.async.cg.shared.global [%0], [%1], %2;\n"
      "}\n" ::"r"(smem),
      "l"(glob_ptr), "n"(BYTES));
}

__device__ inline void cp_async_fence() {
  asm volatile("cp.async.commit_group;\n" ::);
}

template <int n>
__device__ inline void cp_async_wait() {
  asm volatile("cp.async.wait_group %0;\n" ::"n"(n));
}

#endif

}  // namespace gptq_marlin
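`div_ceil` above is ordinary round-up integer division. The repack kernel in this commit uses it to split the K tiles across thread blocks (`block_k_tiles = div_ceil(k_tiles, gridDim.x)`), and blocks whose first tile lies past the end return early. A minimal Python sketch of that partitioning follows; the function name `k_tile_ranges` and its arguments are illustrative, not part of the codebase.

```python
def div_ceil(a: int, b: int) -> int:
    """Round-up integer division, same formula as the C++ helper."""
    return (a + b - 1) // b


def k_tile_ranges(k_tiles: int, num_blocks: int):
    """List (block, start_k_tile, finish_k_tile) for every block that has work.

    Mirrors the index arithmetic at the top of marlin_repack_kernel;
    `num_blocks` plays the role of gridDim.x.
    """
    block_k_tiles = div_ceil(k_tiles, num_blocks)
    ranges = []
    for block in range(num_blocks):
        start = block * block_k_tiles
        if start >= k_tiles:
            continue  # the kernel returns early for such blocks
        finish = min(start + block_k_tiles, k_tiles)
        ranges.append((block, start, finish))
    return ranges
```

With 10 tiles and 4 blocks, the first three blocks take three tiles each and the last block takes the remaining one; with more blocks than tiles, the surplus blocks simply do nothing.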

@ -0,0 +1,77 @@

#ifndef _data_types_cuh
#define _data_types_cuh
#include "gptq_marlin.cuh"
#include <cuda_fp16.h>
#include <cuda_bf16.h>

namespace gptq_marlin {

template <typename scalar_t>
class ScalarType {};

template <>
class ScalarType<half> {
 public:
  using scalar_t = half;
  using scalar_t2 = half2;

  // Matrix fragments for tensor core instructions; their precise layout is
  // documented here:
  // https://docs.nvidia.com/cuda/parallel-thread-execution/index.html#matrix-fragments-for-mma-m16n8k16-with-floating-point-type
  using FragA = Vec<half2, 4>;
  using FragB = Vec<half2, 2>;
  using FragC = Vec<float, 4>;
  using FragS = Vec<half2, 1>;

  static __device__ float inline num2float(const half x) {
    return __half2float(x);
  }

  static __device__ half2 inline num2num2(const half x) {
    return __half2half2(x);
  }

  static __device__ half2 inline nums2num2(const half x1, const half x2) {
    return __halves2half2(x1, x2);
  }

  static __host__ __device__ half inline float2num(const float x) {
    return __float2half(x);
  }
};

template <>
class ScalarType<nv_bfloat16> {
 public:
  using scalar_t = nv_bfloat16;
  using scalar_t2 = nv_bfloat162;

  using FragA = Vec<nv_bfloat162, 4>;
  using FragB = Vec<nv_bfloat162, 2>;
  using FragC = Vec<float, 4>;
  using FragS = Vec<nv_bfloat162, 1>;

#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ >= 800
  static __device__ float inline num2float(const nv_bfloat16 x) {
    return __bfloat162float(x);
  }

  static __device__ nv_bfloat162 inline num2num2(const nv_bfloat16 x) {
    return __bfloat162bfloat162(x);
  }

  static __device__ nv_bfloat162 inline nums2num2(const nv_bfloat16 x1,
                                                  const nv_bfloat16 x2) {
    return __halves2bfloat162(x1, x2);
  }

  static __host__ __device__ nv_bfloat16 inline float2num(const float x) {
    return __float2bfloat16(x);
  }
#endif
};

}  // namespace gptq_marlin

#endif

@ -0,0 +1,350 @@

#include "gptq_marlin.cuh"

namespace gptq_marlin {

static constexpr int repack_stages = 8;

static constexpr int repack_threads = 256;

static constexpr int tile_k_size = tile_size;
static constexpr int tile_n_size = tile_k_size * 4;

#if defined(__CUDA_ARCH__) && __CUDA_ARCH__ < 800

template <int const num_threads, int const num_bits, bool const has_perm>
__global__ void marlin_repack_kernel(
    uint32_t const* __restrict__ b_q_weight_ptr,
    uint32_t const* __restrict__ perm_ptr, uint32_t* __restrict__ out_ptr,
    int size_k, int size_n) {}

}  // namespace gptq_marlin

torch::Tensor gptq_marlin_repack(torch::Tensor& b_q_weight, torch::Tensor& perm,
                                 int64_t size_k, int64_t size_n,
                                 int64_t num_bits) {
  TORCH_CHECK_NOT_IMPLEMENTED(
      false, "marlin_repack_from_gptq(..) requires CUDA_ARCH >= 8.0");
  return torch::empty({1, 1});
}

#else

template <int const num_threads, int const num_bits, bool const has_perm>
__global__ void marlin_repack_kernel(
    uint32_t const* __restrict__ b_q_weight_ptr,
    uint32_t const* __restrict__ perm_ptr, uint32_t* __restrict__ out_ptr,
    int size_k, int size_n) {
  constexpr int pack_factor = 32 / num_bits;

  int k_tiles = size_k / tile_k_size;
  int n_tiles = size_n / tile_n_size;
  int block_k_tiles = div_ceil(k_tiles, gridDim.x);

  int start_k_tile = blockIdx.x * block_k_tiles;
  if (start_k_tile >= k_tiles) {
    return;
  }

  int finish_k_tile = min(start_k_tile + block_k_tiles, k_tiles);

  // Wait until the next thread tile has been loaded to shared memory.
  auto wait_for_stage = [&]() {
    // We only have `stages - 2` active fetches since we are double buffering
    // and can only issue the next fetch when it is guaranteed that the previous
    // shared memory load is fully complete (as it may otherwise be
    // overwritten).
    cp_async_wait<repack_stages - 2>();
    __syncthreads();
  };

  extern __shared__ int4 sh[];

  constexpr int perm_size = tile_k_size / 4;

  int4* sh_perm_ptr = sh;
  int4* sh_pipe_ptr = sh_perm_ptr;
  if constexpr (has_perm) {
    sh_pipe_ptr += perm_size;
  }

  constexpr int tile_ints = tile_k_size / pack_factor;

  constexpr int stage_n_threads = tile_n_size / 4;
  constexpr int stage_k_threads = has_perm ? tile_k_size : tile_ints;
  constexpr int stage_size = stage_k_threads * stage_n_threads;

  auto load_perm_to_shared = [&](int k_tile_id) {
    int first_k_int4 = (k_tile_id * tile_k_size) / 4;

    int4 const* perm_int4_ptr = reinterpret_cast<int4 const*>(perm_ptr);

    if (threadIdx.x < perm_size) {
      sh_perm_ptr[threadIdx.x] = perm_int4_ptr[first_k_int4 + threadIdx.x];
    }
    __syncthreads();
  };

  auto fetch_to_shared = [&](int pipe, int k_tile_id, int n_tile_id) {
    if (n_tile_id >= n_tiles) {
      cp_async_fence();
      return;
    }

    int first_n = n_tile_id * tile_n_size;

    int4* sh_ptr = sh_pipe_ptr + stage_size * pipe;

    if constexpr (has_perm) {
      if (threadIdx.x < stage_size) {
        int k_id = threadIdx.x / stage_n_threads;
        int n_id = threadIdx.x % stage_n_threads;

        uint32_t const* sh_perm_int_ptr =
            reinterpret_cast<uint32_t const*>(sh_perm_ptr);

        int src_k = sh_perm_int_ptr[k_id];
        int src_k_packed = src_k / pack_factor;

        cp_async4(
            &sh_ptr[k_id * stage_n_threads + n_id],
            reinterpret_cast<int4 const*>(&(
                b_q_weight_ptr[src_k_packed * size_n + first_n + (n_id * 4)])));
      }

    } else {
      if (threadIdx.x < stage_size) {
        int k_id = threadIdx.x / stage_n_threads;
        int n_id = threadIdx.x % stage_n_threads;

        int first_k = k_tile_id * tile_k_size;
        int first_k_packed = first_k / pack_factor;

        cp_async4(&sh_ptr[k_id * stage_n_threads + n_id],
                  reinterpret_cast<int4 const*>(
                      &(b_q_weight_ptr[(first_k_packed + k_id) * size_n +
                                       first_n + (n_id * 4)])));
      }
    }

    cp_async_fence();
  };

  auto repack_tile = [&](int pipe, int k_tile_id, int n_tile_id) {
    if (n_tile_id >= n_tiles) {
      return;
    }

    int warp_id = threadIdx.x / 32;
    int th_id = threadIdx.x % 32;

    if (warp_id >= 4) {
      return;
    }

    int tc_col = th_id / 4;
    int tc_row = (th_id % 4) * 2;

    constexpr int tc_offsets[4] = {0, 1, 8, 9};

    int cur_n = warp_id * 16 + tc_col;

    constexpr int sh_stride = 64;
    constexpr uint32_t mask = (1 << num_bits) - 1;

    int4* sh_stage_ptr = sh_pipe_ptr + stage_size * pipe;
    uint32_t* sh_stage_int_ptr = reinterpret_cast<uint32_t*>(sh_stage_ptr);

    uint32_t* sh_perm_int_ptr = reinterpret_cast<uint32_t*>(sh_perm_ptr);

    uint32_t vals[8];

    if constexpr (has_perm) {
      for (int i = 0; i < 4; i++) {
        int k_idx = tc_row + tc_offsets[i];

        uint32_t src_k = sh_perm_int_ptr[k_idx];
        uint32_t src_k_pos = src_k % pack_factor;

        uint32_t b1_val = sh_stage_int_ptr[k_idx * sh_stride + cur_n];
        uint32_t b1_cur_val = (b1_val >> (src_k_pos * num_bits)) & mask;

        uint32_t b2_val = sh_stage_int_ptr[k_idx * sh_stride + cur_n + 8];
        uint32_t b2_cur_val = (b2_val >> (src_k_pos * num_bits)) & mask;

        vals[i] = b1_cur_val;
        vals[4 + i] = b2_cur_val;
      }

    } else {
      uint32_t b1_vals[tile_ints];
      uint32_t b2_vals[tile_ints];

      #pragma unroll
      for (int i = 0; i < tile_ints; i++) {
        b1_vals[i] = sh_stage_int_ptr[cur_n + sh_stride * i];
        b2_vals[i] = sh_stage_int_ptr[cur_n + 8 + sh_stride * i];
      }

      #pragma unroll
      for (int i = 0; i < 4; i++) {
        int cur_elem = tc_row + tc_offsets[i];
        int cur_int = cur_elem / pack_factor;
        int cur_pos = cur_elem % pack_factor;

        vals[i] = (b1_vals[cur_int] >> (cur_pos * num_bits)) & mask;
        vals[4 + i] = (b2_vals[cur_int] >> (cur_pos * num_bits)) & mask;
      }
    }

    constexpr int tile_size = tile_k_size * tile_n_size / pack_factor;
    int out_offset = (k_tile_id * n_tiles + n_tile_id) * tile_size;

    // Result of:
    // https://github.com/NVIDIA/FasterTransformer/blob/main/src/fastertransformer/cutlass_extensions/include/cutlass_extensions/interleaved_numeric_conversion.h
    if constexpr (num_bits == 4) {
      constexpr int pack_idx[8] = {0, 2, 4, 6, 1, 3, 5, 7};

      uint32_t res = 0;
      #pragma unroll
      for (int i = 0; i < 8; i++) {
        res |= vals[pack_idx[i]] << (i * 4);
      }

      out_ptr[out_offset + th_id * 4 + warp_id] = res;

    } else {
      constexpr int pack_idx[4] = {0, 2, 1, 3};

      uint32_t res1 = 0;
      uint32_t res2 = 0;
      #pragma unroll
      for (int i = 0; i < 4; i++) {
        res1 |= vals[pack_idx[i]] << (i * 8);
        res2 |= vals[4 + pack_idx[i]] << (i * 8);
      }

      out_ptr[out_offset + th_id * 8 + (warp_id * 2) + 0] = res1;
      out_ptr[out_offset + th_id * 8 + (warp_id * 2) + 1] = res2;
    }
  };

  auto start_pipes = [&](int k_tile_id, int n_tile_id) {
    #pragma unroll
    for (int pipe = 0; pipe < repack_stages - 1; pipe++) {
      fetch_to_shared(pipe, k_tile_id, n_tile_id + pipe);
    }

    wait_for_stage();
  };
  #pragma unroll
  for (int k_tile_id = start_k_tile; k_tile_id < finish_k_tile; k_tile_id++) {
    int n_tile_id = 0;

    if constexpr (has_perm) {
      load_perm_to_shared(k_tile_id);
    }

    start_pipes(k_tile_id, n_tile_id);

    while (n_tile_id < n_tiles) {
      #pragma unroll
      for (int pipe = 0; pipe < repack_stages; pipe++) {
        fetch_to_shared((pipe + repack_stages - 1) % repack_stages, k_tile_id,
                        n_tile_id + pipe + repack_stages - 1);
        repack_tile(pipe, k_tile_id, n_tile_id + pipe);
        wait_for_stage();
      }
      n_tile_id += repack_stages;
    }
  }
}

}  // namespace gptq_marlin

#define CALL_IF(NUM_BITS, HAS_PERM)                                          \
  else if (num_bits == NUM_BITS && has_perm == HAS_PERM) {                   \
    cudaFuncSetAttribute(                                                    \
        gptq_marlin::marlin_repack_kernel<gptq_marlin::repack_threads,       \
                                          NUM_BITS, HAS_PERM>,               \
        cudaFuncAttributeMaxDynamicSharedMemorySize, max_shared_mem);        \
    gptq_marlin::marlin_repack_kernel<gptq_marlin::repack_threads, NUM_BITS, \
                                      HAS_PERM>                              \
        <<<blocks, gptq_marlin::repack_threads, max_shared_mem, stream>>>(   \
            b_q_weight_ptr, perm_ptr, out_ptr, size_k, size_n);              \
  }

torch::Tensor gptq_marlin_repack(torch::Tensor& b_q_weight, torch::Tensor& perm,
                                 int64_t size_k, int64_t size_n,
                                 int64_t num_bits) {
  // Verify compatibility with marlin tile of 16x64
  TORCH_CHECK(size_k % gptq_marlin::tile_k_size == 0, "size_k = ", size_k,
              " is not divisible by tile_k_size = ", gptq_marlin::tile_k_size);
  TORCH_CHECK(size_n % gptq_marlin::tile_n_size == 0, "size_n = ", size_n,
              " is not divisible by tile_n_size = ", gptq_marlin::tile_n_size);

  TORCH_CHECK(num_bits == 4 || num_bits == 8,
              "num_bits must be 4 or 8. Got = ", num_bits);
  int const pack_factor = 32 / num_bits;

  // Verify B
  TORCH_CHECK((size_k / pack_factor) == b_q_weight.size(0),
              "Shape mismatch: b_q_weight.size(0) = ", b_q_weight.size(0),
              ", size_k = ", size_k, ", pack_factor = ", pack_factor);
  TORCH_CHECK(b_q_weight.size(1) == size_n,
              "b_q_weight.size(1) = ", b_q_weight.size(1),
              " is not size_n = ", size_n);

  // Verify device and strides
  TORCH_CHECK(b_q_weight.device().is_cuda(), "b_q_weight is not on GPU");
  TORCH_CHECK(b_q_weight.is_contiguous(), "b_q_weight is not contiguous");
  TORCH_CHECK(b_q_weight.dtype() == at::kInt, "b_q_weight type is not kInt");

  TORCH_CHECK(perm.device().is_cuda(), "perm is not on GPU");
  TORCH_CHECK(perm.is_contiguous(), "perm is not contiguous");
  TORCH_CHECK(perm.dtype() == at::kInt, "perm type is not at::kInt");

  // Alloc buffers
  const at::cuda::OptionalCUDAGuard device_guard(device_of(b_q_weight));
  auto options = torch::TensorOptions()
                     .dtype(b_q_weight.dtype())
                     .device(b_q_weight.device());
  torch::Tensor out =
      torch::empty({size_k / gptq_marlin::tile_size,
                    size_n * gptq_marlin::tile_size / pack_factor},
                   options);

  // Detect if there is act_order
  bool has_perm = perm.size(0) != 0;

  // Get ptrs
  uint32_t const* b_q_weight_ptr =
      reinterpret_cast<uint32_t const*>(b_q_weight.data_ptr());
  uint32_t const* perm_ptr = reinterpret_cast<uint32_t const*>(perm.data_ptr());
  uint32_t* out_ptr = reinterpret_cast<uint32_t*>(out.data_ptr());

  // Get dev info
  int dev = b_q_weight.get_device();
  cudaStream_t stream = at::cuda::getCurrentCUDAStream(dev);
  int blocks;
  cudaDeviceGetAttribute(&blocks, cudaDevAttrMultiProcessorCount, dev);

  int max_shared_mem = 0;
  cudaDeviceGetAttribute(&max_shared_mem,
                         cudaDevAttrMaxSharedMemoryPerBlockOptin, dev);
  TORCH_CHECK(max_shared_mem > 0);

  if (false) {
  }
  CALL_IF(4, false)
  CALL_IF(4, true)
  CALL_IF(8, false)
  CALL_IF(8, true)
  else {
    TORCH_CHECK(false, "Unsupported repack config: num_bits = ", num_bits,
                ", has_perm = ", has_perm);
  }

  return out;
}

#endif
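The last step of `repack_tile` packs the eight extracted values of a thread into 32-bit words using a fixed interleaving (`pack_idx`). The following Python sketch reproduces only that bit arithmetic so the resulting layout can be inspected on the CPU. The function names are illustrative and do not exist in the extension, and the inputs are assumed to be already masked to `num_bits` bits, as they are in the kernel.

```python
PACK_IDX_4BIT = [0, 2, 4, 6, 1, 3, 5, 7]  # same table as the kernel (num_bits == 4)
PACK_IDX_8BIT = [0, 2, 1, 3]              # same table as the kernel (num_bits == 8)


def extract(word: int, pos: int, num_bits: int) -> int:
    """Read the pos-th num_bits-wide field of a packed 32-bit word."""
    mask = (1 << num_bits) - 1
    return (word >> (pos * num_bits)) & mask


def pack_4bit(vals):
    """Pack eight 4-bit values into one word in interleaved order."""
    res = 0
    for i in range(8):
        res |= vals[PACK_IDX_4BIT[i]] << (i * 4)
    return res


def pack_8bit(vals):
    """Pack eight 8-bit values into two words (res1, res2)."""
    res1 = 0
    res2 = 0
    for i in range(4):
        res1 |= vals[PACK_IDX_8BIT[i]] << (i * 8)
        res2 |= vals[4 + PACK_IDX_8BIT[i]] << (i * 8)
    return res1, res2


def unpack_4bit(word):
    """Invert pack_4bit: recover the eight values in their original order."""
    vals = [0] * 8
    for i in range(8):
        vals[PACK_IDX_4BIT[i]] = extract(word, i, 4)
    return vals
```

For example, packing the values 0 through 7 in 4-bit mode places the even-indexed values in the low half of the word and the odd-indexed values in the high half.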

File diff suppressed because it is too large


@ -0,0 +1,21 @@

from setuptools import setup
from torch.utils.cpp_extension import BuildExtension, CUDAExtension

extra_compile_args = []

setup(
    name="marlin_kernels",
    ext_modules=[
        CUDAExtension(
            name="marlin_kernels",
            sources=[
                "marlin_kernels/gptq_marlin.cu",
                "marlin_kernels/gptq_marlin_repack.cu",
                "marlin_kernels/marlin_cuda_kernel.cu",
                "marlin_kernels/ext.cpp",
            ],
            extra_compile_args=extra_compile_args,
        ),
    ],
    cmdclass={"build_ext": BuildExtension},
)

@ -1,6 +1,7 @@

 from typing import Optional
 import torch
 from torch.nn import functional as F
+from text_generation_server.layers.marlin import GPTQMarlinLinear
 from text_generation_server.utils.import_utils import SYSTEM

 if SYSTEM == "rocm":

@ -223,13 +224,23 @@ def get_linear(weight, bias, quantize):

                 "You do not seem to have awq installed, either install it (cd server && make install-awq), or try using GPTQ `---quantize gptq` a conversion AWQ->GPTQ will happen on the fly"
             )
     elif quantize == "marlin":
-        from text_generation_server.layers.marlin import MarlinLinear, MarlinWeight
+        from text_generation_server.layers.marlin import (
+            GPTQMarlinWeight,
+            MarlinLinear,
+            MarlinWeight,
+        )

-        if not isinstance(weight, MarlinWeight):
+        if isinstance(weight, GPTQMarlinWeight):
+            linear = GPTQMarlinLinear(
+                weight=weight,
+                bias=bias,
+            )
+        elif isinstance(weight, MarlinWeight):
+            linear = MarlinLinear(weight=weight, bias=bias)
+        else:
             raise NotImplementedError(
                 f"The passed weight is not `marlin` compatible, loader needs to be updated."
             )
-        linear = MarlinLinear(B=weight.B, s=weight.s, bias=bias)
     else:
         raise NotImplementedError(f"Quantization `{quantize}` is not implemented yet.")
     return linear
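The new `marlin` branch of `get_linear` selects the layer class from the type of the loaded weight. The sketch below isolates that dispatch using empty stand-in classes so it can run without TGI installed. The real `MarlinWeight`, `GPTQMarlinWeight`, `MarlinLinear` and `GPTQMarlinLinear` live in `text_generation_server.layers.marlin` and carry tensors; the stand-ins and the function name `select_marlin_linear` are assumptions made for illustration only.

```python
class MarlinWeight:
    """Stand-in for a weight already stored in Marlin format."""


class GPTQMarlinWeight:
    """Stand-in for a GPTQ weight repacked for the Marlin kernels."""


class MarlinLinear:
    def __init__(self, weight, bias=None):
        self.weight = weight
        self.bias = bias


class GPTQMarlinLinear:
    def __init__(self, weight, bias=None):
        self.weight = weight
        self.bias = bias


def select_marlin_linear(weight, bias=None):
    """Pick the linear layer by weight type, as the `marlin` branch does."""
    if isinstance(weight, GPTQMarlinWeight):
        return GPTQMarlinLinear(weight=weight, bias=bias)
    elif isinstance(weight, MarlinWeight):
        return MarlinLinear(weight=weight, bias=bias)
    raise NotImplementedError(
        "The passed weight is not `marlin` compatible, loader needs to be updated."
    )
```

Checking the GPTQ-derived type first keeps the two weight formats on separate code paths, and any other weight type fails loudly instead of being passed to a kernel that cannot read it.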

@ -1,13 +1,15 @@

 from dataclasses import dataclass
-from typing import Optional
+from typing import Optional, Tuple, List

 import torch
 import torch.nn as nn

+from text_generation_server.utils.import_utils import SYSTEM
+
 try:
-    import marlin
+    import marlin_kernels
 except ImportError:
-    marlin = None
+    marlin_kernels = None

 try:
     major, _minor = torch.cuda.get_device_capability()

@ -15,9 +17,204 @@ try:

|

|||

except Exception:

|

||||