Merge branch 'main' into gpt_awq_4

commit 8c3859d153

@ -32,10 +32,6 @@ jobs:

|

|||

permissions:

|

||||

contents: write

|

||||

packages: write

|

||||

# This is used to complete the identity challenge

|

||||

# with sigstore/fulcio when running outside of PRs.

|

||||

id-token: write

|

||||

security-events: write

|

||||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v4

|

||||

|

|

|

|||

|

|

@ -39,6 +39,9 @@ jobs:

|

|||

matrix:

|

||||

hardware: ["cuda", "rocm", "intel-xpu", "intel-cpu"]

|

||||

uses: ./.github/workflows/build.yaml # calls the one above ^

|

||||

permissions:

|

||||

contents: write

|

||||

packages: write

|

||||

with:

|

||||

hardware: ${{ matrix.hardware }}

|

||||

# https://github.com/actions/runner/issues/2206

|

||||

|

|

|

|||

|

|

@ -35,7 +35,7 @@ jobs:

|

|||

with:

|

||||

# Released on: 02 May, 2024

|

||||

# https://releases.rs/docs/1.78.0/

|

||||

toolchain: 1.79.0

|

||||

toolchain: 1.80.0

|

||||

override: true

|

||||

components: rustfmt, clippy

|

||||

- name: Install Protoc

|

||||

|

|

|

|||

|

|

@ -19,3 +19,6 @@ server/exllama_kernels/exllama_kernels/exllama_ext_hip.cpp

|

|||

data/

|

||||

load_tests/*.json

|

||||

server/fbgemmm

|

||||

|

||||

.direnv/

|

||||

.venv/

|

||||

|

|

|

|||

|

|

@ -77,3 +77,4 @@ docs/openapi.json:

|

|||

- '#/paths/~1tokenize/post'

|

||||

- '#/paths/~1v1~1chat~1completions/post'

|

||||

- '#/paths/~1v1~1completions/post'

|

||||

- '#/paths/~1v1~1models/get'

|

||||

|

|

|

|||

File diff suppressed because it is too large


|

|

@ -29,6 +29,8 @@ tokenizers = { version = "0.19.1", features = ["http"] }

|

|||

hf-hub = { version = "0.3.1", features = ["tokio"] }

|

||||

metrics = { version = "0.23.0" }

|

||||

metrics-exporter-prometheus = { version = "0.15.1", features = [] }

|

||||

minijinja = { version = "2.2.0", features = ["json"] }

|

||||

minijinja-contrib = { version = "2.0.2", features = ["pycompat"] }

|

||||

|

||||

[profile.release]

|

||||

incremental = true

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

# Rust builder

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.80 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

@ -184,6 +184,12 @@ WORKDIR /usr/src

|

|||

COPY server/Makefile-selective-scan Makefile

|

||||

RUN make build-all

|

||||

|

||||

# Build flashinfer

|

||||

FROM kernel-builder AS flashinfer-builder

|

||||

WORKDIR /usr/src

|

||||

COPY server/Makefile-flashinfer Makefile

|

||||

RUN make install-flashinfer

|

||||

|

||||

# Text Generation Inference base image

|

||||

FROM nvidia/cuda:12.1.0-base-ubuntu22.04 AS base

|

||||

|

||||

|

|

@ -236,6 +242,7 @@ COPY --from=vllm-builder /usr/src/vllm/build/lib.linux-x86_64-cpython-310 /opt/c

|

|||

# Copy build artifacts from mamba builder

|

||||

COPY --from=mamba-builder /usr/src/mamba/build/lib.linux-x86_64-cpython-310/ /opt/conda/lib/python3.10/site-packages

|

||||

COPY --from=mamba-builder /usr/src/causal-conv1d/build/lib.linux-x86_64-cpython-310/ /opt/conda/lib/python3.10/site-packages

|

||||

COPY --from=flashinfer-builder /opt/conda/lib/python3.10/site-packages/flashinfer/ /opt/conda/lib/python3.10/site-packages/flashinfer/

|

||||

|

||||

# Install flash-attention dependencies

|

||||

RUN pip install einops --no-cache-dir

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

# Rust builder

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.80 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

|

|||

|

|

@ -1,6 +1,6 @@

|

|||

ARG PLATFORM=xpu

|

||||

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.80 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

@ -178,5 +178,8 @@ COPY --from=builder /usr/src/target/release-opt/text-generation-router /usr/loca

|

|||

COPY --from=builder /usr/src/target/release-opt/text-generation-launcher /usr/local/bin/text-generation-launcher

|

||||

|

||||

FROM ${PLATFORM} AS final

|

||||

ENV ATTENTION=paged

|

||||

ENV USE_PREFIX_CACHING=0

|

||||

ENV CUDA_GRAPHS=0

|

||||

ENTRYPOINT ["text-generation-launcher"]

|

||||

CMD ["--json-output"]

|

||||

|

|

|

|||

|

|

@ -189,6 +189,8 @@ overridden with the `--otlp-service-name` argument

|

|||

|

||||

|

||||

|

||||

Detailed blogpost by Adyen on TGI inner workings: [LLM inference at scale with TGI (Martin Iglesias Goyanes - Adyen, 2024)](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)

|

||||

|

||||

### Local install

|

||||

|

||||

You can also opt to install `text-generation-inference` locally.

|

||||

|

|

|

|||

|

|

@ -153,6 +153,8 @@ impl Client {

|

|||

}),

|

||||

// We truncate the input on the server side to be sure that it has the correct size

|

||||

truncate,

|

||||

// Most requests will have that

|

||||

add_special_tokens: true,

|

||||

// Blocks and slots will be set on the server side if we use paged attention

|

||||

blocks: vec![],

|

||||

slots: vec![],

|

||||

|

|

|

|||

|

|

@ -221,6 +221,7 @@ impl Health for ShardedClient {

|

|||

chunks: vec![Chunk::Text("liveness".into()).into()],

|

||||

}),

|

||||

truncate: 10,

|

||||

add_special_tokens: true,

|

||||

prefill_logprobs: false,

|

||||

parameters: Some(NextTokenChooserParameters {

|

||||

temperature: 1.0,

|

||||

|

|

|

|||

|

|

@ -53,8 +53,8 @@ utoipa-swagger-ui = { version = "6.0.0", features = ["axum"] }

|

|||

init-tracing-opentelemetry = { version = "0.14.1", features = [

|

||||

"opentelemetry-otlp",

|

||||

] }

|

||||

minijinja = { version = "2.0.2" }

|

||||

minijinja-contrib = { version = "2.0.2", features = ["pycompat"] }

|

||||

minijinja = { workspace = true }

|

||||

minijinja-contrib = { workspace = true }

|

||||

futures-util = "0.3.30"

|

||||

regex = "1.10.3"

|

||||

once_cell = "1.19.0"

|

||||

|

|

|

|||

|

|

@ -35,27 +35,15 @@ impl BackendV3 {

|

|||

window_size: Option<u32>,

|

||||

speculate: u32,

|

||||

) -> Self {

|

||||

let prefix_caching = if let Ok(prefix_caching) = std::env::var("USE_PREFIX_CACHING") {

|

||||

matches!(prefix_caching.as_str(), "true" | "1")

|

||||

} else {

|

||||

false

|

||||

};

|

||||

let attention = if let Ok(attention) = std::env::var("ATTENTION") {

|

||||

attention

|

||||

let prefix_caching =

|

||||

std::env::var("USE_PREFIX_CACHING").expect("Expect prefix caching env var");

|

||||

let prefix_caching = matches!(prefix_caching.as_str(), "true" | "1");

|

||||

let attention: String = std::env::var("ATTENTION").expect("attention env var");

|

||||

|

||||

let attention: Attention = attention

|

||||

.parse()

|

||||

.unwrap_or_else(|_| panic!("Invalid attention was specified :`{attention}`"))

|

||||

} else if prefix_caching {

|

||||

Attention::FlashInfer

|

||||

} else {

|

||||

Attention::Paged

|

||||

};

|

||||

let block_size = if attention == Attention::FlashDecoding {

|

||||

256

|

||||

} else if attention == Attention::FlashInfer {

|

||||

1

|

||||

} else {

|

||||

16

|

||||

};

|

||||

.unwrap_or_else(|_| panic!("Invalid attention was specified :`{attention}`"));

|

||||

let block_size = attention.block_size();

|

||||

|

||||

let queue = Queue::new(

|

||||

requires_padding,
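The hunk above replaces an inline if/else chain with a call to `attention.block_size()`. A minimal sketch of what such an enum could look like follows, using the block sizes from the removed lines (256 for FlashDecoding, 1 for FlashInfer, 16 otherwise). The `FromStr` spellings (`"paged"`, `"flashdecoding"`, `"flashinfer"`) and the helper `parse_prefix_caching` are assumptions made for illustration; the actual definition is not part of this diff.

```rust
use std::str::FromStr;

/// Attention backends named in the hunk above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Attention {
    Paged,
    FlashDecoding,
    FlashInfer,
}

impl Attention {
    /// Block sizes copied from the removed if/else chain.
    pub fn block_size(&self) -> u32 {
        match self {
            Attention::FlashDecoding => 256,
            Attention::FlashInfer => 1,
            Attention::Paged => 16,
        }
    }
}

impl FromStr for Attention {
    type Err = String;

    fn from_str(s: &str) -> Result<Self, Self::Err> {
        // Assumption: these string spellings are illustrative only.
        match s {
            "paged" => Ok(Attention::Paged),
            "flashdecoding" => Ok(Attention::FlashDecoding),
            "flashinfer" => Ok(Attention::FlashInfer),
            other => Err(format!("Invalid attention was specified: `{other}`")),
        }
    }
}

/// Hypothetical helper mirroring the `"true" | "1"` check on USE_PREFIX_CACHING.
pub fn parse_prefix_caching(value: &str) -> bool {
    matches!(value, "true" | "1")
}
```

Moving the mapping onto the enum keeps the block size next to the backend it belongs to, so callers such as the constructor above only need `attention.block_size()`.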

|

||||

|

|

@ -180,6 +168,8 @@ pub(crate) async fn batching_task(

|

|||

None

|

||||

} else {

|

||||

// Minimum batch size

|

||||

// TODO: temporarily disable to avoid incorrect deallocation +

|

||||

// reallocation when using prefix caching.

|

||||

Some((batch_size as f32 * waiting_served_ratio).floor() as usize)

|

||||

};

|

||||

|

||||

|

|

|

|||

|

|

@ -1,4 +1,4 @@

|

|||

use std::{cmp::min, sync::Arc};

|

||||

use std::sync::Arc;

|

||||

use tokio::sync::{mpsc, oneshot};

|

||||

|

||||

use crate::radix::RadixAllocator;

|

||||

|

|

@ -137,7 +137,6 @@ pub trait Allocator {

|

|||

|

||||

fn free(&mut self, blocks: Vec<u32>, allocation_id: u64);

|

||||

}

|

||||

|

||||

pub struct SimpleAllocator {

|

||||

free_blocks: Vec<u32>,

|

||||

block_size: u32,

|

||||

|

|

@ -167,7 +166,7 @@ impl Allocator for SimpleAllocator {

|

|||

None => (tokens, 1),

|

||||

Some(window_size) => {

|

||||

let repeats = (tokens + window_size - 1) / window_size;

|

||||

let tokens = min(tokens, window_size);

|

||||

let tokens = core::cmp::min(tokens, window_size);

|

||||

(tokens, repeats as usize)

|

||||

}

|

||||

};
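The window handling in the hunk above can be read in isolation: with a sliding window, at most `window_size` tokens need storage, and `repeats` records how many windows the request spans (a ceiling division). The sketch below lifts that arithmetic into a free function; the name `windowed_tokens` is hypothetical, and it assumes `window_size` is non-zero.

```rust
/// Returns `(tokens_to_allocate, repeats)` for an optional sliding window.
/// Hypothetical helper; mirrors the match arms shown in the hunk above.
pub fn windowed_tokens(tokens: u32, window_size: Option<u32>) -> (u32, usize) {
    match window_size {
        // No window: allocate for every token, used once.
        None => (tokens, 1),
        Some(window_size) => {
            // Ceiling division: number of windows needed to cover `tokens`.
            let repeats = (tokens + window_size - 1) / window_size;
            // Never allocate more than one window's worth of tokens.
            let tokens = core::cmp::min(tokens, window_size);
            (tokens, repeats as usize)
        }
    }
}
```

For example, 10 tokens with a window of 4 need storage for 4 tokens, reused across 3 windows.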

|

||||

|

|

|

|||

|

|

@ -149,6 +149,7 @@ impl Client {

|

|||

requests.push(Request {

|

||||

id: 0,

|

||||

inputs,

|

||||

add_special_tokens: true,

|

||||

input_chunks: Some(Input {

|

||||

chunks: input_chunks,

|

||||

}),

|

||||

|

|

|

|||

|

|

@ -222,6 +222,7 @@ impl Health for ShardedClient {

|

|||

chunks: vec![Chunk::Text("liveness".into()).into()],

|

||||

}),

|

||||

truncate: 10,

|

||||

add_special_tokens: true,

|

||||

prefill_logprobs: false,

|

||||

parameters: Some(NextTokenChooserParameters {

|

||||

temperature: 1.0,

|

||||

|

|

|

|||

|

|

@ -252,17 +252,14 @@ impl State {

|

|||

let next_batch_span = info_span!(parent: None, "batch", batch_size = tracing::field::Empty);

|

||||

next_batch_span.follows_from(Span::current());

|

||||

|

||||

let mut batch_requests = Vec::with_capacity(self.entries.len());

|

||||

let mut batch_entries =

|

||||

IntMap::with_capacity_and_hasher(self.entries.len(), BuildNoHashHasher::default());

|

||||

|

||||

let mut batch = Vec::with_capacity(self.entries.len());

|

||||

let mut max_input_length = 0;

|

||||

let mut prefill_tokens: u32 = 0;

|

||||

let mut decode_tokens: u32 = 0;

|

||||

let mut max_blocks = 0;

|

||||

|

||||

// Pop entries starting from the front of the queue

|

||||

'entry_loop: while let Some((id, mut entry)) = self.entries.pop_front() {

|

||||

'entry_loop: while let Some((id, entry)) = self.entries.pop_front() {

|

||||

// Filter entries where the response receiver was dropped (== entries where the request

|

||||

// was dropped by the client)

|

||||

if entry.response_tx.is_closed() {

|

||||

|

|

@ -276,7 +273,7 @@ impl State {

|

|||

// We pad to max input length in the Python shards

|

||||

// We need to take these padding tokens into the equation

|

||||

max_input_length = max_input_length.max(entry.request.input_length);

|

||||

prefill_tokens = (batch_requests.len() + 1) as u32 * max_input_length;

|

||||

prefill_tokens = (batch.len() + 1) as u32 * max_input_length;

|

||||

|

||||

decode_tokens += entry.request.stopping_parameters.max_new_tokens;

|

||||

let total_tokens = prefill_tokens + decode_tokens + self.speculate;

|

||||

|

|

@ -290,7 +287,7 @@ impl State {

|

|||

}

|

||||

None

|

||||

}

|

||||

Some(block_allocator) => {

|

||||

Some(_block_allocator) => {

|

||||

prefill_tokens += entry.request.input_length;

|

||||

let max_new_tokens = match self.window_size {

|

||||

None => entry.request.stopping_parameters.max_new_tokens,

|

||||

|

|

@ -316,16 +313,57 @@ impl State {

|

|||

+ self.speculate

|

||||

- 1;

|

||||

|

||||

match block_allocator

|

||||

.allocate(tokens, entry.request.input_ids.clone())

|

||||

.await

|

||||

// If the user wants the prefill logprobs, we cannot reuse the cache.

|

||||

// So no input_ids for the radix tree.

|

||||

let input_ids = if entry.request.decoder_input_details {

|

||||

None

|

||||

} else {

|

||||

entry.request.input_ids.clone()

|

||||

};

|

||||

|

||||

Some((tokens, input_ids))

|

||||

}

|

||||

};

|

||||

batch.push((id, entry, block_allocation));

|

||||

if Some(batch.len()) == max_size {

|

||||

break;

|

||||

}

|

||||

}

|

||||

|

||||

// Empty batch

|

||||

if batch.is_empty() {

|

||||

tracing::debug!("Filterered out all entries");

|

||||

return None;

|

||||

}

|

||||

|

||||

// XXX We haven't allocated yet, so we're allowed to ditch the results.

|

||||

// Check if our batch is big enough

|

||||

if let Some(min_size) = min_size {

|

||||

// Batch is too small

|

||||

if batch.len() < min_size {

|

||||

// Add back entries to the queue in the correct order

|

||||

for (id, entry, _) in batch.into_iter().rev() {

|

||||

self.entries.push_front((id, entry));

|

||||

}

|

||||

return None;

|

||||

}

|

||||

}

|

||||

|

||||

let mut batch_requests = Vec::with_capacity(self.entries.len());

|

||||

let mut batch_entries =

|

||||

IntMap::with_capacity_and_hasher(self.entries.len(), BuildNoHashHasher::default());

|

||||

|

||||

for (id, mut entry, block_allocation) in batch {

|

||||

let block_allocation = if let (Some((tokens, input_ids)), Some(block_allocator)) =

|

||||

(block_allocation, &self.block_allocator)

|

||||

{

|

||||

match block_allocator.allocate(tokens, input_ids).await {

|

||||

None => {

|

||||

// Entry is over budget

|

||||

// Add it back to the front

|

||||

tracing::debug!("Over budget: not enough free blocks");

|

||||

self.entries.push_front((id, entry));

|

||||

break 'entry_loop;

|

||||

break;

|

||||

}

|

||||

Some(block_allocation) => {

|

||||

tracing::debug!("Allocation: {block_allocation:?}");

|

||||

|

|

@ -333,9 +371,9 @@ impl State {

|

|||

Some(block_allocation)

|

||||

}

|

||||

}

|

||||

}

|

||||

} else {

|

||||

None

|

||||

};

|

||||

|

||||

tracing::debug!("Accepting entry");

|

||||

// Create a new span to link the batch back to this entry

|

||||

let entry_batch_span = info_span!(parent: &entry.span, "infer");

|

||||

|

|

@ -378,6 +416,7 @@ impl State {

|

|||

}),

|

||||

inputs: entry.request.inputs.chunks_to_string(),

|

||||

truncate: entry.request.truncate,

|

||||

add_special_tokens: entry.request.add_special_tokens,

|

||||

parameters: Some(NextTokenChooserParameters::from(

|

||||

entry.request.parameters.clone(),

|

||||

)),

|

||||

|

|

@ -394,32 +433,6 @@ impl State {

|

|||

entry.batch_time = Some(Instant::now());

|

||||

// Insert in batch_entries IntMap

|

||||

batch_entries.insert(id, entry);

|

||||

|

||||

// Check if max_size

|

||||

if Some(batch_requests.len()) == max_size {

|

||||

break;

|

||||

}

|

||||

}

|

||||

|

||||

// Empty batch

|

||||

if batch_requests.is_empty() {

|

||||

tracing::debug!("Filterered out all entries");

|

||||

return None;

|

||||

}

|

||||

|

||||

// Check if our batch is big enough

|

||||

if let Some(min_size) = min_size {

|

||||

// Batch is too small

|

||||

if batch_requests.len() < min_size {

|

||||

// Add back entries to the queue in the correct order

|

||||

for r in batch_requests.into_iter().rev() {

|

||||

let id = r.id;

|

||||

let entry = batch_entries.remove(&id).unwrap();

|

||||

self.entries.push_front((id, entry));

|

||||

}

|

||||

|

||||

return None;

|

||||

}

|

||||

}

|

||||

|

||||

// Final batch size
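The reordering in the hunks above collects candidate entries first and only allocates blocks once the batch is known to be large enough, so a too-small batch can be handed back to the queue without any allocation to undo. A simplified sketch of the "collect, check, re-queue in order" part is shown below. It uses plain `u64` ids instead of real entries, and the function name `take_batch` is hypothetical.

```rust
use std::collections::VecDeque;

/// Pops up to `max_size` ids from the front of `queue`.
/// If fewer than `min_size` were collected, they are pushed back in their
/// original order and `None` is returned.
pub fn take_batch(
    queue: &mut VecDeque<u64>,
    min_size: Option<usize>,
    max_size: Option<usize>,
) -> Option<Vec<u64>> {
    let mut batch = Vec::new();
    while let Some(id) = queue.pop_front() {
        batch.push(id);
        if Some(batch.len()) == max_size {
            break;
        }
    }

    // Empty batch: nothing to do.
    if batch.is_empty() {
        return None;
    }

    if let Some(min_size) = min_size {
        if batch.len() < min_size {
            // Iterate in reverse so that push_front restores the original order.
            for id in batch.into_iter().rev() {
                queue.push_front(id);
            }
            return None;
        }
    }
    Some(batch)
}
```

Because nothing has been allocated at this point, returning early only has to restore the queue, which is what the `XXX` comment in the diff relies on.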

|

||||

|

|

@ -512,6 +525,7 @@ mod tests {

|

|||

inputs: vec![],

|

||||

input_ids: Some(Arc::new(vec![])),

|

||||

input_length: 0,

|

||||

add_special_tokens: true,

|

||||

truncate: 0,

|

||||

decoder_input_details: false,

|

||||

parameters: ValidParameters {

|

||||

|

|

|

|||

|

|

@ -1,12 +1,10 @@

|

|||

use crate::block_allocator::{Allocator, BlockAllocation};

|

||||

use slotmap::{DefaultKey, SlotMap};

|

||||

use std::{

|

||||

collections::{BTreeSet, HashMap},

|

||||

sync::Arc,

|

||||

};

|

||||

|

||||

use slotmap::{DefaultKey, SlotMap};

|

||||

|

||||

use crate::block_allocator::{Allocator, BlockAllocation};

|

||||

|

||||

pub struct RadixAllocator {

|

||||

allocation_id: u64,

|

||||

|

||||

|

|

@ -16,26 +14,26 @@ pub struct RadixAllocator {

|

|||

|

||||

/// Blocks that are immediately available for allocation.

|

||||

free_blocks: Vec<u32>,

|

||||

|

||||

#[allow(dead_code)]

|

||||

// This isn't used because the prefix needs to match without the windowing

|

||||

// mechanism. This at worst is overallocating, not necessarily being wrong.

|

||||

window_size: Option<u32>,

|

||||

|

||||

block_size: u32,

|

||||

}

|

||||

|

||||

impl RadixAllocator {

|

||||

pub fn new(block_size: u32, n_blocks: u32, window_size: Option<u32>) -> Self {

|

||||

assert_eq!(

|

||||

block_size, 1,

|

||||

"Radix tree allocator only works with block_size=1, was: {}",

|

||||

block_size

|

||||

);

|

||||

if window_size.is_some() {

|

||||

unimplemented!("Window size not supported in the prefix-caching block allocator yet");

|

||||

}

|

||||

|

||||

RadixAllocator {

|

||||

allocation_id: 0,

|

||||

allocations: HashMap::new(),

|

||||

cache_blocks: RadixTrie::new(),

|

||||

cache_blocks: RadixTrie::new(block_size as usize),

|

||||

|

||||

// Block 0 is reserved for health checks.

|

||||

free_blocks: (1..n_blocks).collect(),

|

||||

window_size,

|

||||

block_size,

|

||||

}

|

||||

}

|

||||

|

||||

|

|

@ -63,6 +61,7 @@ impl RadixAllocator {

|

|||

}

|

||||

}

|

||||

|

||||

// Allocator trait

|

||||

impl Allocator for RadixAllocator {

|

||||

fn allocate(

|

||||

&mut self,

|

||||

|

|

@ -74,22 +73,25 @@ impl Allocator for RadixAllocator {

|

|||

let node_id = self

|

||||

.cache_blocks

|

||||

.find(prefill_tokens.as_slice(), &mut blocks);

|

||||

// Even if this allocation fails below, we need to increase the

|

||||

// refcount to ensure that the prefix that was found is not evicted.

|

||||

|

||||

node_id

|

||||

} else {

|

||||

self.cache_blocks.root_id()

|

||||

};

|

||||

|

||||

// Even if this allocation fails below, we need to increase the

|

||||

// refcount to ensure that the prefix that was found is not evicted.

|

||||

self.cache_blocks

|

||||

.incref(prefix_node)

|

||||

.expect("Failed to increment refcount");

|

||||

|

||||

let prefix_len = blocks.len();

|

||||

let prefix_len = blocks.len() * self.block_size as usize;

|

||||

let suffix_len = tokens - prefix_len as u32;

|

||||

|

||||

match self.alloc_or_reclaim(suffix_len as usize) {

|

||||

let suffix_blocks = (suffix_len + self.block_size - 1) / self.block_size;

|

||||

|

||||

tracing::info!("Prefix {prefix_len} - Suffix {suffix_len}");

|

||||

|

||||

match self.alloc_or_reclaim(suffix_blocks as usize) {

|

||||

Some(suffix_blocks) => blocks.extend(suffix_blocks),

|

||||

None => {

|

||||

self.cache_blocks

|

||||

|

|

@ -100,7 +102,20 @@ impl Allocator for RadixAllocator {

|

|||

}

|

||||

|

||||

// 1:1 mapping of blocks and slots.

|

||||

let slots = blocks.clone();

|

||||

let slots = if self.block_size == 1 {

|

||||

blocks.clone()

|

||||

} else {

|

||||

let mut slots = Vec::with_capacity(blocks.len() * self.block_size as usize);

|

||||

'slots: for block_id in &blocks {

|

||||

for s in (block_id * self.block_size)..((block_id + 1) * self.block_size) {

|

||||

slots.push(s);

|

||||

if slots.len() as u32 == tokens {

|

||||

break 'slots;

|

||||

}

|

||||

}

|

||||

}

|

||||

slots

|

||||

};

|

||||

|

||||

let allocation = RadixAllocation {

|

||||

prefix_node,
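The block-to-slot expansion in the hunk above can be checked on its own: each block of size `block_size` covers the slot range `block_id * block_size .. (block_id + 1) * block_size`, and expansion stops once `tokens` slots have been produced. The sketch below extracts that logic into free functions; the names `blocks_to_slots` and `blocks_needed` are hypothetical, and the code is a restatement of the diff rather than the actual method.

```rust
/// Number of blocks needed to hold `tokens` tokens (ceiling division).
pub fn blocks_needed(tokens: u32, block_size: u32) -> u32 {
    (tokens + block_size - 1) / block_size
}

/// Expands block ids into slot ids, stopping after `tokens` slots.
pub fn blocks_to_slots(blocks: &[u32], block_size: u32, tokens: u32) -> Vec<u32> {
    if block_size == 1 {
        // 1:1 mapping of blocks and slots.
        return blocks.to_vec();
    }
    let mut slots = Vec::with_capacity(blocks.len() * block_size as usize);
    'slots: for &block_id in blocks {
        for s in (block_id * block_size)..((block_id + 1) * block_size) {
            slots.push(s);
            // The last block may be only partially used.
            if slots.len() as u32 == tokens {
                break 'slots;
            }
        }
    }
    slots
}
```

With `block_size = 2`, blocks `[8, 9, 10, 11]` and 7 tokens, this yields slots 16 through 22, matching the `allocator_block_size_non_aligned` test added in this commit.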

|

||||

|

|

@ -108,6 +123,8 @@ impl Allocator for RadixAllocator {

|

|||

prefill_tokens: prefill_tokens.clone(),

|

||||

};

|

||||

|

||||

tracing::debug!("Blocks {blocks:?}");

|

||||

|

||||

self.allocation_id += 1;

|

||||

self.allocations.insert(self.allocation_id, allocation);

|

||||

|

||||

|

|

@ -136,12 +153,17 @@ impl Allocator for RadixAllocator {

|

|||

// If there are prefill tokens that did not come from the cache,

|

||||

// add them to the cache.

|

||||

if prefill_tokens.len() > allocation.cached_prefix_len {

|

||||

let aligned =

|

||||

(prefill_tokens.len() / self.block_size as usize) * self.block_size as usize;

|

||||

if aligned > 0 {

|

||||

let prefix_len = self

|

||||

.cache_blocks

|

||||

.insert(prefill_tokens, &blocks[..prefill_tokens.len()])

|

||||

.insert(

|

||||

&prefill_tokens[..aligned],

|

||||

&blocks[..aligned / self.block_size as usize],

|

||||

)

|

||||

// Unwrap, failing is a programming error.

|

||||

.expect("Failed to store prefill tokens");

|

||||

|

||||

// We can have a prefill with the following structure:

|

||||

//

|

||||

// |---| From the prefix cache.

|

||||

|

|

@ -151,12 +173,18 @@ impl Allocator for RadixAllocator {

|

|||

// This means that while processing this request there was a

|

||||

// partially overlapping request that had A..=E in its

|

||||

// prefill. In this case we need to free the blocks D E.

|

||||

self.free_blocks

|

||||

.extend(&blocks[allocation.cached_prefix_len..prefix_len]);

|

||||

if prefix_len > allocation.cached_prefix_len {

|

||||

self.free_blocks.extend(

|

||||

&blocks[allocation.cached_prefix_len / self.block_size as usize

|

||||

..prefix_len / self.block_size as usize],

|

||||

);

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Free non-prefill blocks.

|

||||

self.free_blocks.extend(&blocks[prefill_tokens.len()..]);

|

||||

self.free_blocks

|

||||

.extend(&blocks[prefill_tokens.len() / self.block_size as usize..]);

|

||||

} else {

|

||||

self.free_blocks.extend(blocks);

|

||||

}

|

||||

|

|

@ -204,16 +232,14 @@ pub struct RadixTrie {

|

|||

/// Time as a monotonically increasing counter to avoid the system

|

||||

/// call that a real time lookup would require.

|

||||

time: u64,

|

||||

}

|

||||

impl Default for RadixTrie {

|

||||

fn default() -> Self {

|

||||

Self::new()

|

||||

}

|

||||

|

||||

/// All blocks need to be aligned with this

|

||||

block_size: usize,

|

||||

}

|

||||

|

||||

impl RadixTrie {

|

||||

/// Construct a new radix trie.

|

||||

pub fn new() -> Self {

|

||||

pub fn new(block_size: usize) -> Self {

|

||||

let root = TrieNode::new(vec![], vec![], 0, None);

|

||||

let mut nodes = SlotMap::new();

|

||||

let root = nodes.insert(root);

|

||||

|

|

@ -222,13 +248,14 @@ impl RadixTrie {

|

|||

nodes,

|

||||

root,

|

||||

time: 0,

|

||||

block_size,

|

||||

}

|

||||

}

|

||||

|

||||

/// Find the prefix of the given tokens.

|

||||

///

|

||||

/// The blocks corresponding to the part of the prefix that could be found

|

||||

/// are writteng to `blocks`. The number of blocks is in `0..=tokens.len()`.

|

||||

/// are written to `blocks`. The number of blocks is in `0..=tokens.len()`.

|

||||

/// Returns the identifier of the trie node that contains the longest

|

||||

/// prefix. The node identifier can be used by callers to e.g. increase its

|

||||

/// reference count.

|

||||

|

|

@ -246,8 +273,9 @@ impl RadixTrie {

|

|||

if let Some(&child_id) = node.children.get(&key[0]) {

|

||||

self.update_access_time(child_id);

|

||||

let child = self.nodes.get(child_id).expect("Invalid child identifier");

|

||||

let shared_prefix_len = child.key.shared_prefix_len(key);

|

||||

blocks.extend(&child.blocks[..shared_prefix_len]);

|

||||

let shared_prefix_len = shared_prefix(&child.key, key, self.block_size);

|

||||

assert_eq!(shared_prefix_len % self.block_size, 0);

|

||||

blocks.extend(&child.blocks[..shared_prefix_len / self.block_size]);

|

||||

|

||||

let key = &key[shared_prefix_len..];

|

||||

if !key.is_empty() {

|

||||

|

|

@ -276,6 +304,11 @@ impl RadixTrie {

|

|||

|

||||

node.ref_count -= 1;

|

||||

if node.ref_count == 0 {

|

||||

assert!(

|

||||

node.children.is_empty(),

|

||||

"Nodes with children must have refcount > 0"

|

||||

);

|

||||

|

||||

self.leaves.insert((node.last_accessed, node_id));

|

||||

}

|

||||

|

||||

|

|

@ -303,7 +336,7 @@ impl RadixTrie {

|

|||

/// Evict `n_blocks` from the trie.

|

||||

///

|

||||

/// Returns the evicted blocks. When the length is less than `n_blocks`,

|

||||

/// not enough blocks could beevicted.

|

||||

/// not enough blocks could be evicted.

|

||||

pub fn evict(&mut self, n_blocks: usize) -> Vec<u32> {

|

||||

// NOTE: we don't return Result here. If any of the unwrapping fails,

|

||||

// it's a programming error in the trie implementation, not a user

|

||||

|

|

@ -318,6 +351,12 @@ impl RadixTrie {

|

|||

let blocks_needed = n_blocks - evicted.len();

|

||||

|

||||

let node = self.nodes.get(node_id).expect("Leave does not exist");

|

||||

assert_eq!(

|

||||

node.ref_count, 0,

|

||||

"Leaf must have refcount of 0, got {}",

|

||||

node.ref_count

|

||||

);

|

||||

|

||||

if blocks_needed >= node.blocks.len() {

|

||||

// We need to evict the whole node if we need more blocks than it has.

|

||||

let node = self.remove_node(node_id);

|

||||

|

|

@ -348,7 +387,8 @@ impl RadixTrie {

|

|||

/// the first 10 elements of the tree **the blocks are not updated**.

|

||||

pub fn insert(&mut self, tokens: &[u32], blocks: &[u32]) -> Result<usize, TrieError> {

|

||||

self.time += 1;

|

||||

self.insert_(self.root, tokens, blocks)

|

||||

let common = self.insert_(self.root, tokens, blocks)?;

|

||||

Ok(common)

|

||||

}

|

||||

|

||||

/// Insertion worker.

|

||||

|

|

@ -362,7 +402,7 @@ impl RadixTrie {

|

|||

// the part of the prefix that is already in the trie to detect

|

||||

// mismatches.

|

||||

|

||||

if tokens.len() != blocks.len() {

|

||||

if tokens.len() != blocks.len() * self.block_size {

|

||||

return Err(TrieError::BlockTokenCountMismatch);

|

||||

}

|

||||

|

||||

|

|

@ -373,10 +413,10 @@ impl RadixTrie {

|

|||

.get_mut(child_id)

|

||||

// Unwrap here, since failure is a bug.

|

||||

.expect("Child node does not exist");

|

||||

let shared_prefix_len = child.key.shared_prefix_len(tokens);

|

||||

let shared_prefix_len = shared_prefix(&child.key, tokens, self.block_size);

|

||||

|

||||

// We are done, the prefix is already in the trie.

|

||||

if shared_prefix_len == tokens.len() {

|

||||

if shared_prefix_len == tokens.len() || shared_prefix_len == 0 {

|

||||

return Ok(shared_prefix_len);

|

||||

}

|

||||

|

||||

|

|

@ -386,7 +426,7 @@ impl RadixTrie {

|

|||

+ self.insert_(

|

||||

child_id,

|

||||

&tokens[shared_prefix_len..],

|

||||

&blocks[shared_prefix_len..],

|

||||

&blocks[shared_prefix_len / self.block_size..],

|

||||

)?);

|

||||

}

|

||||

|

||||

|

|

@ -395,7 +435,7 @@ impl RadixTrie {

|

|||

// remainder of the prefix into the node again

|

||||

let child_id = self.split_node(child_id, shared_prefix_len);

|

||||

let key = &tokens[shared_prefix_len..];

|

||||

let blocks = &blocks[shared_prefix_len..];

|

||||

let blocks = &blocks[shared_prefix_len / self.block_size..];

|

||||

Ok(shared_prefix_len + self.insert_(child_id, key, blocks)?)

|

||||

} else {

|

||||

self.add_node(node_id, tokens, blocks);

|

||||

|

|

@ -472,12 +512,16 @@ impl RadixTrie {

|

|||

fn remove_node(&mut self, node_id: NodeId) -> TrieNode {

|

||||

// Unwrap here, passing in an unknown id is a programming error.

|

||||

let node = self.nodes.remove(node_id).expect("Unknown node");

|

||||

assert!(

|

||||

node.children.is_empty(),

|

||||

"Tried to remove a node with {} children",

|

||||

node.children.len()

|

||||

);

|

||||

let parent_id = node.parent.expect("Attempted to remove root node");

|

||||

let parent = self.nodes.get_mut(parent_id).expect("Unknown parent node");

|

||||

parent.children.remove(&node.key[0]);

|

||||

self.decref(parent_id)

|

||||

.expect("Failed to decrease parent refcount");

|

||||

self.nodes.remove(node_id);

|

||||

node

|

||||

}

|

||||

|

||||

|

|

@ -549,34 +593,56 @@ impl TrieNode {

|

|||

}

|

||||

}

|

||||

|

||||

/// Helper trait to get the length of the shared prefix of two sequences.

|

||||

trait SharedPrefixLen {

|

||||

fn shared_prefix_len(&self, other: &Self) -> usize;

|

||||

}

|

||||

|

||||

impl<T> SharedPrefixLen for [T]

|

||||

where

|

||||

T: PartialEq,

|

||||

{

|

||||

fn shared_prefix_len(&self, other: &Self) -> usize {

|

||||

self.iter().zip(other).take_while(|(a, b)| a == b).count()

|

||||

}

|

||||

fn shared_prefix(left: &[u32], right: &[u32], block_size: usize) -> usize {

|

||||

let full = left.iter().zip(right).take_while(|(a, b)| a == b).count();

|

||||

// NOTE: this is the case because the child node was chosen based on

|

||||

// matching the first character of the key/prefix.

|

||||

assert!(full > 0, "Prefixes must at least share 1 token");

|

||||

(full / block_size) * block_size

|

||||

}

|

||||

|

||||

#[cfg(test)]

|

||||

mod tests {

|

||||

use std::sync::Arc;

|

||||

|

||||

use crate::block_allocator::Allocator;

|

||||

use super::*;

|

||||

|

||||

use super::RadixAllocator;

|

||||

#[test]

|

||||

fn allocator_block_size() {

|

||||

let mut cache = RadixAllocator::new(2, 12, None);

|

||||

let allocation = cache.allocate(8, Some(Arc::new(vec![0, 1, 2, 3]))).unwrap();

|

||||

assert_eq!(allocation.blocks, vec![8, 9, 10, 11]);

|

||||

assert_eq!(allocation.slots, vec![16, 17, 18, 19, 20, 21, 22, 23]);

|

||||

assert_eq!(allocation.prefix_len, 0);

|

||||

cache.free(allocation.blocks.clone(), allocation.allocation_id);

|

||||

|

||||

let allocation = cache.allocate(8, Some(Arc::new(vec![0, 1, 2, 3]))).unwrap();

|

||||

assert_eq!(allocation.blocks, vec![8, 9, 10, 11]);

|

||||

assert_eq!(allocation.slots, vec![16, 17, 18, 19, 20, 21, 22, 23]);

|

||||

assert_eq!(allocation.prefix_len, 4);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn allocator_block_size_non_aligned() {

|

||||

let mut cache = RadixAllocator::new(2, 12, None);

|

||||

let allocation = cache.allocate(7, Some(Arc::new(vec![0, 1, 2]))).unwrap();

|

||||

assert_eq!(allocation.blocks, vec![8, 9, 10, 11]);

|

||||

assert_eq!(allocation.slots, vec![16, 17, 18, 19, 20, 21, 22]);

|

||||

assert_eq!(allocation.prefix_len, 0);

|

||||

cache.free(allocation.blocks.clone(), allocation.allocation_id);

|

||||

|

||||

let allocation = cache.allocate(7, Some(Arc::new(vec![0, 1, 2]))).unwrap();

|

||||

assert_eq!(allocation.blocks, vec![8, 9, 10, 11]);

|

||||

assert_eq!(allocation.slots, vec![16, 17, 18, 19, 20, 21, 22]);

|

||||

assert_eq!(allocation.prefix_len, 2);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn allocator_reuses_prefixes() {

|

||||

let mut cache = RadixAllocator::new(1, 12, None);

|

||||

let allocation = cache.allocate(8, Some(Arc::new(vec![0, 1, 2, 3]))).unwrap();

|

||||

assert_eq!(allocation.blocks, vec![4, 5, 6, 7, 8, 9, 10, 11]);

|

||||

assert_eq!(allocation.slots, allocation.slots);

|

||||

assert_eq!(allocation.blocks, allocation.slots);

|

||||

assert_eq!(allocation.prefix_len, 0);

|

||||

cache.free(allocation.blocks.clone(), allocation.allocation_id);

|

||||

|

||||

|

|

@ -665,7 +731,7 @@ mod tests {

|

|||

|

||||

#[test]

|

||||

fn trie_insertions_have_correct_prefix_len() {

|

||||

let mut trie = super::RadixTrie::new();

|

||||

let mut trie = RadixTrie::new(1);

|

||||

|

||||

assert_eq!(trie.insert(&[0, 1, 2], &[0, 1, 2]).unwrap(), 0);

|

||||

|

||||

|

|

@ -686,9 +752,33 @@ mod tests {

|

|||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn trie_insertions_block_size() {

|

||||

let mut trie = RadixTrie::new(2);

|

||||

|

||||

assert_eq!(trie.insert(&[0, 1, 2, 3], &[0, 1]).unwrap(), 0);

|

||||

|

||||

// Already exists.

|

||||

// But needs to be block_size aligned

|

||||

assert_eq!(trie.insert(&[0, 1, 2, 3], &[0, 1]).unwrap(), 4);

|

||||

|

||||

// Completely new at root-level

|

||||

assert_eq!(trie.insert(&[1, 2, 3, 4], &[1, 2]).unwrap(), 0);

|

||||

|

||||

// Contains full prefix, but longer.

|

||||

assert_eq!(trie.insert(&[0, 1, 2, 3, 4, 5], &[0, 1, 2]).unwrap(), 4);

|

||||

|

||||

// Shares partial prefix, we need a split.

|

||||

assert_eq!(

|

||||

trie.insert(&[0, 1, 3, 4, 5, 6, 7, 8], &[0, 1, 2, 3])

|

||||

.unwrap(),

|

||||

2

|

||||

);

|

||||

}
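Reviewer note: the expected values in `trie_insertions_block_size` are consistent with "length of the shared prefix, rounded down to a multiple of the block size". The sketch below checks that reading against a single cached sequence. It is a simplification under that assumption, not the trie's actual traversal, and `aligned_prefix_len` is a hypothetical name.

```python
def aligned_prefix_len(cached, tokens, block_size):
    """Length of the prefix shared by `cached` and `tokens`,
    rounded down to a multiple of block_size."""
    shared = 0
    for a, b in zip(cached, tokens):
        if a != b:
            break
        shared += 1
    return (shared // block_size) * block_size


cached = [0, 1, 2, 3]
print(aligned_prefix_len(cached, [0, 1, 2, 3], 2))              # same key
print(aligned_prefix_len(cached, [0, 1, 2, 3, 4, 5], 2))        # longer key
print(aligned_prefix_len(cached, [0, 1, 3, 4, 5, 6, 7, 8], 2))  # partial match
```

The same rounding would explain why `allocator_block_size_non_aligned` sees `prefix_len == 2` for a three-token prefix with a block size of 2.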

|

||||

|

||||

#[test]

|

||||

fn trie_get_returns_correct_blocks() {

|

||||

let mut trie = super::RadixTrie::new();

|

||||

let mut trie = RadixTrie::new(1);

|

||||

trie.insert(&[0, 1, 2], &[0, 1, 2]).unwrap();

|

||||

trie.insert(&[1, 2, 3], &[1, 2, 3]).unwrap();

|

||||

trie.insert(&[0, 1, 2, 3, 4], &[0, 1, 2, 3, 4]).unwrap();

|

||||

|

|

@ -722,7 +812,7 @@ mod tests {

|

|||

|

||||

#[test]

|

||||

fn trie_evict_removes_correct_blocks() {

|

||||

let mut trie = super::RadixTrie::new();

|

||||

let mut trie = RadixTrie::new(1);

|

||||

trie.insert(&[0, 1, 2], &[0, 1, 2]).unwrap();

|

||||

trie.insert(&[0, 1, 2, 3, 5, 6, 7], &[0, 1, 2, 3, 5, 6, 7])

|

||||

.unwrap();

|

||||

|

|

|

|||

|

|

@ -148,6 +148,7 @@ async fn prefill(

|

|||

}),

|

||||

inputs: sequence.clone(),

|

||||

truncate: sequence_length,

|

||||

add_special_tokens: true,

|

||||

parameters: Some(parameters.clone()),

|

||||

stopping_parameters: Some(StoppingCriteriaParameters {

|

||||

max_new_tokens: decode_length,

|

||||

|

|

|

|||

|

|

@ -757,7 +757,12 @@ class AsyncClient:

|

|||

continue

|

||||

payload = byte_payload.decode("utf-8")

|

||||

if payload.startswith("data:"):

|

||||

json_payload = json.loads(payload.lstrip("data:").rstrip("\n"))

|

||||

payload_data = (

|

||||

payload.lstrip("data:").rstrip("\n").removeprefix(" ")

|

||||

)

|

||||

if payload_data == "[DONE]":

|

||||

break

|

||||

json_payload = json.loads(payload_data)

|

||||

try:

|

||||

response = ChatCompletionChunk(**json_payload)

|

||||

yield response
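Reviewer note: the change above makes the streaming loop stop on the `[DONE]` sentinel instead of passing it to `json.loads`. A standalone sketch of the same idea follows, assuming Python 3.9+ for `str.removeprefix`. The function name `iter_sse_json` is hypothetical and this is not the client's implementation.

```python
import json


def iter_sse_json(lines):
    """Yield decoded JSON payloads from SSE `data:` lines until `[DONE]`."""
    for raw in lines:
        if raw == b"\n":
            continue  # blank separator line between events
        payload = raw.decode("utf-8")
        if not payload.startswith("data:"):
            continue  # ignore non-data lines in this sketch
        # removeprefix drops the exact literal "data:"; strip() then removes
        # the optional leading space and the trailing newline.
        data = payload.removeprefix("data:").strip()
        if data == "[DONE]":
            break  # end-of-stream sentinel, not JSON
        yield json.loads(data)


stream = [b'data: {"a": 1}\n', b"\n", b"data: [DONE]\n", b'data: {"a": 2}\n']
print(list(iter_sse_json(stream)))
```

One design difference worth noting: `str.lstrip("data:")` removes any leading characters from the set `d`, `a`, `t`, `:` rather than the literal prefix, which is why the sketch uses `removeprefix` instead.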

|

||||

|

|

|

|||

|

|

@ -556,6 +556,37 @@

|

|||

}

|

||||

}

|

||||

}

|

||||

},

|

||||

"/v1/models": {

|

||||

"get": {

|

||||

"tags": [

|

||||

"Text Generation Inference"

|

||||

],

|

||||

"summary": "Get model info",

|

||||

"operationId": "openai_get_model_info",

|

||||

"responses": {

|

||||

"200": {

|

||||

"description": "Served model info",

|

||||

"content": {

|

||||

"application/json": {

|

||||

"schema": {

|

||||

"$ref": "#/components/schemas/ModelInfo"

|

||||

}

|

||||

}

|

||||

}

|

||||

},

|

||||

"404": {

|

||||

"description": "Model not found",

|

||||

"content": {

|

||||

"application/json": {

|

||||

"schema": {

|

||||

"$ref": "#/components/schemas/ErrorResponse"

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

},

|

||||

"components": {

|

||||

|

|

@ -924,7 +955,7 @@

|

|||

"tool_prompt": {

|

||||

"type": "string",

|

||||

"description": "A prompt to be appended before the tools",

|

||||

"example": "\"You will be presented with a JSON schema representing a set of tools.\nIf the user request lacks of sufficient information to make a precise tool selection: Do not invent any tool's properties, instead notify with an error message.\n\nJSON Schema:\n\"",

|

||||

"example": "Given the functions available, please respond with a JSON for a function call with its proper arguments that best answers the given prompt. Respond in the format {name: function name, parameters: dictionary of argument name and its value}.Do not use variables.",

|

||||

"nullable": true

|

||||

},

|

||||

"tools": {

|

||||

|

|

@ -1747,6 +1778,35 @@

|

|||

}

|

||||

]

|

||||

},

|

||||

"ModelInfo": {

|

||||

"type": "object",

|

||||

"required": [

|

||||

"id",

|

||||

"object",

|

||||

"created",

|

||||

"owned_by"

|

||||

],

|

||||

"properties": {

|

||||

"created": {

|

||||

"type": "integer",

|

||||

"format": "int64",

|

||||

"example": 1686935002,

|

||||

"minimum": 0

|

||||

},

|

||||

"id": {

|

||||

"type": "string",

|

||||

"example": "gpt2"

|

||||

},

|

||||

"object": {

|

||||

"type": "string",

|

||||

"example": "model"

|

||||

},

|

||||

"owned_by": {

|

||||

"type": "string",

|

||||

"example": "openai"

|

||||

}

|

||||

}

|

||||

},
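Reviewer note: the `ModelInfo` schema above requires `id`, `object`, `created` and `owned_by`, with `created` a non-negative integer. A small client-side check could look like the sketch below. The helper `validate_model_info` is hypothetical, and the sample payload simply reuses the `example` values from the schema.

```python
REQUIRED_FIELDS = {"id": str, "object": str, "created": int, "owned_by": str}


def validate_model_info(payload):
    """Return a list of problems found in a ModelInfo-shaped dict (empty if none)."""
    problems = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
            continue
        value = payload[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, expected):
            problems.append(f"wrong type for {name}: expected {expected.__name__}")
    created = payload.get("created")
    if isinstance(created, int) and not isinstance(created, bool) and created < 0:
        problems.append("created must be >= 0")
    return problems


sample = {"id": "gpt2", "object": "model", "created": 1686935002, "owned_by": "openai"}
print(validate_model_info(sample))
```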

|

||||

"OutputMessage": {

|

||||

"oneOf": [

|

||||

{

|

||||

|

|

|

|||

|

|

@ -71,6 +71,8 @@

|

|||

title: How Guidance Works (via outlines)

|

||||

- local: conceptual/lora

|

||||

title: LoRA (Low-Rank Adaptation)

|

||||

- local: conceptual/external

|

||||

title: External Resources

|

||||

|

||||

|

||||

title: Conceptual Guides

|

||||

|

|

|

|||

|

|

@ -157,7 +157,12 @@ from huggingface_hub import InferenceClient

|

|||

|

||||

client = InferenceClient("http://localhost:3000")

|

||||

|

||||

regexp = "((25[0-5]|2[0-4]\\d|[01]?\\d\\d?)\\.){3}(25[0-5]|2[0-4]\\d|[01]?\\d\\d?)"

|

||||

section_regex = "(?:25[0-5]|2[0-4][0-9]|[01]?[0-9][0-9]?)"

|

||||

regexp = f"HELLO\.{section_regex}\.WORLD\.{section_regex}"

|

||||

|

||||

# This is a more realistic example of an ip address regex

|

||||

# regexp = f"{section_regex}\.{section_regex}\.{section_regex}\.{section_regex}"

|

||||

|

||||

|

||||

resp = client.text_generation(

|

||||

f"Whats Googles DNS? Please use the following regex: {regexp}",

|

||||

|

|

@ -170,7 +175,7 @@ resp = client.text_generation(

|

|||

|

||||

|

||||

print(resp)

|

||||

# 7.1.1.1

|

||||

# HELLO.255.WORLD.255

|

||||

|

||||

```
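Reviewer note: the pattern in this example can be checked locally before sending it to the server. The sketch below uses only the standard `re` module and raw strings, so the `\.` escapes are passed through unchanged. The helper `matches` is hypothetical and says nothing about how the server applies the grammar.

```python
import re

section_regex = r"(?:25[0-5]|2[0-4][0-9]|[01]?[0-9][0-9]?)"
regexp = rf"HELLO\.{section_regex}\.WORLD\.{section_regex}"
# The "more realistic" IP address variant from the commented-out line.
ip_regexp = r"\.".join([section_regex] * 4)


def matches(pattern, text):
    """True if the whole of `text` matches `pattern`."""
    return re.fullmatch(pattern, text) is not None


print(matches(regexp, "HELLO.255.WORLD.255"))
print(matches(ip_regexp, "8.8.8.8"))
print(matches(regexp, "HELLO.256.WORLD.1"))
```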

|

||||

|

||||

|

|

|

|||

|

|

@ -0,0 +1,4 @@

|

|||

# External Resources

|

||||

|

||||

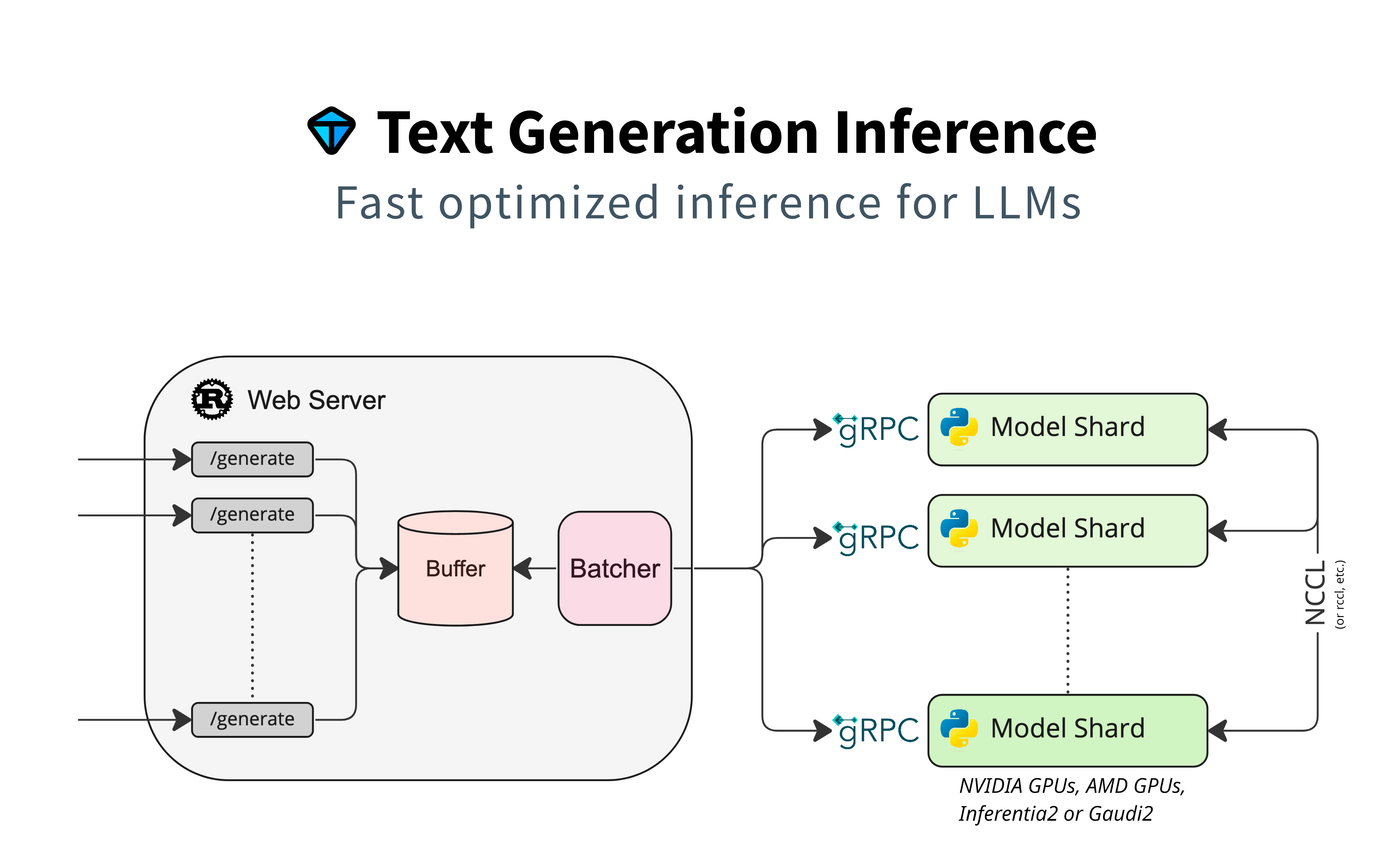

- Adyen wrote a detailed article about the interplay between TGI's main components: router and server.

|

||||

[LLM inference at scale with TGI (Martin Iglesias Goyanes - Adyen, 2024)](https://www.adyen.com/knowledge-hub/llm-inference-at-scale-with-tgi)

|

||||

|

|

@ -1,5 +1,6 @@

|

|||

# Streaming

|

||||

|

||||

|

||||

## What is Streaming?

|

||||

|

||||

Token streaming is the mode in which the server returns the tokens one by one as the model generates them. This enables showing progressive generations to the user rather than waiting for the whole generation. Streaming is an essential aspect of the end-user experience as it reduces latency, one of the most critical aspects of a smooth experience.

|

||||

|

|

|

|||

|

|

@ -12,7 +12,24 @@ volume=$PWD/data # share a volume with the Docker container to avoid downloading

|

|||

docker run --rm --privileged --cap-add=sys_nice \

|

||||

--device=/dev/dri \

|

||||

--ipc=host --shm-size 1g --net host -v $volume:/data \

|

||||

ghcr.io/huggingface/text-generation-inference:2.2.0-intel \

|

||||

ghcr.io/huggingface/text-generation-inference:2.2.0-intel-xpu \

|

||||

--model-id $model --cuda-graphs 0

|

||||

```

|

||||

|

||||

# Using TGI with Intel CPUs

|

||||

|

||||

Intel® Extension for PyTorch (IPEX) also provides further optimizations for Intel CPUs. IPEX provides optimized operations such as flash attention, paged attention, Add + LayerNorm, RoPE, and more.

|

||||

|

||||

On a server powered by Intel CPUs, TGI can be launched with the following command:

|

||||

|

||||

```bash

|

||||

model=teknium/OpenHermes-2.5-Mistral-7B

|

||||

volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

|

||||

|

||||

docker run --rm --privileged --cap-add=sys_nice \

|

||||

--device=/dev/dri \

|

||||

--ipc=host --shm-size 1g --net host -v $volume:/data \

|

||||

ghcr.io/huggingface/text-generation-inference:2.2.0-intel-cpu \

|

||||

--model-id $model --cuda-graphs 0

|

||||

```

|

||||

|

||||

|

|

|

|||

154

flake.lock


|

|

@ -492,24 +492,6 @@

|

|||

"type": "github"

|

||||

}

|

||||

},

|

||||

"flake-utils_7": {

|

||||

"inputs": {

|

||||

"systems": "systems_7"

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1710146030,

|

||||

"narHash": "sha256-SZ5L6eA7HJ/nmkzGG7/ISclqe6oZdOZTNoesiInkXPQ=",

|

||||

"owner": "numtide",

|

||||

"repo": "flake-utils",

|

||||

"rev": "b1d9ab70662946ef0850d488da1c9019f3a9752a",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "numtide",

|

||||

"repo": "flake-utils",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"gitignore": {

|

||||

"inputs": {

|

||||

"nixpkgs": [

|

||||

|

|

@ -594,27 +576,6 @@

|

|||

"type": "github"

|

||||

}

|

||||

},

|

||||

"nix-github-actions": {

|

||||

"inputs": {

|

||||

"nixpkgs": [

|

||||

"poetry2nix",

|

||||

"nixpkgs"

|

||||

]

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1703863825,

|

||||

"narHash": "sha256-rXwqjtwiGKJheXB43ybM8NwWB8rO2dSRrEqes0S7F5Y=",

|

||||

"owner": "nix-community",

|

||||

"repo": "nix-github-actions",

|

||||

"rev": "5163432afc817cf8bd1f031418d1869e4c9d5547",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "nix-community",

|

||||

"repo": "nix-github-actions",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"nix-test-runner": {

|

||||

"flake": false,

|

||||

"locked": {

|

||||

|

|

@ -739,58 +700,20 @@

|

|||

},

|

||||

"nixpkgs_6": {

|

||||

"locked": {

|

||||

"lastModified": 1719763542,

|

||||

"narHash": "sha256-mXkOj9sJ0f69Nkc2dGGOWtof9d1YNY8Le/Hia3RN+8Q=",

|

||||

"owner": "NixOS",

|

||||

"lastModified": 1723912943,

|

||||

"narHash": "sha256-39F9GzyhxYcY3wTeKuEFWRJWcrGBosO4nf4xzMTWZX8=",

|

||||

"owner": "danieldk",

|

||||

"repo": "nixpkgs",

|

||||

"rev": "e6cdd8a11b26b4d60593733106042141756b54a3",

|

||||

"rev": "b82cdca86dbb30013b76c4b55d48806476820a5c",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "NixOS",

|

||||

"ref": "nixos-unstable-small",

|

||||

"owner": "danieldk",

|

||||

"ref": "cuda-12.4",

|

||||

"repo": "nixpkgs",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"nixpkgs_7": {

|

||||

"locked": {

|

||||

"lastModified": 1723418128,

|

||||

"narHash": "sha256-k1pEqsnB6ikZyasXbtV6A9akPZMKlsyENPDUA6PXoJo=",

|

||||

"owner": "nixos",

|

||||

"repo": "nixpkgs",

|

||||

"rev": "129f579cbb5b4c1ad258fd96bdfb78eb14802727",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "nixos",

|

||||

"ref": "nixos-unstable-small",

|

||||

"repo": "nixpkgs",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"poetry2nix": {

|

||||

"inputs": {

|

||||

"flake-utils": "flake-utils_7",

|

||||

"nix-github-actions": "nix-github-actions",

|

||||

"nixpkgs": "nixpkgs_6",

|

||||

"systems": "systems_8",

|

||||

"treefmt-nix": "treefmt-nix"

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1723512448,

|

||||

"narHash": "sha256-VSTtxGKre1p6zd6ACuBmfDcR+BT9+ml8Y3KrSbfGFYU=",

|

||||

"owner": "nix-community",

|

||||

"repo": "poetry2nix",

|

||||

"rev": "ed52f844c4dd04dde45550c3189529854384124e",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "nix-community",

|

||||

"repo": "poetry2nix",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"pre-commit-hooks": {

|

||||

"inputs": {

|

||||

"flake-compat": [

|

||||

|

|

@ -900,7 +823,6 @@

|

|||

"tgi-nix",

|

||||

"nixpkgs"

|

||||

],

|

||||

"poetry2nix": "poetry2nix",

|

||||

"rust-overlay": "rust-overlay",

|

||||

"tgi-nix": "tgi-nix"

|

||||

}

|

||||

|

|

@ -913,11 +835,11 @@

|

|||

]

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1723515680,

|

||||

"narHash": "sha256-nHdKymsHCVIh0Wdm4MvSgxcTTg34FJIYHRQkQYaSuvk=",

|

||||

"lastModified": 1724638882,

|

||||

"narHash": "sha256-ap2jIQi/FuUHR6HCht6ASWhoz8EiB99XmI8Esot38VE=",

|

||||

"owner": "oxalica",

|

||||

"repo": "rust-overlay",

|

||||

"rev": "4ee3d9e9569f70d7bb40f28804d6fe950c81eab3",

|

||||

"rev": "19b70f147b9c67a759e35824b241f1ed92e46694",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

|

|

@ -1016,46 +938,17 @@

|

|||

"type": "github"

|

||||

}

|

||||

},

|

||||

"systems_7": {

|

||||

"locked": {

|

||||

"lastModified": 1681028828,

|

||||

"narHash": "sha256-Vy1rq5AaRuLzOxct8nz4T6wlgyUR7zLU309k9mBC768=",

|

||||

"owner": "nix-systems",

|

||||

"repo": "default",

|

||||

"rev": "da67096a3b9bf56a91d16901293e51ba5b49a27e",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "nix-systems",

|

||||

"repo": "default",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"systems_8": {

|

||||

"locked": {

|

||||

"lastModified": 1681028828,

|

||||

"narHash": "sha256-Vy1rq5AaRuLzOxct8nz4T6wlgyUR7zLU309k9mBC768=",

|

||||

"owner": "nix-systems",

|

||||

"repo": "default",

|

||||

"rev": "da67096a3b9bf56a91d16901293e51ba5b49a27e",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"id": "systems",

|

||||

"type": "indirect"

|

||||

}

|

||||

},

|

||||

"tgi-nix": {

|

||||

"inputs": {

|

||||

"flake-compat": "flake-compat_4",

|

||||

"nixpkgs": "nixpkgs_7"

|

||||

"nixpkgs": "nixpkgs_6"

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1723532088,

|

||||

"narHash": "sha256-6h/P/BkFDw8txlikonKXp5IbluHSPhHJTQRftJLkbLQ=",

|

||||

"lastModified": 1725011596,

|

||||

"narHash": "sha256-zfq8lOXFgJnKxxsqSelHuKUvhxgH3cEmLoAgsOO62Cg=",

|

||||

"owner": "danieldk",

|

||||

"repo": "tgi-nix",

|

||||

"rev": "32335a37ce0f703bab901baf7b74eb11e9972d5f",

|

||||

"rev": "717c2b07e38538abf05237cca65b2d1363c2c9af",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

|

|

@ -1063,27 +956,6 @@

|

|||

"repo": "tgi-nix",

|

||||

"type": "github"

|

||||

}

|

||||

},

|

||||

"treefmt-nix": {

|

||||

"inputs": {

|

||||

"nixpkgs": [

|

||||

"poetry2nix",

|

||||

"nixpkgs"

|

||||

]

|

||||

},

|

||||

"locked": {

|

||||

"lastModified": 1719749022,

|

||||

"narHash": "sha256-ddPKHcqaKCIFSFc/cvxS14goUhCOAwsM1PbMr0ZtHMg=",

|

||||

"owner": "numtide",

|

||||

"repo": "treefmt-nix",

|

||||

"rev": "8df5ff62195d4e67e2264df0b7f5e8c9995fd0bd",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

"owner": "numtide",

|

||||

"repo": "treefmt-nix",

|

||||

"type": "github"

|

||||

}

|

||||

}

|

||||

},

|

||||

"root": "root",

|

||||

|

|

|

|||

96

flake.nix


|

|

@ -8,7 +8,6 @@

|

|||

tgi-nix.url = "github:danieldk/tgi-nix";

|

||||

nixpkgs.follows = "tgi-nix/nixpkgs";

|

||||

flake-utils.url = "github:numtide/flake-utils";

|

||||

poetry2nix.url = "github:nix-community/poetry2nix";

|

||||

rust-overlay = {

|

||||

url = "github:oxalica/rust-overlay";

|

||||

inputs.nixpkgs.follows = "tgi-nix/nixpkgs";

|

||||

|

|

@ -23,7 +22,6 @@

|

|||

flake-utils,

|

||||

rust-overlay,

|

||||

tgi-nix,

|

||||

poetry2nix,

|

||||

}:

|

||||

flake-utils.lib.eachDefaultSystem (

|

||||

system:

|

||||

|

|

@ -33,25 +31,40 @@

|

|||

src = ./.;

|

||||

additionalCargoNixArgs = [ "--all-features" ];

|

||||

};

|

||||

config = {

|

||||

allowUnfree = true;

|

||||

cudaSupport = true;

|

||||

};

|

||||

pkgs = import nixpkgs {

|

||||

inherit config system;

|

||||

inherit system;

|

||||

inherit (tgi-nix.lib) config;

|

||||

overlays = [

|

||||

rust-overlay.overlays.default

|

||||

tgi-nix.overlay

|

||||

tgi-nix.overlays.default

|

||||

];

|

||||

};

|

||||

inherit (poetry2nix.lib.mkPoetry2Nix { inherit pkgs; }) mkPoetryEditablePackage;

|

||||

text-generation-server = mkPoetryEditablePackage { editablePackageSources = ./server; };

|

||||

crateOverrides = import ./nix/crate-overrides.nix { inherit pkgs nix-filter; };

|

||||

benchmark = cargoNix.workspaceMembers.text-generation-benchmark.build.override {

|

||||

inherit crateOverrides;

|

||||

};

|

||||

launcher = cargoNix.workspaceMembers.text-generation-launcher.build.override {

|

||||

inherit crateOverrides;

|

||||

};

|

||||

router = cargoNix.workspaceMembers.text-generation-router-v3.build.override {

|

||||

inherit crateOverrides;

|

||||

};

|

||||

server = pkgs.python3.pkgs.callPackage ./nix/server.nix { inherit nix-filter; };

|

||||

in

|

||||

{

|

||||

devShells.default =

|

||||

with pkgs;

|

||||

mkShell {

|

||||

devShells = with pkgs; rec {

|

||||

default = pure;

|

||||

|

||||

pure = mkShell {

|

||||

buildInputs = [

|

||||

benchmark

|

||||

launcher

|

||||

router

|

||||

server

|

||||

];

|

||||

};

|

||||

|

||||

impure = mkShell {

|

||||

buildInputs =

|

||||

[

|

||||

openssl.dev

|

||||

|

|

@ -62,49 +75,44 @@

|

|||

"rust-src"

|

||||

];

|

||||

})

|

||||

protobuf

|

||||

]

|

||||

++ (with python3.pkgs; [

|

||||

venvShellHook

|

||||

docker

|

||||

pip

|

||||

|

||||

causal-conv1d

|

||||

click

|

||||

einops

|

||||

exllamav2

|

||||

fbgemm-gpu

|

||||

flashinfer

|

||||

flash-attn

|

||||

flash-attn-layer-norm

|

||||

flash-attn-rotary

|

||||

grpc-interceptor

|

||||

grpcio-reflection

|

||||

grpcio-status

|

||||

grpcio-tools

|

||||

hf-transfer

|

||||

loguru

|

||||

mamba-ssm

|

||||

marlin-kernels

|

||||

opentelemetry-api

|

||||

opentelemetry-exporter-otlp

|

||||

opentelemetry-instrumentation-grpc

|

||||

opentelemetry-semantic-conventions

|

||||

peft

|

||||

tokenizers

|

||||

torch

|

||||

transformers

|

||||

vllm

|

||||

|

||||

(cargoNix.workspaceMembers.text-generation-launcher.build.override { inherit crateOverrides; })

|

||||

(cargoNix.workspaceMembers.text-generation-router-v3.build.override { inherit crateOverrides; })

|

||||

ipdb

|

||||

pyright

|

||||

pytest

|

||||

pytest-asyncio

|

||||

ruff

|

||||

syrupy

|

||||

]);

|

||||

|

||||

inputsFrom = [ server ];

|

||||

|

||||

venvDir = "./.venv";

|

||||

|

||||

postVenv = ''

|

||||

postVenvCreation = ''

|

||||

unset SOURCE_DATE_EPOCH

|

||||

( cd server ; python -m pip install --no-dependencies -e . )

|

||||

( cd clients/python ; python -m pip install --no-dependencies -e . )

|

||||

'';

|

||||

postShellHook = ''

|

||||

unset SOURCE_DATE_EPOCH

|

||||

export PATH=$PATH:~/.cargo/bin

|

||||

'';

|

||||

};

|

||||

};

|

||||

|

||||

packages.default = pkgs.writeShellApplication {

|

||||

name = "text-generation-inference";

|

||||

runtimeInputs = [

|

||||

server

|

||||

router

|

||||

];

|

||||

text = ''

|

||||

${launcher}/bin/text-generation-launcher "$@"

|

||||

'';

|

||||

};

|

||||

}

|

||||

|

|

|

|||

|

|

@ -64,6 +64,7 @@ class ResponseComparator(JSONSnapshotExtension):

|

|||

self,

|

||||

data,

|

||||

*,

|

||||

include=None,

|

||||

exclude=None,

|

||||

matcher=None,

|

||||

):

|

||||

|

|

@ -79,7 +80,12 @@ class ResponseComparator(JSONSnapshotExtension):

|

|||

data = [d.model_dump() for d in data]

|

||||

|

||||

data = self._filter(

|

||||

data=data, depth=0, path=(), exclude=exclude, matcher=matcher

|

||||

data=data,

|

||||

depth=0,

|

||||

path=(),

|

||||

exclude=exclude,

|

||||

include=include,

|

||||

matcher=matcher,

|

||||

)

|

||||

return json.dumps(data, indent=2, ensure_ascii=False, sort_keys=False) + "\n"

|

||||

|

||||

|

|

@ -257,7 +263,7 @@ class IgnoreLogProbResponseComparator(ResponseComparator):

|

|||

|

||||

class LauncherHandle:

|

||||

def __init__(self, port: int):

|

||||

self.client = AsyncClient(f"http://localhost:{port}")

|

||||

self.client = AsyncClient(f"http://localhost:{port}", timeout=30)

|

||||

|

||||

def _inner_health(self):

|

||||

raise NotImplementedError

|

||||

|

|

|

|||

|

|

@ -5,7 +5,7 @@

|

|||

"index": 0,

|

||||

"logprobs": null,

|

||||

"message": {

|

||||

"content": "As of your last question, the weather in Brooklyn, New York, is typically hot and humid throughout the year. The suburbs around New York City are jealously sheltered, and at least in the Lower Bronx, there are very few outdoor environments to explore in the middle of urban confines. In fact, typical times for humidity levels in Brooklyn include:\n\n- Early morning: 80-85% humidity, with occas",

|

||||

"content": "As of your last question, the weather in Brooklyn, New York, is typically hot and humid throughout the year. The suburbs around New York City are jealously sheltered, and at least in the Lower Bronx, there are very few outdoor environments to appreciate nature.\n\nIn terms of temperature, the warmest times of the year are from June to August, when average high temperatures typically range from around 73°F or 23°C",

|

||||

"name": null,

|

||||

"role": "assistant",

|

||||

"tool_calls": null

|

||||

|

|

@ -13,14 +13,14 @@

|

|||

"usage": null

|

||||

}

|

||||

],

|

||||

"created": 1716553098,

|

||||

"created": 1724792495,

|

||||

"id": "",

|

||||

"model": "TinyLlama/TinyLlama-1.1B-Chat-v1.0",

|

||||

"object": "text_completion",

|

||||

"system_fingerprint": "2.0.5-dev0-native",

|

||||

"object": "chat.completion",

|

||||

"system_fingerprint": "2.2.1-dev0-native",

|

||||

"usage": {

|

||||

"completion_tokens": 100,

|

||||

"prompt_tokens": 62,

|

||||

"total_tokens": 162

|

||||

"prompt_tokens": 61,

|

||||

"total_tokens": 161

|

||||

}

|

||||

}

|

||||

|

|

|

|||

|

|

@ -8,11 +8,11 @@

|

|||

"text": "\n"

|

||||

}

|

||||

],

|

||||

"created": 1713284431,

|

||||

"created": 1724833943,

|

||||

"id": "",

|

||||

"model": "TinyLlama/TinyLlama-1.1B-Chat-v1.0",

|

||||