Added gradio example to docs (#867)

cc @osanseviero

Co-authored-by: Omar Sanseviero <osanseviero@gmail.com>

parent 888c029114

commit 97444f9367


@ -75,6 +75,81 @@ To serve both ChatUI and TGI in same environment, simply add your own endpoints


## Gradio


Gradio is a Python library that helps you build web applications for your machine learning models with a few lines of code. It has a `ChatInterface` wrapper that helps create neat UIs for chatbots. Let's take a look at how to create a chatbot with streaming mode using TGI and Gradio. First, install Gradio and the Hugging Face Hub Python library.


```bash
pip install huggingface-hub gradio
```


Assuming you are serving your model on port 8080, we will query it through [InferenceClient](consuming_tgi#inference-client).


```python
import gradio as gr
from huggingface_hub import InferenceClient

# Point the client at the locally served TGI endpoint.
client = InferenceClient(model="http://127.0.0.1:8080")

def inference(message, history):
    # Accumulate streamed tokens and yield the growing reply so the UI updates live.
    partial_message = ""
    for token in client.text_generation(message, max_new_tokens=20, stream=True):
        partial_message += token
        yield partial_message

gr.ChatInterface(
    inference,
    chatbot=gr.Chatbot(height=300),
    textbox=gr.Textbox(placeholder="Chat with me!", container=False, scale=7),
    description="This is the demo for Gradio UI consuming TGI endpoint with LLaMA 7B-Chat model.",
    title="Gradio 🤝 TGI",
    examples=["Are tomatoes vegetables?"],
    retry_btn="Retry",
    undo_btn="Undo",
    clear_btn="Clear",
).queue().launch()
```
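To see what Gradio receives from a streaming generator like `inference` without a running TGI server, the sketch below swaps the `client.text_generation(..., stream=True)` call for a hard-coded list of tokens. The `fake_token_stream` and `accumulate` helpers and the token values are hypothetical stand-ins, not part of TGI or Gradio; only the accumulation loop mirrors the example.

```python
from typing import Iterable, Iterator


def fake_token_stream() -> Iterator[str]:
    """Hypothetical stand-in for client.text_generation(..., stream=True)."""
    yield from ["Tomatoes", " are", " fruits", "."]


def accumulate(tokens: Iterable[str]) -> Iterator[str]:
    """Yield the growing message after each token, as `inference` does."""
    partial_message = ""
    for token in tokens:
        partial_message += token
        yield partial_message


# Collect every intermediate value the UI would be handed.
snapshots = list(accumulate(fake_token_stream()))
for snapshot in snapshots:
    print(snapshot)
```

Each yielded value is the full message so far rather than only the newest token, which is why the reply appears to grow in place as the text streams in.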


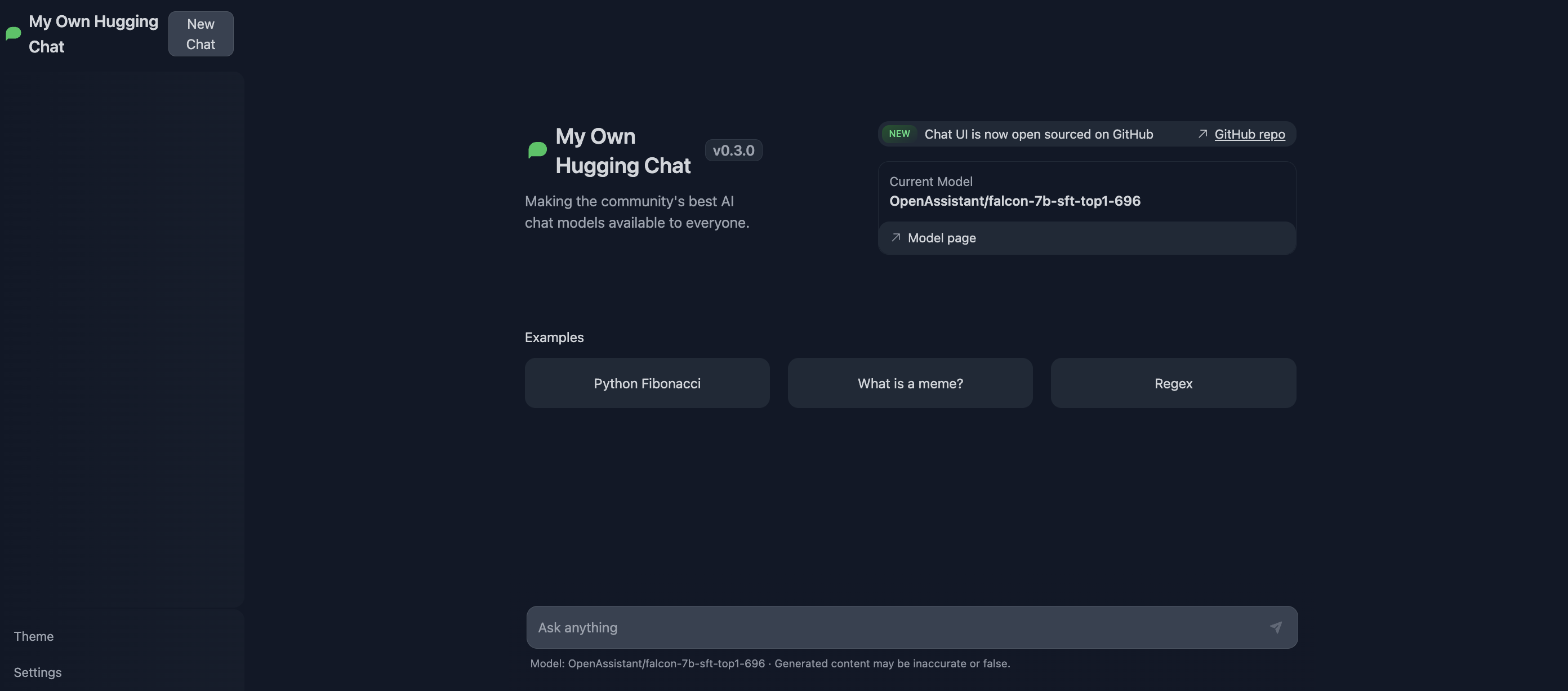

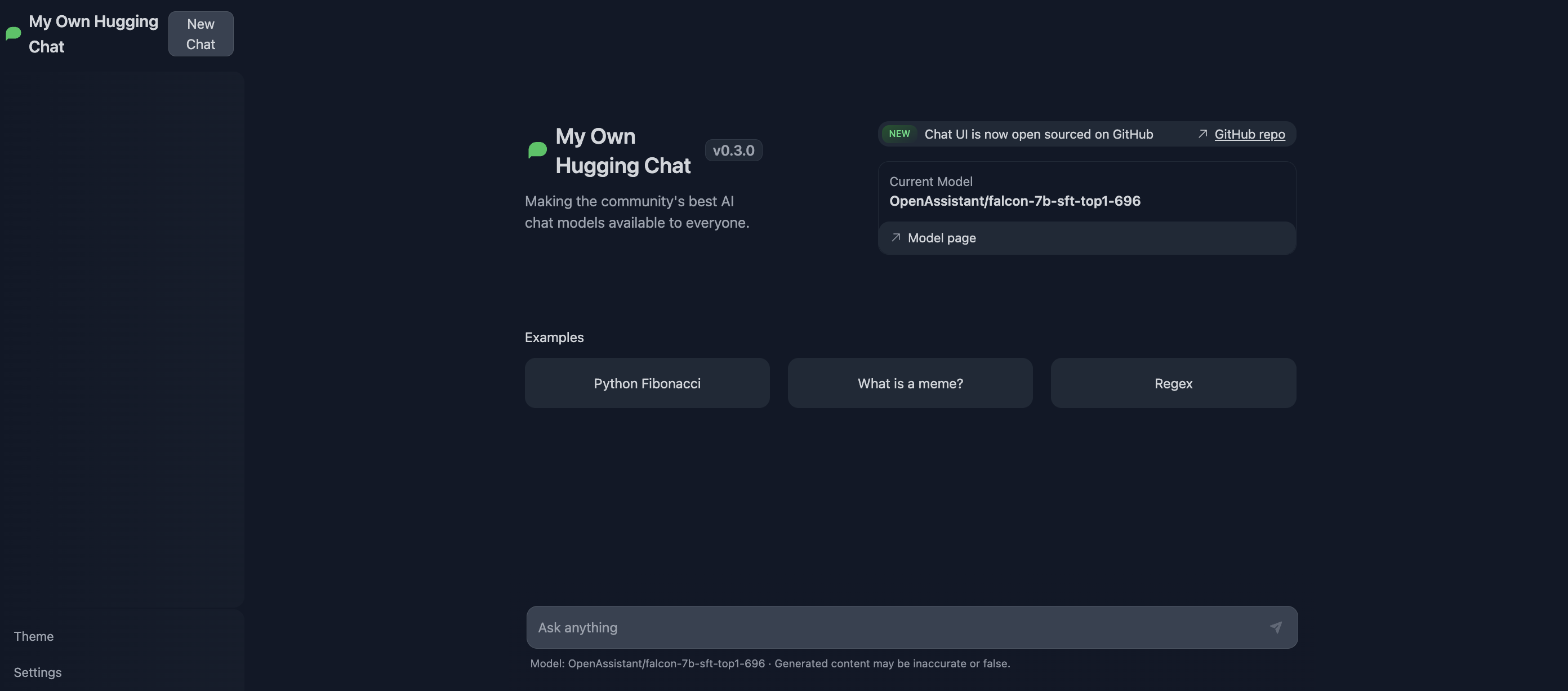

The UI looks like this 👇


<div class="flex justify-center">
    <img
        class="block dark:hidden"
        src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/tgi/gradio-tgi.png"
    />
    <img
        class="hidden dark:block"
        src="https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/tgi/gradio-tgi-dark.png"
    />
</div>


You can try the demo directly here 👇


<div class="block dark:hidden">
    <iframe
        src="https://merve-gradio-tgi-2.hf.space?__theme=light"
        width="850"
        height="750"
    ></iframe>
</div>

<div class="hidden dark:block">
    <iframe
        src="https://merve-gradio-tgi-2.hf.space?__theme=dark"
        width="850"
        height="750"
    ></iframe>
</div>


You can disable streaming mode by using `return` instead of `yield` in your inference function, as shown below.


```python
def inference(message, history):
    return client.text_generation(message, max_new_tokens=20)
```
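The `inference` functions in this section send only the latest `message` to the model and ignore `history`. If you want earlier turns to influence the reply, one option is to fold them into the prompt. The sketch below assumes `history` arrives as a list of `(user, assistant)` pairs, which depends on your Gradio version and `ChatInterface` settings. The `User:`/`Assistant:` layout is purely illustrative and `build_prompt` is a hypothetical helper; a chat model will usually expect its own prompt template.

```python
from typing import List, Sequence


def build_prompt(message: str, history: Sequence[Sequence[str]]) -> str:
    """Fold earlier (user, assistant) turns and the new message into one prompt."""
    lines: List[str] = []
    for user_turn, assistant_turn in history:
        lines.append(f"User: {user_turn}")
        lines.append(f"Assistant: {assistant_turn}")
    # End with an open "Assistant:" line so the model continues from there.
    lines.append(f"User: {message}")
    lines.append("Assistant:")
    return "\n".join(lines)


example_history = [("Are tomatoes vegetables?", "Botanically, they are fruits.")]
print(build_prompt("What about cucumbers?", example_history))
```

Inside `inference`, you would then pass `build_prompt(message, history)` to `client.text_generation` in place of `message`.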


You can read more about how to customize a `ChatInterface` [here](https://www.gradio.app/guides/creating-a-chatbot-fast).


## API documentation


You can consult the OpenAPI documentation of the `text-generation-inference` REST API using the `/docs` route. The Swagger UI is also available [here](https://huggingface.github.io/text-generation-inference).
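As a rough illustration of calling the REST API directly, the sketch below builds a POST request for the `/generate` route using only Python's standard library. It assumes a server is listening on `http://127.0.0.1:8080`, as in the Gradio example; `build_generate_request` is a hypothetical helper, and the `/docs` route remains the authoritative reference for the request and response schema.

```python
import json
import urllib.request


def build_generate_request(prompt, max_new_tokens=20, base_url="http://127.0.0.1:8080"):
    """Build (but do not send) a POST request for the /generate route."""
    payload = {"inputs": prompt, "parameters": {"max_new_tokens": max_new_tokens}}
    return urllib.request.Request(
        url=f"{base_url}/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_generate_request("Are tomatoes vegetables?")
print(request.full_url)
print(request.data.decode("utf-8"))

# With a server running, sending the request would look like this:
# with urllib.request.urlopen(request) as response:
#     print(json.loads(response.read())["generated_text"])
```

Building the request separately from sending it makes it easy to inspect the JSON body before pointing it at a live endpoint.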
