Merge branch 'main' into feat/page_re_alloc

commit

fe6a2756f1

|

|

@ -51,16 +51,19 @@ jobs:

|

|||

steps:

|

||||

- name: Checkout repository

|

||||

uses: actions/checkout@v3

|

||||

- name: Initialize Docker Buildx

|

||||

uses: docker/setup-buildx-action@v2.0.0

|

||||

with:

|

||||

install: true

|

||||

|

||||

- name: Inject slug/short variables

|

||||

uses: rlespinasse/github-slug-action@v4.4.1

|

||||

- name: Tailscale

|

||||

uses: huggingface/tailscale-action@main

|

||||

with:

|

||||

authkey: ${{ secrets.TAILSCALE_AUTHKEY }}

|

||||

slackChannel: ${{ secrets.SLACK_CIFEEDBACK_CHANNEL }}

|

||||

slackToken: ${{ secrets.SLACK_CIFEEDBACK_BOT_TOKEN }}

|

||||

- name: Initialize Docker Buildx

|

||||

uses: docker/setup-buildx-action@v2.0.0

|

||||

with:

|

||||

install: true

|

||||

- name: Login to GitHub Container Registry

|

||||

if: github.event_name != 'pull_request'

|

||||

uses: docker/login-action@v2

|

||||

|

|

@ -121,6 +124,7 @@ jobs:

|

|||

DOCKER_LABEL=sha-${{ env.GITHUB_SHA_SHORT }}${{ matrix.label }}

|

||||

tags: ${{ steps.meta.outputs.tags || steps.meta-pr.outputs.tags }}

|

||||

labels: ${{ steps.meta.outputs.labels || steps.meta-pr.outputs.labels }}

|

||||

network: host

|

||||

cache-from: type=registry,ref=registry.internal.huggingface.tech/api-inference/community/text-generation-inference:cache${{ matrix.label }},mode=min

|

||||

cache-to: type=registry,ref=registry.internal.huggingface.tech/api-inference/community/text-generation-inference:cache${{ matrix.label }},mode=min

|

||||

- name: Set up Python

|

||||

|

|

@ -139,3 +143,8 @@ jobs:

|

|||

export DOCKER_IMAGE=registry.internal.huggingface.tech/api-inference/community/text-generation-inference:sha-${{ env.GITHUB_SHA_SHORT }}

|

||||

export HUGGING_FACE_HUB_TOKEN=${{ secrets.HUGGING_FACE_HUB_TOKEN }}

|

||||

pytest -s -vv integration-tests

|

||||

- name: Tailscale Wait

|

||||

if: ${{ failure() || runner.debug == '1' }}

|

||||

uses: huggingface/tailscale-action@main

|

||||

with:

|

||||

waitForSSH: true

|

||||

|

|

|

|||

|

|

@ -33,9 +33,9 @@ jobs:

|

|||

- name: Install Rust

|

||||

uses: actions-rs/toolchain@v1

|

||||

with:

|

||||

# Released on: 02 May, 2024

|

||||

# https://releases.rs/docs/1.78.0/

|

||||

toolchain: 1.78.0

|

||||

# Released on: June 13, 2024

|

||||

# https://releases.rs/docs/1.79.0/

|

||||

toolchain: 1.79.0

|

||||

override: true

|

||||

components: rustfmt, clippy

|

||||

- name: Install Protoc

|

||||

|

|

|

|||

|

|

@ -0,0 +1,133 @@

|

|||

|

||||

# Contributor Covenant Code of Conduct

|

||||

|

||||

## Our Pledge

|

||||

|

||||

We as members, contributors, and leaders pledge to make participation in our

|

||||

community a harassment-free experience for everyone, regardless of age, body

|

||||

size, visible or invisible disability, ethnicity, sex characteristics, gender

|

||||

identity and expression, level of experience, education, socio-economic status,

|

||||

nationality, personal appearance, race, caste, color, religion, or sexual

|

||||

identity and orientation.

|

||||

|

||||

We pledge to act and interact in ways that contribute to an open, welcoming,

|

||||

diverse, inclusive, and healthy community.

|

||||

|

||||

## Our Standards

|

||||

|

||||

Examples of behavior that contributes to a positive environment for our

|

||||

community include:

|

||||

|

||||

* Demonstrating empathy and kindness toward other people

|

||||

* Being respectful of differing opinions, viewpoints, and experiences

|

||||

* Giving and gracefully accepting constructive feedback

|

||||

* Accepting responsibility and apologizing to those affected by our mistakes,

|

||||

and learning from the experience

|

||||

* Focusing on what is best not just for us as individuals, but for the overall

|

||||

community

|

||||

|

||||

Examples of unacceptable behavior include:

|

||||

|

||||

* The use of sexualized language or imagery, and sexual attention or advances of

|

||||

any kind

|

||||

* Trolling, insulting or derogatory comments, and personal or political attacks

|

||||

* Public or private harassment

|

||||

* Publishing others' private information, such as a physical or email address,

|

||||

without their explicit permission

|

||||

* Other conduct which could reasonably be considered inappropriate in a

|

||||

professional setting

|

||||

|

||||

## Enforcement Responsibilities

|

||||

|

||||

Community leaders are responsible for clarifying and enforcing our standards of

|

||||

acceptable behavior and will take appropriate and fair corrective action in

|

||||

response to any behavior that they deem inappropriate, threatening, offensive,

|

||||

or harmful.

|

||||

|

||||

Community leaders have the right and responsibility to remove, edit, or reject

|

||||

comments, commits, code, wiki edits, issues, and other contributions that are

|

||||

not aligned to this Code of Conduct, and will communicate reasons for moderation

|

||||

decisions when appropriate.

|

||||

|

||||

## Scope

|

||||

|

||||

This Code of Conduct applies within all community spaces, and also applies when

|

||||

an individual is officially representing the community in public spaces.

|

||||

Examples of representing our community include using an official e-mail address,

|

||||

posting via an official social media account, or acting as an appointed

|

||||

representative at an online or offline event.

|

||||

|

||||

## Enforcement

|

||||

|

||||

Instances of abusive, harassing, or otherwise unacceptable behavior may be

|

||||

reported to the community leaders responsible for enforcement at

|

||||

feedback@huggingface.co.

|

||||

All complaints will be reviewed and investigated promptly and fairly.

|

||||

|

||||

All community leaders are obligated to respect the privacy and security of the

|

||||

reporter of any incident.

|

||||

|

||||

## Enforcement Guidelines

|

||||

|

||||

Community leaders will follow these Community Impact Guidelines in determining

|

||||

the consequences for any action they deem in violation of this Code of Conduct:

|

||||

|

||||

### 1. Correction

|

||||

|

||||

**Community Impact**: Use of inappropriate language or other behavior deemed

|

||||

unprofessional or unwelcome in the community.

|

||||

|

||||

**Consequence**: A private, written warning from community leaders, providing

|

||||

clarity around the nature of the violation and an explanation of why the

|

||||

behavior was inappropriate. A public apology may be requested.

|

||||

|

||||

### 2. Warning

|

||||

|

||||

**Community Impact**: A violation through a single incident or series of

|

||||

actions.

|

||||

|

||||

**Consequence**: A warning with consequences for continued behavior. No

|

||||

interaction with the people involved, including unsolicited interaction with

|

||||

those enforcing the Code of Conduct, for a specified period of time. This

|

||||

includes avoiding interactions in community spaces as well as external channels

|

||||

like social media. Violating these terms may lead to a temporary or permanent

|

||||

ban.

|

||||

|

||||

### 3. Temporary Ban

|

||||

|

||||

**Community Impact**: A serious violation of community standards, including

|

||||

sustained inappropriate behavior.

|

||||

|

||||

**Consequence**: A temporary ban from any sort of interaction or public

|

||||

communication with the community for a specified period of time. No public or

|

||||

private interaction with the people involved, including unsolicited interaction

|

||||

with those enforcing the Code of Conduct, is allowed during this period.

|

||||

Violating these terms may lead to a permanent ban.

|

||||

|

||||

### 4. Permanent Ban

|

||||

|

||||

**Community Impact**: Demonstrating a pattern of violation of community

|

||||

standards, including sustained inappropriate behavior, harassment of an

|

||||

individual, or aggression toward or disparagement of classes of individuals.

|

||||

|

||||

**Consequence**: A permanent ban from any sort of public interaction within the

|

||||

community.

|

||||

|

||||

## Attribution

|

||||

|

||||

This Code of Conduct is adapted from the [Contributor Covenant][homepage],

|

||||

version 2.1, available at

|

||||

[https://www.contributor-covenant.org/version/2/1/code_of_conduct.html][v2.1].

|

||||

|

||||

Community Impact Guidelines were inspired by

|

||||

[Mozilla's code of conduct enforcement ladder][Mozilla CoC].

|

||||

|

||||

For answers to common questions about this code of conduct, see the FAQ at

|

||||

[https://www.contributor-covenant.org/faq][FAQ]. Translations are available at

|

||||

[https://www.contributor-covenant.org/translations][translations].

|

||||

|

||||

[homepage]: https://www.contributor-covenant.org

|

||||

[v2.1]: https://www.contributor-covenant.org/version/2/1/code_of_conduct.html

|

||||

[Mozilla CoC]: https://github.com/mozilla/diversity

|

||||

[FAQ]: https://www.contributor-covenant.org/faq

|

||||

[translations]: https://www.contributor-covenant.org/translations

|

||||

|

|

@ -0,0 +1,120 @@

|

|||

<!---

|

||||

Copyright 2024 The HuggingFace Team. All rights reserved.

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software

|

||||

distributed under the License is distributed on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

-->

|

||||

|

||||

# Contribute to text-generation-inference

|

||||

|

||||

Everyone is welcome to contribute, and we value everybody's contribution. Code

|

||||

contributions are not the only way to help the community. Answering questions, helping

|

||||

others, and improving the documentation are also immensely valuable.

|

||||

|

||||

It also helps us if you spread the word! Reference the library in blog posts

|

||||

about the awesome projects it made possible, shout out on Twitter every time it has

|

||||

helped you, or simply ⭐️ the repository to say thank you.

|

||||

|

||||

However you choose to contribute, please be mindful and respect our

|

||||

[code of conduct](https://github.com/huggingface/text-generation-inference/blob/main/CODE_OF_CONDUCT.md).

|

||||

|

||||

**This guide was heavily inspired by the awesome [scikit-learn guide to contributing](https://github.com/scikit-learn/scikit-learn/blob/main/CONTRIBUTING.md).**

|

||||

|

||||

## Ways to contribute

|

||||

|

||||

There are several ways you can contribute to text-generation-inference.

|

||||

|

||||

* Fix outstanding issues with the existing code.

|

||||

* Submit issues related to bugs or desired new features.

|

||||

* Contribute to the examples or to the documentation.

|

||||

|

||||

> All contributions are equally valuable to the community. 🥰

|

||||

|

||||

## Fixing outstanding issues

|

||||

|

||||

If you notice an issue with the existing code and have a fix in mind, feel free to [start contributing](https://docs.github.com/en/pull-requests/collaborating-with-pull-requests/proposing-changes-to-your-work-with-pull-requests/creating-a-pull-request) and open

|

||||

a Pull Request!

|

||||

|

||||

## Submitting a bug-related issue or feature request

|

||||

|

||||

Do your best to follow these guidelines when submitting a bug-related issue or a feature

|

||||

request. It will make it easier for us to come back to you quickly and with good

|

||||

feedback.

|

||||

|

||||

### Did you find a bug?

|

||||

|

||||

The text-generation-inference library is robust and reliable thanks to users who report the problems they encounter.

|

||||

|

||||

Before you report an issue, we would really appreciate it if you could **make sure the bug was not

|

||||

already reported** (use the search bar on GitHub under Issues). Your issue should also be related to bugs in the

|

||||

library itself, and not your code.

|

||||

|

||||

Once you've confirmed the bug hasn't already been reported, please include the following information in your issue so

|

||||

we can quickly resolve it:

|

||||

|

||||

* Your **OS type and version**, as well as your environment versions (versions of Rust, Python, and dependencies).

|

||||

* A short, self-contained code snippet that allows us to reproduce the bug.

|

||||

* The *full* traceback if an exception is raised.

|

||||

* Any additional information, like screenshots, that you think may help.

|

||||

|

||||

To get the OS and software versions automatically, you can re-run the launcher with the `--env` flag:

|

||||

|

||||

```bash

|

||||

text-generation-launcher --env

|

||||

```

|

||||

|

||||

This will precede the launch of the model with the information relative to your environment. We recommend pasting

|

||||

that in your issue report.

|

||||

|

||||

### Do you want a new feature?

|

||||

|

||||

If there is a new feature you'd like to see in text-generation-inference, please open an issue and describe:

|

||||

|

||||

1. What is the *motivation* behind this feature? Is it related to a problem or frustration with the library? Is it

|

||||

a feature related to something you need for a project? Is it something you worked on and think could benefit

|

||||

the community?

|

||||

|

||||

Whatever it is, we'd love to hear about it!

|

||||

|

||||

2. Describe your requested feature in as much detail as possible. The more you can tell us about it, the better

|

||||

we'll be able to help you.

|

||||

3. Provide a *code snippet* that demonstrates the feature's usage.

|

||||

4. If the feature is related to a paper, please include a link.

|

||||

|

||||

If your issue is well written we're already 80% of the way there by the time you create it.

|

||||

|

||||

We have added [templates](https://github.com/huggingface/text-generation-inference/tree/main/.github/ISSUE_TEMPLATE)

|

||||

to help you get started with your issue.

|

||||

|

||||

## Do you want to implement a new model?

|

||||

|

||||

New models are constantly released and if you want to implement a new model, please provide the following information:

|

||||

|

||||

* A short description of the model and a link to the paper.

|

||||

* Link to the implementation if it is open-sourced.

|

||||

* Link to the model weights if they are available.

|

||||

|

||||

If you are willing to contribute the model yourself, let us know so we can help you add it to text-generation-inference!

|

||||

|

||||

## Do you want to add documentation?

|

||||

|

||||

We're always looking for improvements to the documentation that make it more clear and accurate. Please let us know

|

||||

how the documentation can be improved, such as typos and any content that is missing, unclear, or inaccurate. We'll be

|

||||

happy to make the changes or help you make a contribution if you're interested!

|

||||

|

||||

## I want to become a maintainer of the project. How do I get there?

|

||||

|

||||

TGI is a project led and managed by Hugging Face as it powers our internal services. However, we are happy to have

|

||||

motivated individuals from other organizations join us as maintainers with the goal of making TGI the best inference

|

||||

service.

|

||||

|

||||

If you are such an individual (or organization), please reach out to us and let's collaborate.

|

||||

|

|

@ -1856,12 +1856,23 @@ dependencies = [

|

|||

|

||||

[[package]]

|

||||

name = "minijinja"

|

||||

version = "1.0.12"

|

||||

source = "git+https://github.com/mitsuhiko/minijinja.git?rev=5cd4efb#5cd4efb9e2639247df275fe6e22a5dbe0ce71b28"

|

||||

version = "2.0.2"

|

||||

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||

checksum = "e136ef580d7955019ab0a407b68d77c292a9976907e217900f3f76bc8f6dc1a4"

|

||||

dependencies = [

|

||||

"serde",

|

||||

]

|

||||

|

||||

[[package]]

|

||||

name = "minijinja-contrib"

|

||||

version = "2.0.2"

|

||||

source = "registry+https://github.com/rust-lang/crates.io-index"

|

||||

checksum = "15ee37078c98d31e510d6a7af488031a2c3ccacdb76c5c4fc98ddfe6d0e9da07"

|

||||

dependencies = [

|

||||

"minijinja",

|

||||

"serde",

|

||||

]

|

||||

|

||||

[[package]]

|

||||

name = "minimal-lexical"

|

||||

version = "0.2.1"

|

||||

|

|

@ -3604,6 +3615,7 @@ dependencies = [

|

|||

"metrics",

|

||||

"metrics-exporter-prometheus",

|

||||

"minijinja",

|

||||

"minijinja-contrib",

|

||||

"ngrok",

|

||||

"nohash-hasher",

|

||||

"once_cell",

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

# Rust builder

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.78 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

@ -140,9 +140,9 @@ RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" make build-eetq

|

|||

# Build marlin kernels

|

||||

FROM kernel-builder as marlin-kernels-builder

|

||||

WORKDIR /usr/src

|

||||

COPY server/Makefile-marlin Makefile

|

||||

COPY server/marlin/ .

|

||||

# Build specific version of transformers

|

||||

RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" make build-marlin

|

||||

RUN TORCH_CUDA_ARCH_LIST="8.0;8.6+PTX" python setup.py build

|

||||

|

||||

# Build Transformers CUDA kernels

|

||||

FROM kernel-builder as custom-kernels-builder

|

||||

|

|

@ -213,7 +213,7 @@ COPY --from=awq-kernels-builder /usr/src/llm-awq/awq/kernels/build/lib.linux-x86

|

|||

# Copy build artifacts from eetq kernels builder

|

||||

COPY --from=eetq-kernels-builder /usr/src/eetq/build/lib.linux-x86_64-cpython-310 /opt/conda/lib/python3.10/site-packages

|

||||

# Copy build artifacts from marlin kernels builder

|

||||

COPY --from=marlin-kernels-builder /usr/src/marlin/build/lib.linux-x86_64-cpython-310 /opt/conda/lib/python3.10/site-packages

|

||||

COPY --from=marlin-kernels-builder /usr/src/build/lib.linux-x86_64-cpython-310 /opt/conda/lib/python3.10/site-packages

|

||||

|

||||

# Copy builds artifacts from vllm builder

|

||||

COPY --from=vllm-builder /usr/src/vllm/build/lib.linux-x86_64-cpython-310 /opt/conda/lib/python3.10/site-packages

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

# Rust builder

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.78 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

|

|||

|

|

@ -1,4 +1,4 @@

|

|||

FROM lukemathwalker/cargo-chef:latest-rust-1.78 AS chef

|

||||

FROM lukemathwalker/cargo-chef:latest-rust-1.79 AS chef

|

||||

WORKDIR /usr/src

|

||||

|

||||

ARG CARGO_REGISTRIES_CRATES_IO_PROTOCOL=sparse

|

||||

|

|

|

|||

|

|

@ -17,6 +17,8 @@

|

|||

title: Supported Models and Hardware

|

||||

- local: messages_api

|

||||

title: Messages API

|

||||

- local: architecture

|

||||

title: Internal Architecture

|

||||

title: Getting started

|

||||

- sections:

|

||||

- local: basic_tutorials/consuming_tgi

|

||||

|

|

|

|||

|

|

@ -0,0 +1,227 @@

|

|||

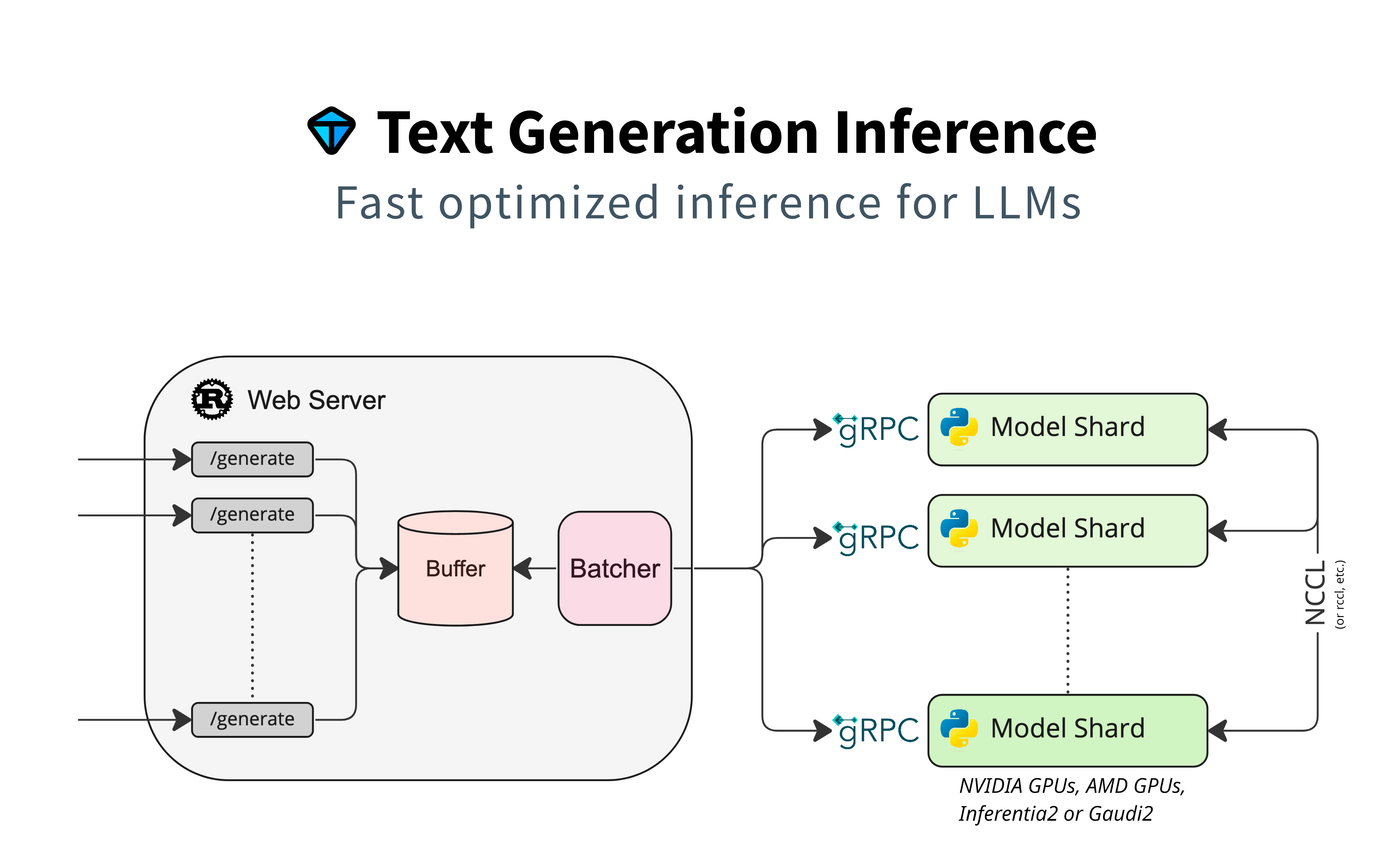

# Text Generation Inference Architecture

|

||||

|

||||

This document describes the architecture of Text Generation Inference (TGI) through the call flow between its separate components.

|

||||

|

||||

A high-level architecture diagram can be seen here:

|

||||

|

||||

|

||||

|

||||

The diagram shows the following separate components:

|

||||

|

||||

- **The router**, also named `webserver`, that receives the client requests, buffers them, creates some batches, and prepares gRPC calls to a model server.

|

||||

- **The model server**, responsible for receiving the gRPC requests and running inference on the model. If the model is sharded across multiple accelerators (e.g., multiple GPUs), the model server shards may be synchronized via NCCL or equivalent.

|

||||

- **The launcher**, a helper that launches one or several model servers (if the model is sharded) and then launches the router with compatible arguments.

|

||||

|

||||

The router and the model server can run on two different machines; they do not need to be deployed together.

|

||||

|

||||

## The Router

|

||||

|

||||

This component is a Rust web server binary that accepts HTTP requests using the custom [HTTP API](https://huggingface.github.io/text-generation-inference/), as well as OpenAI's [Messages API](https://huggingface.co/docs/text-generation-inference/messages_api).

|

||||

The router receives the API calls and handles the batching logic (an introduction to batching can be found [here](https://github.com/huggingface/text-generation-inference/blob/main/router/README.md)).

|

||||

It uses several strategies to reduce latency between requests and responses, with a focus on decoding latency. It relies on queues, schedulers, and block allocators to produce batched requests that are then sent to the model server.
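To make the queue-and-batch idea concrete, here is a minimal Python sketch of forming a batch from a queue under a prefill token budget. It is not the router's actual implementation (the router is written in Rust and also involves schedulers and block allocators); `PendingRequest` and `next_batch` are hypothetical names, and only the `max_batch_prefill_tokens` parameter name comes from the router's options.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class PendingRequest:
    request_id: int
    input_tokens: int  # number of prompt tokens, assumed to be counted already


def next_batch(queue: deque, max_batch_prefill_tokens: int) -> list:
    """Pop requests in arrival order until the prefill token budget is used up."""
    batch = []
    used = 0
    # Stop at the first request that would exceed the budget (strict FIFO).
    while queue and used + queue[0].input_tokens <= max_batch_prefill_tokens:
        request = queue.popleft()
        used += request.input_tokens
        batch.append(request)
    return batch


queue = deque(PendingRequest(i, n) for i, n in enumerate([1000, 2000, 1500, 500]))
first = next_batch(queue, max_batch_prefill_tokens=4096)
print([r.request_id for r in first])
```

The strict first-in-first-out cut-off keeps the sketch short; a real scheduler could just as well skip ahead to smaller requests that still fit.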

|

||||

|

||||

### Router's command line

|

||||

|

||||

Parameters are passed to the router on the command line (it does not rely on a configuration file):

|

||||

|

||||

```

|

||||

Text Generation Webserver

|

||||

|

||||

Usage: text-generation-router [OPTIONS]

|

||||

|

||||

Options:

|

||||

--max-concurrent-requests <MAX_CONCURRENT_REQUESTS>

|

||||

[env: MAX_CONCURRENT_REQUESTS=] [default: 128]

|

||||

--max-best-of <MAX_BEST_OF>

|

||||

[env: MAX_BEST_OF=] [default: 2]

|

||||

--max-stop-sequences <MAX_STOP_SEQUENCES>

|

||||

[env: MAX_STOP_SEQUENCES=] [default: 4]

|

||||

--max-top-n-tokens <MAX_TOP_N_TOKENS>

|

||||

[env: MAX_TOP_N_TOKENS=] [default: 5]

|

||||

--max-input-tokens <MAX_INPUT_TOKENS>

|

||||

[env: MAX_INPUT_TOKENS=] [default: 1024]

|

||||

--max-total-tokens <MAX_TOTAL_TOKENS>

|

||||

[env: MAX_TOTAL_TOKENS=] [default: 2048]

|

||||

--waiting-served-ratio <WAITING_SERVED_RATIO>

|

||||

[env: WAITING_SERVED_RATIO=] [default: 1.2]

|

||||

--max-batch-prefill-tokens <MAX_BATCH_PREFILL_TOKENS>

|

||||

[env: MAX_BATCH_PREFILL_TOKENS=] [default: 4096]

|

||||

--max-batch-total-tokens <MAX_BATCH_TOTAL_TOKENS>

|

||||

[env: MAX_BATCH_TOTAL_TOKENS=]

|

||||

--max-waiting-tokens <MAX_WAITING_TOKENS>

|

||||

[env: MAX_WAITING_TOKENS=] [default: 20]

|

||||

--max-batch-size <MAX_BATCH_SIZE>

|

||||

[env: MAX_BATCH_SIZE=]

|

||||

--hostname <HOSTNAME>

|

||||

[env: HOSTNAME=] [default: 0.0.0.0]

|

||||

-p, --port <PORT>

|

||||

[env: PORT=] [default: 3000]

|

||||

--master-shard-uds-path <MASTER_SHARD_UDS_PATH>

|

||||

[env: MASTER_SHARD_UDS_PATH=] [default: /tmp/text-generation-server-0]

|

||||

--tokenizer-name <TOKENIZER_NAME>

|

||||

[env: TOKENIZER_NAME=] [default: bigscience/bloom]

|

||||

--tokenizer-config-path <TOKENIZER_CONFIG_PATH>

|

||||

[env: TOKENIZER_CONFIG_PATH=]

|

||||

--revision <REVISION>

|

||||

[env: REVISION=]

|

||||

--validation-workers <VALIDATION_WORKERS>

|

||||

[env: VALIDATION_WORKERS=] [default: 2]

|

||||

--json-output

|

||||

[env: JSON_OUTPUT=]

|

||||

--otlp-endpoint <OTLP_ENDPOINT>

|

||||

[env: OTLP_ENDPOINT=]

|

||||

--cors-allow-origin <CORS_ALLOW_ORIGIN>

|

||||

[env: CORS_ALLOW_ORIGIN=]

|

||||

--ngrok

|

||||

[env: NGROK=]

|

||||

--ngrok-authtoken <NGROK_AUTHTOKEN>

|

||||

[env: NGROK_AUTHTOKEN=]

|

||||

--ngrok-edge <NGROK_EDGE>

|

||||

[env: NGROK_EDGE=]

|

||||

--messages-api-enabled

|

||||

[env: MESSAGES_API_ENABLED=]

|

||||

--disable-grammar-support

|

||||

[env: DISABLE_GRAMMAR_SUPPORT=]

|

||||

--max-client-batch-size <MAX_CLIENT_BATCH_SIZE>

|

||||

[env: MAX_CLIENT_BATCH_SIZE=] [default: 4]

|

||||

-h, --help

|

||||

Print help

|

||||

-V, --version

|

||||

Print version

|

||||

```
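As an illustration of how two of these limits could interact, the sketch below checks a request against `max_input_tokens` and `max_total_tokens`, using the defaults shown in the help output. The semantics are an assumption (the help text does not spell them out): the prompt is taken to be bounded by `max_input_tokens`, and the prompt plus the requested new tokens by `max_total_tokens`. `validate_request` is a hypothetical helper, not a function of the router.

```python
def validate_request(
    input_tokens: int,
    max_new_tokens: int,
    max_input_tokens: int = 1024,  # default shown for --max-input-tokens
    max_total_tokens: int = 2048,  # default shown for --max-total-tokens
) -> None:
    """Raise ValueError if the request does not fit the configured limits."""
    if input_tokens > max_input_tokens:
        raise ValueError(
            f"input has {input_tokens} tokens, limit is {max_input_tokens}"
        )
    total = input_tokens + max_new_tokens
    if total > max_total_tokens:
        raise ValueError(
            f"input + new tokens is {total}, limit is {max_total_tokens}"
        )


validate_request(input_tokens=512, max_new_tokens=1024)  # accepted: 1536 <= 2048
try:
    validate_request(input_tokens=1000, max_new_tokens=1500)
except ValueError as err:
    print(f"rejected: {err}")
```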

|

||||

|

||||

## The Model Server

|

||||

|

||||

The model server is a Python server that listens for gRPC requests, loads a given model, performs sharding to provide [tensor parallelism](https://huggingface.co/docs/text-generation-inference/conceptual/tensor_parallelism), and stays alive while waiting for new requests.

|

||||

The model server supports models instantiated using PyTorch and optimized for inference mainly on CUDA/ROCm.

|

||||

|

||||

### Model Server Variants

|

||||

|

||||

Several variants of the model server exist that are actively supported by Hugging Face:

|

||||

|

||||

- By default, the model server will attempt building [a server optimized for Nvidia GPUs with CUDA](https://huggingface.co/docs/text-generation-inference/installation_nvidia). The code for this version is hosted in the [main TGI repository](https://github.com/huggingface/text-generation-inference).

|

||||

- A [version optimized for AMD with ROCm](https://huggingface.co/docs/text-generation-inference/installation_amd) is hosted in the main TGI repository. Some model features differ.

|

||||

- The [version for Intel Gaudi](https://huggingface.co/docs/text-generation-inference/installation_gaudi) is maintained in a [forked repository](https://github.com/huggingface/tgi-gaudi), which is often resynchronized with the main TGI repository.

|

||||

- A [version for Neuron (AWS Inferentia2)](https://huggingface.co/docs/text-generation-inference/installation_inferentia) is maintained as part of [Optimum Neuron](https://github.com/huggingface/optimum-neuron/tree/main/text-generation-inference).

|

||||

- A version for Google TPUs is maintained as part of [Optimum TPU](https://github.com/huggingface/optimum-tpu/tree/main/text-generation-inference).

|

||||

|

||||

Not all variants provide the same features, as hardware and middleware capabilities do not provide the same optimizations.

|

||||

|

||||

### Command Line Interface

|

||||

|

||||

The official command line interface (CLI) for the server supports three subcommands, `download-weights`, `quantize` and `serve`:

|

||||

|

||||

- `download-weights` downloads weights from the Hub and, in some variants, converts them to a format adapted to the given implementation;

|

||||

- `quantize` quantizes a model using the `gptq` package. This feature is not available or supported on all variants;

|

||||

- `serve` starts the server, which loads a model (or a model shard), receives gRPC calls from the router, performs inference, and returns a formatted response to each request.

|

||||

|

||||

The command line parameters of `serve` in the TGI repository are:

|

||||

|

||||

```

|

||||

Usage: cli.py serve [OPTIONS] MODEL_ID

|

||||

|

||||

╭─ Arguments ──────────────────────────────────────────────────────────────────────────────────────────────╮

|

||||

│ * model_id TEXT [default: None] [required] │

|

||||

╰──────────────────────────────────────────────────────────────────────────────────────────────────────────╯

|

||||

╭─ Options ────────────────────────────────────────────────────────────────────────────────────────────────╮

|

||||

│ --revision TEXT [default: None] │

|

||||

│ --sharded --no-sharded [default: no-sharded] │

|

||||

│ --quantize [bitsandbytes|bitsandbytes [default: None] │

|

||||

│ -nf4|bitsandbytes-fp4|gptq │

|

||||

│ |awq|eetq|exl2|fp8] │

|

||||

│ --speculate INTEGER [default: None] │

|

||||

│ --dtype [float16|bfloat16] [default: None] │

|

||||

│ --trust-remote-code --no-trust-remote-code [default: │

|

||||

│ no-trust-remote-code] │

|

||||

│ --uds-path PATH [default: │

|

||||

│ /tmp/text-generation-serve… │

|

||||

│ --logger-level TEXT [default: INFO] │

|

||||

│ --json-output --no-json-output [default: no-json-output] │

|

||||

│ --otlp-endpoint TEXT [default: None] │

|

||||

│ --help Show this message and exit. │

|

||||

╰──────────────────────────────────────────────────────────────────────────────────────────────────────────╯

|

||||

```

|

||||

|

||||

Note that some variants might support different parameters, and may accept additional options passed through environment variables.

|

||||

|

||||

## Call Flow

|

||||

|

||||

Once both components are initialized, the weights are downloaded, and the model server is up and running, the router and model server exchange data and information through gRPC calls. There are currently two supported schemas, [v2](https://github.com/huggingface/text-generation-inference/blob/main/proto/generate.proto) and [v3](https://github.com/huggingface/text-generation-inference/blob/main/proto/v3/generate.proto). These two versions are almost identical, except for:

|

||||

|

||||

- input chunks support, for text and image data,

|

||||

- paged attention support

|

||||

|

||||

Here's a diagram that displays the exchanges that follow the router and model server startup.

|

||||

|

||||

```mermaid

|

||||

sequenceDiagram

|

||||

|

||||

Router->>Model Server: service discovery

|

||||

Model Server-->>Router: urls for other shards

|

||||

|

||||

Router->>Model Server: get model info

|

||||

Model Server-->>Router: shard info

|

||||

|

||||

Router->>Model Server: health check

|

||||

Model Server-->>Router: health OK

|

||||

|

||||

Router->>Model Server: warmup(max_input_tokens, max_batch_prefill_tokens, max_total_tokens, max_batch_size)

|

||||

Model Server-->>Router: warmup result

|

||||

```
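The startup exchange in the diagram can be mimicked in a few lines of Python. This is only a sketch under stated assumptions: `StubModelServer` and `start_router` are hypothetical stand-ins (the real exchange goes over gRPC and the router is a Rust binary), the returned values are placeholders, and only the call order and the `warmup` parameter names are taken from the diagram.

```python
class StubModelServer:
    """Stand-in for the gRPC model server; records the calls it receives."""

    def __init__(self):
        self.calls = []

    def service_discovery(self):
        self.calls.append("service_discovery")
        return ["/tmp/text-generation-server-0"]  # single shard, default UDS path

    def info(self):
        self.calls.append("info")
        return {"dtype": "float16"}  # placeholder shard info

    def health(self):
        self.calls.append("health")
        return True

    def warmup(self, max_input_tokens, max_batch_prefill_tokens,
               max_total_tokens, max_batch_size):
        self.calls.append("warmup")
        return {"max_batch_size": max_batch_size}  # placeholder warmup result


def start_router(server, max_input_tokens=1024, max_batch_prefill_tokens=4096,
                 max_total_tokens=2048, max_batch_size=None):
    """Run the four startup steps in the order shown in the diagram."""
    shard_urls = server.service_discovery()
    shard_info = server.info()
    if not server.health():
        raise RuntimeError("model server failed its health check")
    warmup_result = server.warmup(max_input_tokens, max_batch_prefill_tokens,
                                  max_total_tokens, max_batch_size)
    return {"shards": shard_urls, "info": shard_info, "warmup": warmup_result}


server = StubModelServer()
state = start_router(server)
print(server.calls)
```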

|

||||

|

||||

After these are done, the router is ready to receive generate calls from multiple clients. Here's an example.

|

||||

|

||||

```mermaid
sequenceDiagram
    participant Client 1
    participant Client 2
    participant Client 3
    participant Router
    participant Model Server

    Client 1->>Router: generate_stream
    Router->>Model Server: prefill(batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 1

    Router->>Model Server: decode(cached_batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 2

    Router->>Model Server: decode(cached_batch1)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 3

    Client 2->>Router: generate_stream
    Router->>Model Server: prefill(batch2)
    Note right of Model Server: This stops the previous batch, which is then restarted
    Model Server-->>Router: generations, cached_batch2, timings
    Router-->>Client 2: token 1'

    Router->>Model Server: decode(cached_batch1, cached_batch2)
    Model Server-->>Router: generations, cached_batch1, timings
    Router-->>Client 1: token 4
    Router-->>Client 2: token 2'

    Note left of Client 1: Client 1 leaves
    Router->>Model Server: filter_batch(cached_batch1, request_ids_to_keep=batch2)
    Model Server-->>Router: filtered batch

    Router->>Model Server: decode(cached_batch2)
    Model Server-->>Router: generations, cached_batch2, timings
    Router-->>Client 2: token 3'

    Client 3->>Router: generate_stream
    Note right of Model Server: This stops the previous batch, which is then restarted
    Router->>Model Server: prefill(batch3)
    Note left of Client 1: Client 3 leaves without receiving any batch
    Router->>Model Server: clear_cache(batch3)
    Note right of Model Server: This stops the previous batch, which is then restarted

    Router->>Model Server: decode(cached_batch3)
    Note right of Model Server: Last token (stopping criteria)
    Model Server-->>Router: generations, cached_batch3, timings
    Router-->>Client 2: token 4'


```
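The first half of the exchange above (prefill, decode, merging two cached batches, then filtering out a client that left) can be sketched as a toy simulation. This is only an illustration under stated assumptions: `ToyModelServer` and its bookkeeping are hypothetical and are not the real gRPC service; only the call names `prefill`, `decode` and `filter_batch` come from the diagram.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModelServer:
    """Hypothetical stand-in for the model server (not the real gRPC API)."""

    # batch_id -> {request_id: number of tokens generated so far}
    cache: dict = field(default_factory=dict)

    def prefill(self, batch_id, request_ids):
        # Prefill yields the first token for every request in a new batch.
        self.cache[batch_id] = {rid: 1 for rid in request_ids}
        return dict(self.cache[batch_id])

    def decode(self, batch_ids):
        # Decode merges the given cached batches and yields one more token each.
        merged = {}
        for bid in batch_ids:
            merged.update(self.cache.pop(bid))
        for rid in merged:
            merged[rid] += 1
        self.cache[batch_ids[0]] = merged
        return dict(merged)

    def filter_batch(self, batch_id, request_ids_to_keep):
        # Drop requests whose client left or that hit a stopping criterion.
        kept = {
            rid: n
            for rid, n in self.cache[batch_id].items()
            if rid in request_ids_to_keep
        }
        self.cache[batch_id] = kept
        return dict(kept)


server = ToyModelServer()
server.prefill(1, ["client1"])   # client 1 receives token 1
server.decode([1])               # token 2
server.decode([1])               # token 3
server.prefill(2, ["client2"])   # client 2 receives token 1'
state = server.decode([1, 2])    # token 4 and token 2'
after_leave = server.filter_batch(1, {"client2"})  # client 1 leaves
final = server.decode([1])       # token 3'
```

The per-request counters mirror the token numbers in the diagram: after the merged decode, client 1 has four tokens and client 2 has two, and only client 2 keeps advancing once the batch is filtered.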

|

||||

|

|

@ -20,7 +20,7 @@ Text Generation Inference enables serving optimized models on specific hardware

|

|||

- [Baichuan](https://huggingface.co/baichuan-inc/Baichuan2-7B-Chat)

|

||||

- [Falcon](https://huggingface.co/tiiuae/falcon-7b-instruct)

|

||||

- [StarCoder 2](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)

|

||||

- [Qwen 2](https://huggingface.co/bigcode/starcoder2-15b-instruct-v0.1)

|

||||

- [Qwen 2](https://huggingface.co/collections/Qwen/qwen2-6659360b33528ced941e557f)

|

||||

- [Opt](https://huggingface.co/facebook/opt-6.7b)

|

||||

- [T5](https://huggingface.co/google/flan-t5-xxl)

|

||||

- [Galactica](https://huggingface.co/facebook/galactica-120b)

|

||||

|

|

|

|||

|

|

@ -0,0 +1,84 @@

|

|||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 198,

|

||||

"logprob": -2.5742188,

|

||||

"special": false,

|

||||

"text": "\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -1.6230469,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 3270,

|

||||

"logprob": -2.046875,

|

||||

"special": false,

|

||||

"text": " \"\"\"\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -0.015281677,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 422,

|

||||

"logprob": -2.1425781,

|

||||

"special": false,

|

||||

"text": " if"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -0.9238281,

|

||||

"special": false,

|

||||

"text": " request"

|

||||

},

|

||||

{

|

||||

"id": 13204,

|

||||

"logprob": -0.076660156,

|

||||

"special": false,

|

||||

"text": ".method"

|

||||

},

|

||||

{

|

||||

"id": 624,

|

||||

"logprob": -0.021987915,

|

||||

"special": false,

|

||||

"text": " =="

|

||||

},

|

||||

{

|

||||

"id": 364,

|

||||

"logprob": -0.39208984,

|

||||

"special": false,

|

||||

"text": " '"

|

||||

},

|

||||

{

|

||||

"id": 3019,

|

||||

"logprob": -0.10821533,

|

||||

"special": false,

|

||||

"text": "POST"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "\n \"\"\"\n if request.method == 'POST"

|

||||

}

|

||||

|

|

@ -0,0 +1,84 @@

|

|||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": 0,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 13,

|

||||

"logprob": -2.2539062,

|

||||

"special": false,

|

||||

"text": "."

|

||||

},

|

||||

{

|

||||

"id": 578,

|

||||

"logprob": -0.15563965,

|

||||

"special": false,

|

||||

"text": " The"

|

||||

},

|

||||

{

|

||||

"id": 3622,

|

||||

"logprob": -0.8203125,

|

||||

"special": false,

|

||||

"text": " server"

|

||||

},

|

||||

{

|

||||

"id": 706,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " has"

|

||||

},

|

||||

{

|

||||

"id": 539,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " not"

|

||||

},

|

||||

{

|

||||

"id": 3686,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " yet"

|

||||

},

|

||||

{

|

||||

"id": 3288,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " sent"

|

||||

},

|

||||

{

|

||||

"id": 904,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " any"

|

||||

},

|

||||

{

|

||||

"id": 828,

|

||||

"logprob": 0.0,

|

||||

"special": false,

|

||||

"text": " data"

|

||||

},

|

||||

{

|

||||

"id": 382,

|

||||

"logprob": -1.5517578,

|

||||

"special": false,

|

||||

"text": ".\n\n"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "Test request. The server has not yet sent any data.\n\n"

|

||||

}
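As a reading aid for the `logprob` fields in this snapshot, the sketch below converts them to probabilities and sums them into a sequence log-probability. It assumes the values are natural logarithms, which the snapshot itself does not state; the list is copied from the ten generated tokens above.

```python
import math

# Logprobs of the ten generated tokens, copied from the snapshot above.
logprobs = [
    -2.2539062, -0.15563965, -0.8203125,
    0.0, 0.0, 0.0, 0.0, 0.0, 0.0,
    -1.5517578,
]

# Assumption: natural-log probabilities, so exp() recovers the probability.
probabilities = [math.exp(lp) for lp in logprobs]

# The log-probability of the whole sequence is the sum of per-token logprobs.
sequence_logprob = sum(logprobs)
```

Under that assumption, a `logprob` of `0.0` corresponds to a probability of exactly 1 for that token.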

|

||||

|

|

@ -0,0 +1,338 @@

|

|||

[

|

||||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 198,

|

||||

"logprob": -2.5742188,

|

||||

"special": false,

|

||||

"text": "\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -1.6220703,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 3270,

|

||||

"logprob": -2.0410156,

|

||||

"special": false,

|

||||

"text": " \"\"\"\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -0.015281677,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 422,

|

||||

"logprob": -2.1445312,

|

||||

"special": false,

|

||||

"text": " if"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -0.92333984,

|

||||

"special": false,

|

||||

"text": " request"

|

||||

},

|

||||

{

|

||||

"id": 13204,

|

||||

"logprob": -0.07672119,

|

||||

"special": false,

|

||||

"text": ".method"

|

||||

},

|

||||

{

|

||||

"id": 624,

|

||||

"logprob": -0.021987915,

|

||||

"special": false,

|

||||

"text": " =="

|

||||

},

|

||||

{

|

||||

"id": 364,

|

||||

"logprob": -0.39208984,

|

||||

"special": false,

|

||||

"text": " '"

|

||||

},

|

||||

{

|

||||

"id": 3019,

|

||||

"logprob": -0.10638428,

|

||||

"special": false,

|

||||

"text": "POST"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "\n \"\"\"\n if request.method == 'POST"

|

||||

},

|

||||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 198,

|

||||

"logprob": -2.5742188,

|

||||

"special": false,

|

||||

"text": "\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -1.6220703,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 3270,

|

||||

"logprob": -2.0410156,

|

||||

"special": false,

|

||||

"text": " \"\"\"\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -0.015281677,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 422,

|

||||

"logprob": -2.1445312,

|

||||

"special": false,

|

||||

"text": " if"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -0.92333984,

|

||||

"special": false,

|

||||

"text": " request"

|

||||

},

|

||||

{

|

||||

"id": 13204,

|

||||

"logprob": -0.07672119,

|

||||

"special": false,

|

||||

"text": ".method"

|

||||

},

|

||||

{

|

||||

"id": 624,

|

||||

"logprob": -0.021987915,

|

||||

"special": false,

|

||||

"text": " =="

|

||||

},

|

||||

{

|

||||

"id": 364,

|

||||

"logprob": -0.39208984,

|

||||

"special": false,

|

||||

"text": " '"

|

||||

},

|

||||

{

|

||||

"id": 3019,

|

||||

"logprob": -0.10638428,

|

||||

"special": false,

|

||||

"text": "POST"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "\n \"\"\"\n if request.method == 'POST"

|

||||

},

|

||||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 198,

|

||||

"logprob": -2.5742188,

|

||||

"special": false,

|

||||

"text": "\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -1.6220703,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 3270,

|

||||

"logprob": -2.0410156,

|

||||

"special": false,

|

||||

"text": " \"\"\"\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -0.015281677,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 422,

|

||||

"logprob": -2.1445312,

|

||||

"special": false,

|

||||

"text": " if"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -0.92333984,

|

||||

"special": false,

|

||||

"text": " request"

|

||||

},

|

||||

{

|

||||

"id": 13204,

|

||||

"logprob": -0.07672119,

|

||||

"special": false,

|

||||

"text": ".method"

|

||||

},

|

||||

{

|

||||

"id": 624,

|

||||

"logprob": -0.021987915,

|

||||

"special": false,

|

||||

"text": " =="

|

||||

},

|

||||

{

|

||||

"id": 364,

|

||||

"logprob": -0.39208984,

|

||||

"special": false,

|

||||

"text": " '"

|

||||

},

|

||||

{

|

||||

"id": 3019,

|

||||

"logprob": -0.10638428,

|

||||

"special": false,

|

||||

"text": "POST"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "\n \"\"\"\n if request.method == 'POST"

|

||||

},

|

||||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 10,

|

||||

"prefill": [

|

||||

{

|

||||

"id": 2323,

|

||||

"logprob": null,

|

||||

"text": "Test"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -11.34375,

|

||||

"text": " request"

|

||||

}

|

||||

],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 198,

|

||||

"logprob": -2.5742188,

|

||||

"special": false,

|

||||

"text": "\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -1.6220703,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 3270,

|

||||

"logprob": -2.0410156,

|

||||

"special": false,

|

||||

"text": " \"\"\"\n"

|

||||

},

|

||||

{

|

||||

"id": 262,

|

||||

"logprob": -0.015281677,

|

||||

"special": false,

|

||||

"text": " "

|

||||

},

|

||||

{

|

||||

"id": 422,

|

||||

"logprob": -2.1445312,

|

||||

"special": false,

|

||||

"text": " if"

|

||||

},

|

||||

{

|

||||

"id": 1715,

|

||||

"logprob": -0.92333984,

|

||||

"special": false,

|

||||

"text": " request"

|

||||

},

|

||||

{

|

||||

"id": 13204,

|

||||

"logprob": -0.07672119,

|

||||

"special": false,

|

||||

"text": ".method"

|

||||

},

|

||||

{

|

||||

"id": 624,

|

||||

"logprob": -0.021987915,

|

||||

"special": false,

|

||||

"text": " =="

|

||||

},

|

||||

{

|

||||

"id": 364,

|

||||

"logprob": -0.39208984,

|

||||

"special": false,

|

||||

"text": " '"

|

||||

},

|

||||

{

|

||||

"id": 3019,

|

||||

"logprob": -0.10638428,

|

||||

"special": false,

|

||||

"text": "POST"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "\n \"\"\"\n if request.method == 'POST"

|

||||

}

|

||||

]

|

||||

|

|

@ -0,0 +1,61 @@

|

|||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "eos_token",

|

||||

"generated_tokens": 8,

|

||||

"prefill": [],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 2502,

|

||||

"logprob": -1.734375,

|

||||

"special": false,

|

||||

"text": "image"

|

||||

},

|

||||

{

|

||||

"id": 2196,

|

||||

"logprob": -0.5756836,

|

||||

"special": false,

|

||||

"text": " result"

|

||||

},

|

||||

{

|

||||

"id": 604,

|

||||

"logprob": -0.007843018,

|

||||

"special": false,

|

||||

"text": " for"

|

||||

},

|

||||

{

|

||||

"id": 12254,

|

||||

"logprob": -1.7167969,

|

||||

"special": false,

|

||||

"text": " chicken"

|

||||

},

|

||||

{

|

||||

"id": 611,

|

||||

"logprob": -0.17053223,

|

||||

"special": false,

|

||||

"text": " on"

|

||||

},

|

||||

{

|

||||

"id": 573,

|

||||

"logprob": -0.7626953,

|

||||

"special": false,

|

||||

"text": " the"

|

||||

},

|

||||

{

|

||||

"id": 8318,

|

||||

"logprob": -0.02709961,

|

||||

"special": false,

|

||||

"text": " beach"

|

||||

},

|

||||

{

|

||||

"id": 1,

|

||||

"logprob": -0.20739746,

|

||||

"special": true,

|

||||

"text": "<eos>"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": "image result for chicken on the beach"

|

||||

}
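A snapshot of this shape can be sanity-checked by comparing its token list with its top-level fields. The sketch below is a hypothetical helper, not part of the test suite; it assumes, as this snapshot suggests, that special tokens such as `<eos>` count towards `generated_tokens` but are left out of `generated_text`. Logprobs are omitted because the check does not use them.

```python
def check_snapshot(snapshot):
    """Return True if the token details agree with the top-level fields."""
    details = snapshot["details"]
    tokens = details["tokens"]
    # Assumption: special tokens are excluded from the generated text.
    visible_text = "".join(t["text"] for t in tokens if not t["special"])
    return (
        len(tokens) == details["generated_tokens"]
        and visible_text == snapshot["generated_text"]
    )


# Token texts and flags copied from the snapshot above.
snapshot = {
    "details": {
        "finish_reason": "eos_token",
        "generated_tokens": 8,
        "tokens": [
            {"id": 2502, "special": False, "text": "image"},
            {"id": 2196, "special": False, "text": " result"},
            {"id": 604, "special": False, "text": " for"},
            {"id": 12254, "special": False, "text": " chicken"},
            {"id": 611, "special": False, "text": " on"},
            {"id": 573, "special": False, "text": " the"},
            {"id": 8318, "special": False, "text": " beach"},
            {"id": 1, "special": True, "text": "<eos>"},
        ],
    },
    "generated_text": "image result for chicken on the beach",
}

ok = check_snapshot(snapshot)
```

Snapshots produced with `return_full_text=True` also include the prompt in `generated_text`, so the check would need to account for that prefix.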

|

||||

|

|

@ -0,0 +1,23 @@

|

|||

{

|

||||

"choices": [

|

||||

{

|

||||

"finish_reason": "eos_token",

|

||||

"index": 0,

|

||||

"logprobs": null,

|

||||

"message": {

|

||||

"content": "{\n \"temperature\": [\n 35,\n 34,\n 36\n ],\n \"unit\": \"°c\"\n}",

|

||||

"role": "assistant"

|

||||

}

|

||||

}

|

||||

],

|

||||

"created": 1718044128,

|

||||

"id": "",

|

||||

"model": "TinyLlama/TinyLlama-1.1B-Chat-v1.0",

|

||||

"object": "text_completion",

|

||||

"system_fingerprint": "2.0.5-dev0-native",

|

||||

"usage": {

|

||||

"completion_tokens": 39,

|

||||

"prompt_tokens": 136,

|

||||

"total_tokens": 175

|

||||

}

|

||||

}
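The `content` field of this response is itself a JSON document serialized as a string, so a client has to decode it a second time. A minimal sketch, using only the fields shown above and the standard `json` module:

```python
import json

# Relevant fields copied from the snapshot above.
response = {
    "choices": [
        {
            "finish_reason": "eos_token",
            "index": 0,
            "message": {
                "content": "{\n \"temperature\": [\n 35,\n 34,\n 36\n ],\n \"unit\": \"°c\"\n}",
                "role": "assistant",
            },
        }
    ],
    "usage": {"completion_tokens": 39, "prompt_tokens": 136, "total_tokens": 175},
}

# The message content is a JSON string; decode it into a Python dict.
content = json.loads(response["choices"][0]["message"]["content"])

# The usage block should be internally consistent.
usage = response["usage"]
usage_is_consistent = (
    usage["prompt_tokens"] + usage["completion_tokens"] == usage["total_tokens"]
)
```

Here the decoded content is a dict with a `temperature` list and a `unit` string, and the token counts add up (136 + 39 = 175).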

|

||||

|

|

@ -0,0 +1,85 @@

|

|||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "eos_token",

|

||||

"generated_tokens": 12,

|

||||

"prefill": [],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 450,

|

||||

"logprob": -0.26342773,

|

||||

"special": false,

|

||||

"text": " The"

|

||||

},

|

||||

{

|

||||

"id": 21282,

|

||||

"logprob": -0.01838684,

|

||||

"special": false,

|

||||

"text": " cow"

|

||||

},

|

||||

{

|

||||

"id": 322,

|

||||

"logprob": -0.18041992,

|

||||

"special": false,

|

||||

"text": " and"

|

||||

},

|

||||

{

|

||||

"id": 521,

|

||||

"logprob": -0.62841797,

|

||||

"special": false,

|

||||

"text": " ch"

|

||||

},

|

||||

{

|

||||

"id": 21475,

|

||||

"logprob": -0.0037956238,

|

||||

"special": false,

|

||||

"text": "icken"

|

||||

},

|

||||

{

|

||||

"id": 526,

|

||||

"logprob": -0.018737793,

|

||||

"special": false,

|

||||

"text": " are"

|

||||

},

|

||||

{

|

||||

"id": 373,

|

||||

"logprob": -1.0820312,

|

||||

"special": false,

|

||||

"text": " on"

|

||||

},

|

||||

{

|

||||

"id": 263,

|

||||

"logprob": -0.5083008,

|

||||

"special": false,

|

||||

"text": " a"

|

||||

},

|

||||

{

|

||||

"id": 25695,

|

||||

"logprob": -0.07128906,

|

||||

"special": false,

|

||||

"text": " beach"

|

||||

},

|

||||

{

|

||||

"id": 29889,

|

||||

"logprob": -0.12573242,

|

||||

"special": false,

|

||||

"text": "."

|

||||

},

|

||||

{

|

||||

"id": 32002,

|

||||

"logprob": -0.0029792786,

|

||||

"special": true,

|

||||

"text": "<end_of_utterance>"

|

||||

},

|

||||

{

|

||||

"id": 2,

|

||||

"logprob": -0.00024962425,

|

||||

"special": true,

|

||||

"text": "</s>"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": " The cow and chicken are on a beach."

|

||||

}

|

||||

|

|

@ -0,0 +1,133 @@

|

|||

{

|

||||

"details": {

|

||||

"best_of_sequences": null,

|

||||

"finish_reason": "length",

|

||||

"generated_tokens": 20,

|

||||

"prefill": [],

|

||||

"seed": null,

|

||||

"tokens": [

|

||||

{

|

||||

"id": 415,

|

||||

"logprob": -0.04421997,

|

||||

"special": false,

|

||||

"text": " The"

|

||||

},

|

||||

{

|

||||

"id": 12072,

|

||||

"logprob": -0.13500977,

|

||||

"special": false,

|

||||

"text": " cow"

|

||||

},

|

||||

{

|

||||

"id": 349,

|

||||

"logprob": -0.06750488,

|

||||

"special": false,

|

||||

"text": " is"

|

||||

},

|

||||

{

|

||||

"id": 6328,

|

||||

"logprob": -0.6352539,

|

||||

"special": false,

|

||||

"text": " standing"

|

||||

},

|

||||

{

|

||||

"id": 356,

|

||||

"logprob": -0.16186523,

|

||||

"special": false,

|

||||

"text": " on"

|

||||

},

|

||||

{

|

||||

"id": 272,

|

||||

"logprob": -0.5078125,

|

||||

"special": false,

|

||||

"text": " the"

|

||||

},

|

||||

{

|

||||

"id": 10305,

|

||||

"logprob": -0.017913818,

|

||||

"special": false,

|

||||

"text": " beach"

|

||||

},

|

||||

{

|

||||

"id": 304,

|

||||

"logprob": -1.5205078,

|

||||

"special": false,

|

||||

"text": " and"

|

||||

},

|

||||

{

|

||||

"id": 272,

|

||||

"logprob": -0.029174805,

|

||||

"special": false,

|

||||

"text": " the"

|

||||

},

|

||||

{

|

||||

"id": 13088,

|

||||

"logprob": -0.003479004,

|

||||

"special": false,

|

||||

"text": " chicken"

|

||||

},

|

||||

{

|

||||

"id": 349,

|

||||

"logprob": -0.0035095215,

|

||||

"special": false,

|

||||

"text": " is"

|

||||

},

|

||||

{

|

||||

"id": 6398,

|

||||

"logprob": -0.3088379,

|

||||

"special": false,

|

||||

"text": " sitting"

|

||||

},

|

||||

{

|

||||

"id": 356,

|

||||

"logprob": -0.027755737,

|

||||

"special": false,

|

||||

"text": " on"

|

||||

},

|

||||

{

|

||||

"id": 264,

|

||||

"logprob": -0.31884766,

|

||||

"special": false,

|

||||

"text": " a"

|

||||

},

|

||||

{

|

||||

"id": 17972,

|

||||

"logprob": -0.047943115,

|

||||

"special": false,

|

||||

"text": " pile"

|

||||

},

|

||||

{

|

||||

"id": 302,

|

||||

"logprob": -0.0002925396,

|

||||

"special": false,

|

||||

"text": " of"

|

||||

},

|

||||

{

|

||||

"id": 2445,

|

||||

"logprob": -0.02935791,

|

||||

"special": false,

|

||||

"text": " money"

|

||||

},

|

||||

{

|

||||

"id": 28723,

|

||||

"logprob": -0.031219482,

|

||||

"special": false,

|

||||

"text": "."

|

||||

},

|

||||

{

|

||||

"id": 32002,

|

||||

"logprob": -0.00034475327,

|

||||

"special": true,

|

||||

"text": "<end_of_utterance>"

|

||||

},

|

||||

{

|

||||

"id": 2,

|

||||

"logprob": -1.1920929e-07,

|

||||

"special": true,

|

||||

"text": "</s>"

|

||||

}

|

||||

],

|

||||

"top_tokens": null

|

||||

},

|

||||

"generated_text": " The cow is standing on the beach and the chicken is sitting on a pile of money."

|

||||

}

|

||||

|

|

@ -0,0 +1,65 @@

|

|||

import pytest


@pytest.fixture(scope="module")
def flash_llama_gptq_marlin_handle(launcher):
    with launcher(
        "astronomer/Llama-3-8B-Instruct-GPTQ-4-Bit", num_shard=2, quantize="marlin"
    ) as handle:
        yield handle


@pytest.fixture(scope="module")
async def flash_llama_gptq_marlin(flash_llama_gptq_marlin_handle):
    await flash_llama_gptq_marlin_handle.health(300)
    return flash_llama_gptq_marlin_handle.client


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin(flash_llama_gptq_marlin, response_snapshot):
    response = await flash_llama_gptq_marlin.generate(
        "Test request", max_new_tokens=10, decoder_input_details=True
    )

    assert response.details.generated_tokens == 10
    assert response == response_snapshot


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin_all_params(
    flash_llama_gptq_marlin, response_snapshot
):
    response = await flash_llama_gptq_marlin.generate(
        "Test request",
        max_new_tokens=10,
        repetition_penalty=1.2,
        return_full_text=True,
        temperature=0.5,
        top_p=0.9,
        top_k=10,
        truncate=5,
        typical_p=0.9,
        watermark=True,
        decoder_input_details=True,
        seed=0,
    )

    assert response.details.generated_tokens == 10
    assert response == response_snapshot


@pytest.mark.asyncio
@pytest.mark.private
async def test_flash_llama_gptq_marlin_load(
    flash_llama_gptq_marlin, generate_load, response_snapshot
):
    responses = await generate_load(
        flash_llama_gptq_marlin, "Test request", max_new_tokens=10, n=4
    )

|

||||

|

||||