
🤗 Diffusers provides pretrained diffusion models across multiple modalities, such as vision and audio, and serves as a modular toolbox for inference and training of diffusion models.

More precisely, 🤗 Diffusers offers:

- State-of-the-art diffusion pipelines that can be run in inference with just a couple of lines of code (see src/diffusers/pipelines).

- Various noise schedulers that can be used interchangeably for the preferred speed vs. quality trade-off in inference (see src/diffusers/schedulers).

- Multiple types of models, such as UNet, that can be used as building blocks in an end-to-end diffusion system (see src/diffusers/models).

- Training examples to show how to train the most popular diffusion models (see examples).

Definitions

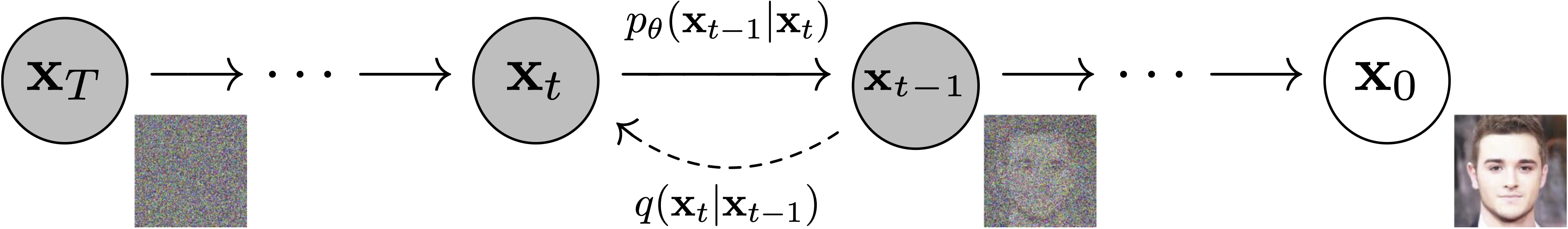

Models: A neural network that models p_\theta(\mathbf{x}_{t-1}|\mathbf{x}_t) (see figure) and is trained end-to-end to denoise a noisy input into an image.

Examples: UNet, Conditioned UNet, 3D UNet, Transformer UNet

Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
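As a reading aid, the reverse (denoising) transition that the model parameterizes can be written out explicitly. The notation below follows the DDPM paper cited above rather than anything defined in this README, and the second line assumes the noise-prediction parameterization used in that paper:

```latex
p_\theta(\mathbf{x}_{t-1} \mid \mathbf{x}_t)
  = \mathcal{N}\big(\mathbf{x}_{t-1};\ \boldsymbol{\mu}_\theta(\mathbf{x}_t, t),\ \boldsymbol{\Sigma}_\theta(\mathbf{x}_t, t)\big),
\qquad
\boldsymbol{\mu}_\theta(\mathbf{x}_t, t)
  = \frac{1}{\sqrt{\alpha_t}}\left(\mathbf{x}_t - \frac{\beta_t}{\sqrt{1 - \bar{\alpha}_t}}\,\boldsymbol{\epsilon}_\theta(\mathbf{x}_t, t)\right)
```

Here \alpha_t = 1 - \beta_t and \bar{\alpha}_t is the product of \alpha_s for s up to t. The network output \boldsymbol{\epsilon}_\theta(\mathbf{x}_t, t) is the predicted noise.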

Schedulers: Algorithm class for both inference and training. The class provides functionality to compute the previous image according to the alpha, beta schedule, as well as to predict noise for training. Examples: DDPM, DDIM, PNDM, DEIS

Sampling and training algorithms. Figure from DDPM paper (https://arxiv.org/abs/2006.11239).
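To make the "alpha, beta schedule" concrete, here is a minimal standard-library sketch of a linear beta schedule and the forward noising step used during training. The function names are hypothetical and not part of diffusers. The default values (1000 steps, betas from 1e-4 to 0.02) are the ones reported in the DDPM paper, not necessarily the defaults of any scheduler in this library.

```python
import math
import random


def linear_beta_schedule(num_train_timesteps=1000, beta_start=1e-4, beta_end=0.02):
    """Linearly spaced betas (hypothetical helper, DDPM-paper value range)."""
    step = (beta_end - beta_start) / (num_train_timesteps - 1)
    return [beta_start + i * step for i in range(num_train_timesteps)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alphas_cumprod, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alphas_cumprod.append(running)
    return alphas_cumprod


def add_noise(x0, noise, t, alphas_cumprod):
    """Forward process: x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * noise."""
    a = alphas_cumprod[t]
    return [math.sqrt(a) * x + math.sqrt(1.0 - a) * n for x, n in zip(x0, noise)]


betas = linear_beta_schedule()
alphas_cumprod = cumulative_alphas(betas)

rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]  # toy "image" of three pixel values in [-1, 1]
noise = [rng.gauss(0.0, 1.0) for _ in x0]
x_noisy = add_noise(x0, noise, t=999, alphas_cumprod=alphas_cumprod)
```

At the last timestep alpha_bar is close to zero, so x_noisy is almost pure noise. At t=0 it is almost identical to x0.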

Diffusion Pipeline: End-to-end pipeline that includes multiple diffusion models, possibly text encoders, ... Examples: Glide, Latent-Diffusion, Imagen, DALL-E 2

Figure from Imagen (https://imagen.research.google/).

Philosophy

- Readability and clarity are preferred over highly optimized code. Strong emphasis is put on providing readable, intuitive and elementary code design. E.g., the provided schedulers are separated from the provided models and provide well-commented code that can be read alongside the original paper.

- Diffusers is modality independent and focuses on providing pretrained models and tools to build systems that generate continuous outputs, e.g. vision and audio.

- Diffusion models and schedulers are provided as concise, elementary building blocks. Diffusion pipelines, in contrast, are a collection of end-to-end diffusion systems that can be used out of the box, should stay as close as possible to their original implementation, and can include components of other libraries, such as text encoders. Examples of diffusion pipelines are Glide and Latent Diffusion.
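The separation of schedulers from models can be pictured with a toy sketch. Everything below is hypothetical: the `Scheduler` protocol, the two toy scheduler classes and `toy_model` are stand-ins invented for illustration and are not the library's classes. The point is only that one sampling loop can run unchanged with different schedulers, as long as they share a small interface.

```python
from typing import Callable, List, Protocol


class Scheduler(Protocol):
    """Hypothetical minimal interface, not the library's actual base class."""

    timesteps: List[int]

    def step(self, residual: float, t: int, sample: float) -> float: ...


class HalvingScheduler:
    """Toy scheduler: removes half of the predicted residual at each step."""

    def __init__(self, num_steps: int) -> None:
        self.timesteps = list(range(num_steps - 1, -1, -1))

    def step(self, residual: float, t: int, sample: float) -> float:
        return sample - 0.5 * residual


class FullStepScheduler:
    """Toy scheduler: removes the whole predicted residual in one step."""

    def __init__(self, num_steps: int) -> None:
        self.timesteps = list(range(num_steps - 1, -1, -1))

    def step(self, residual: float, t: int, sample: float) -> float:
        return sample - residual


def toy_model(sample: float, t: int) -> float:
    """Stand-in for a denoising network: 'predicts' the offset from the target 1.0."""
    return sample - 1.0


def sample_loop(model: Callable[[float, int], float], scheduler: Scheduler, start: float) -> float:
    """Generic loop: the model predicts a residual, the scheduler applies it."""
    sample = start
    for t in scheduler.timesteps:
        residual = model(sample, t)
        sample = scheduler.step(residual, t, sample)
    return sample


slow = sample_loop(toy_model, HalvingScheduler(num_steps=10), start=5.0)
fast = sample_loop(toy_model, FullStepScheduler(num_steps=1), start=5.0)
```

Both runs move the sample toward the same target. The second does so in a single step, which mirrors the speed vs. quality trade-off that swapping schedulers is meant to expose.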

Quickstart

Check out this notebook: https://colab.research.google.com/drive/1nMfF04cIxg6FujxsNYi9kiTRrzj4_eZU?usp=sharing

Installation

pip install diffusers # should install diffusers 0.0.4

1. diffusers as a toolbox for schedulers and models

diffusers is more modularized than transformers. The idea is that researchers and engineers can easily use only parts of the library for their own use cases.

It could become a central place for all kinds of models, schedulers, training utils and processors that one can mix and match for one's own use case.

Both models and schedulers should be load- and saveable from the Hub.

For more examples see schedulers and models

Example for Unconditional Image generation DDPM:

import torch
from diffusers import UNetUnconditionalModel, DDIMScheduler
import PIL.Image
import numpy as np
import tqdm

torch_device = "cuda" if torch.cuda.is_available() else "cpu"

# 1. Load models
scheduler = DDIMScheduler.from_config("fusing/ddpm-celeba-hq", tensor_format="pt")
unet = UNetUnconditionalModel.from_pretrained("fusing/ddpm-celeba-hq", ddpm=True).to(torch_device)

# 2. Sample gaussian noise
generator = torch.manual_seed(23)
unet.image_size = unet.resolution
image = torch.randn(
    (1, unet.in_channels, unet.image_size, unet.image_size),
    generator=generator,
)
image = image.to(torch_device)

# 3. Denoise
num_inference_steps = 50
eta = 0.0  # <- deterministic sampling
scheduler.set_timesteps(num_inference_steps)

for t in tqdm.tqdm(scheduler.timesteps):
    # 1. predict noise residual
    with torch.no_grad():
        residual = unet(image, t)["sample"]

    # 2. compute the previous image x_t-1 from the predicted residual
    prev_image = scheduler.step(residual, t, image, eta)["prev_sample"]

    # 3. set current image to prev_image: x_t -> x_t-1
    image = prev_image

# 4. process image to PIL
image_processed = image.cpu().permute(0, 2, 3, 1)  # (batch, channels, H, W) -> (batch, H, W, channels)
# map [-1, 1] to [0, 255]; clamp so out-of-range values do not wrap around in uint8
image_processed = ((image_processed + 1.0) * 127.5).clamp(0, 255)
image_processed = image_processed.numpy().astype(np.uint8)
image_pil = PIL.Image.fromarray(image_processed[0])

# 5. save image
image_pil.save("generated_image.png")
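For intuition about what a call like `scheduler.step(residual, t, image, eta)` computes when `eta = 0.0`, the sketch below writes out the deterministic DDIM update rule from the DDIM paper for a single scalar value. It is an assumption-laden illustration using only the standard library, not the library's implementation. `ddim_step` and its parameters are hypothetical names, with `alpha_bar_t` and `alpha_bar_prev` standing for the cumulative alpha products at the current and previous timestep.

```python
import math


def ddim_step(x_t, eps, alpha_bar_t, alpha_bar_prev):
    """One deterministic DDIM update (eta = 0) for a single scalar value."""
    # Estimate the clean sample x_0 implied by the predicted noise.
    x0_pred = (x_t - math.sqrt(1.0 - alpha_bar_t) * eps) / math.sqrt(alpha_bar_t)
    # Move that estimate to the previous, less noisy timestep along the same noise direction.
    return math.sqrt(alpha_bar_prev) * x0_pred + math.sqrt(1.0 - alpha_bar_prev) * eps


# Toy check: if the noise prediction is exact, one step lands exactly on the
# less noisy version of the same sample.
x0, eps = 0.3, -1.2
alpha_bar_t, alpha_bar_prev = 0.5, 0.8
x_t = math.sqrt(alpha_bar_t) * x0 + math.sqrt(1.0 - alpha_bar_t) * eps
x_prev = ddim_step(x_t, eps, alpha_bar_t, alpha_bar_prev)
expected = math.sqrt(alpha_bar_prev) * x0 + math.sqrt(1.0 - alpha_bar_prev) * eps
```

With a perfect noise prediction, `x_prev` matches `expected` up to floating-point error. In practice the prediction comes from the UNet, so each step is only approximate.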

Example for Unconditional Image generation LDM: